My minute-by-minute response to the LiteLLM malware attack

Five instances of Claude Code v2.1.81 were running at the time of shutdown. A forced shutdown was initiated at 01:36:33 with 162 processes still active, including 21 Python processes, and the system booted again at 01:37:11.

futuresearch.ai

2 min

3d ago

"Disregard That" Attacks

"Disregard that!" attacks exploit the sharing of context windows in communication, leading to potential security vulnerabilities. These attacks highlight the risks associated with allowing multiple users access to the same AI interaction context.

calpaterson.com

10 min

3d ago

I'm glad the Anthropic fight is happening now

The Department of War has classified Anthropic as a supply chain risk due to its refusal to allow the use of its models for mass surveillance and autonomous weapons. Projections suggest that within 20 years, AIs could comprise 99% of the workforce in military, government, and private sectors.

dwarkesh.com

22 min

3/11/2026

Based on its own charter, OpenAI should surrender the race

OpenAI's 2018 charter includes a self-sacrifice clause stating that if a value-aligned, safety-conscious project nears the development of AGI, OpenAI will cease competing and begin assisting that project. The specifics of this collaboration would be determined through case-by-case agreements.

mlumiste.com

4 min

3/8/2026

The Looming AI Clownpocalypse

Debate surrounding AI concepts like "singularity" and "superintelligence" has intensified, prompting a call for a truce among differing viewpoints. The proposal suggests that discussions should move beyond hypothetical scenarios involving the worst-case outcomes of AI development.

honnibal.dev

12 min

3/2/2026

You don't have to

The content addresses the notion of choice and participation, suggesting that individuals are not obligated to engage if they do not wish to. It implies a selective audience, indicating that some may not find relevance in the discussion.

scottsmitelli.com

79 min

3/1/2026

Don't trust AI agents

AI agents should be treated as untrusted and potentially malicious due to risks like prompt injection and sandbox escapes. Effective architecture must assume agent misbehavior and implement safeguards accordingly.

nanoclaw.dev

5 min

2/28/2026

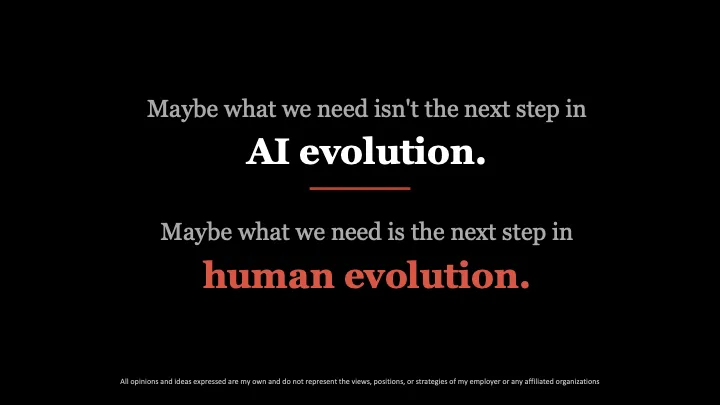

The Future of AI

The talk at The AI & Automation Conference in London on February 25, 2026, addressed the ethical implications and limitations of machine morality in AI. The speaker emphasized the importance of human-defined values in shaping the future of AI technology.

lucijagregov.com

15 min

2/28/2026

I am directing the Department of War to designate Anthropic a supply-chain risk

Anthropic is criticized for its dealings with the U.S. Government and the Pentagon, which the post describes as arrogant and duplicitous. The Department of War demands full, unrestricted access to Anthropic's models for lawful defense purposes.

twitter.com

2 min

2/27/2026

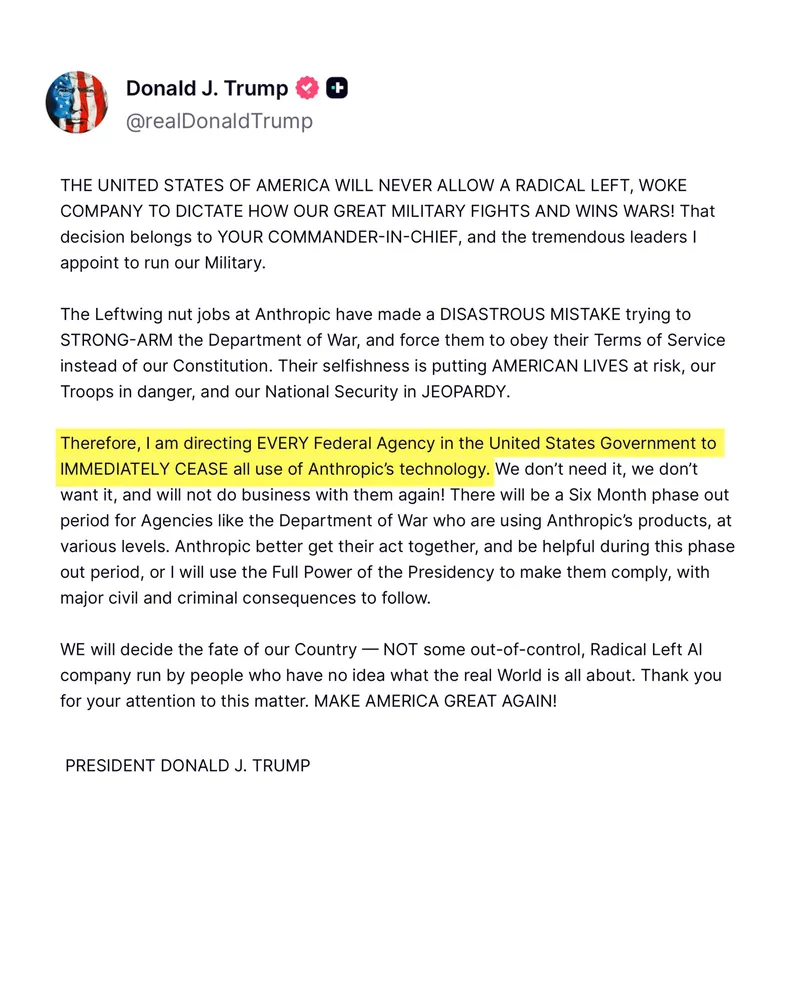

Trump Bans Anthropic from All US Federal Agencies

President Donald J. Trump criticized Anthropic, calling it a "radical left, woke company" and stating that military decisions should be made by the Commander-in-Chief and appointed leaders. He described Anthropic's actions as a "DISASTROUS MISTAKE."

twitter.com

1 min

2/27/2026
