Arm AGI CPU

Arm has announced the Arm AGI CPU, a new class of production-ready silicon built on the Arm Neoverse platform, aimed at enhancing AI infrastructure. This marks Arm's first foray into delivering its own silicon products, expanding the options for customers deploying Arm compute.

newsroom.arm.com

8 min

5d ago

Running a One Trillion-Parameter LLM Locally on AMD Ryzen AI Max+ Cluster

A small-scale distributed inference cluster built on AMD’s Ryzen™ AI Max+ AI PC platform can run a one-trillion-parameter large language model. A four-node cluster of Framework Desktop systems demonstrates local inference with the open-source Kimi K2.5 model.

amd.com

14 min

3/1/2026

Crawling a billion web pages in just over 24 hours, in 2025

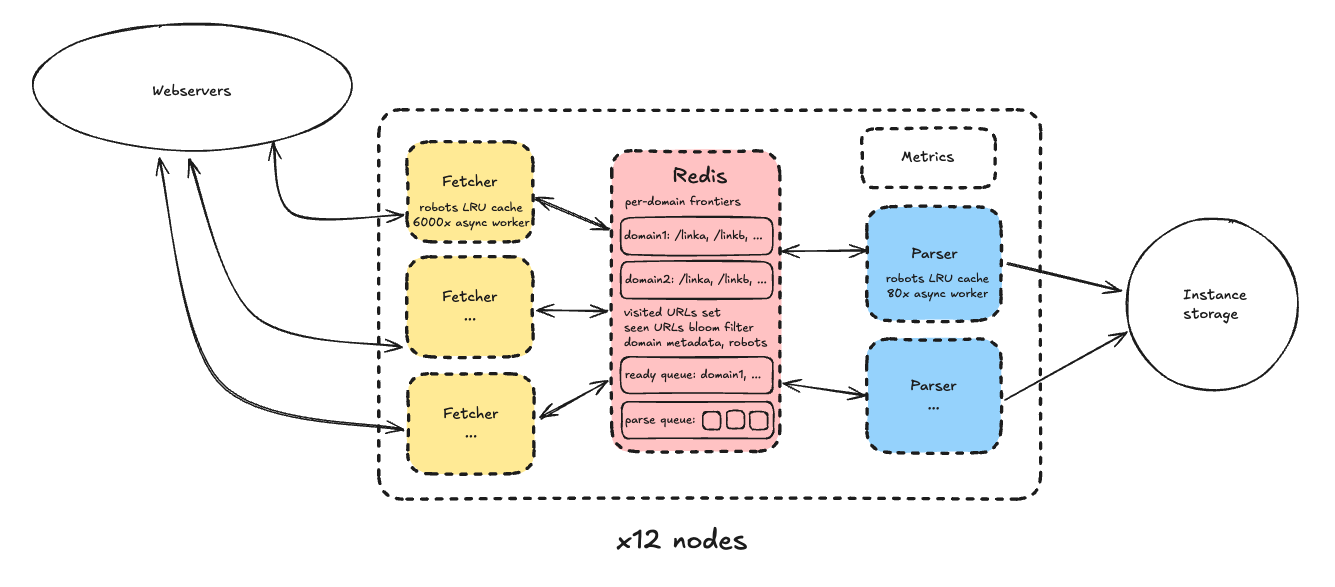

Crawling 1.005 billion web pages took 25.5 hours and cost $462. Hardware advances since 2012, such as multi-core CPUs and NVMe solid-state drives, have made web crawling significantly more efficient.

andrewkchan.dev

15 min

2/23/2026

