$500 GPU outperforms Claude Sonnet on coding benchmarks

A.T.L.A.S achieves a 74.6% pass rate on LiveCodeBench with a frozen 14B model on a single consumer GPU, up from 36-41% in V2. The system uses constraint-driven generation and self-verified iterative refinement, which lets a smaller model compete with larger ones at lower cost and without fine-tuning.

github.com

8 min

2d ago

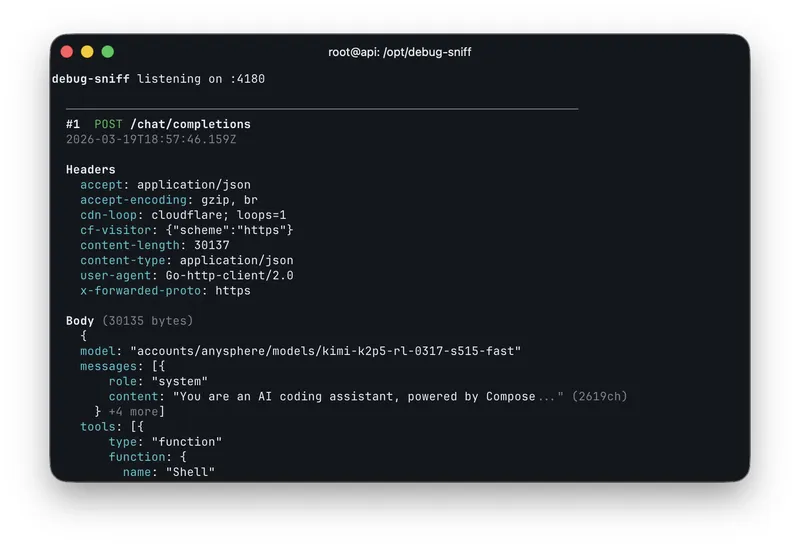

Cursor Composer 2 is just Kimi K2.5 with RL

Fynn discovered a model ID, "kimi-k2p5-rl-0317-s515-fast," while experimenting with the OpenAI base URL in Cursor. The ID suggests that Composer 2 is Kimi K2.5 further trained with reinforcement learning (RL).

twitter.com

1 min

3/20/2026

NanoGPT Slowrun: 10x Data Efficiency with Infinite Compute

Posted by sdpmas. Score: 89 points. Comments: 14.

qlabs.sh

1 min

3/19/2026

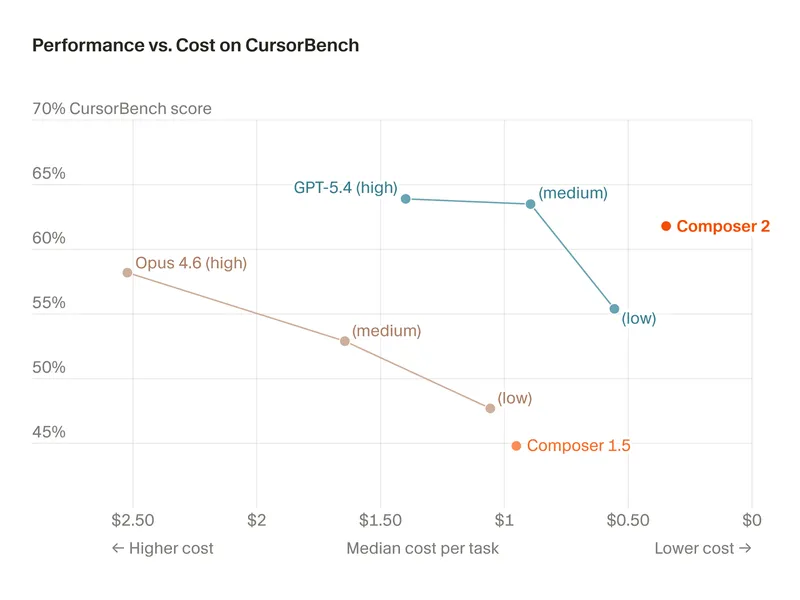

Composer 2

Composer 2 is now available in Cursor, offering frontier-level coding intelligence at $0.50 per million input tokens and $2.50 per million output tokens. The model shows significant improvements across benchmarks, including Terminal-Bench 2.0 and SWE-bench Multilingual.

cursor.com

2 min

3/19/2026

GPT-5.4 Mini and Nano

GPT-5.4 mini and nano are newly released small models that enhance performance for high-volume workloads. GPT-5.4 mini offers over 2x faster processing and improved capabilities in coding, reasoning, multimodal understanding, and tool use, nearing the performance of the larger GPT-5.4 model in various evaluations.

openai.com

5 min

3/17/2026

Can I run AI locally?

Detect your hardware and find out which AI models you can run locally. GPU, CPU, and RAM analysis in your browser.

canirun.ai

1 min

3/13/2026

How to run Qwen 3.5 locally

Qwen3.5 is a family of multimodal hybrid reasoning LLMs from Alibaba, featuring models such as Qwen3.5-35B-A3B, 27B, 122B-A10B, and 397B-A17B, as well as smaller versions like Qwen3.5-0.8B, 2B, 4B, and 9B. These models support a 256K context across 201 languages and deliver strong performance for their sizes.

unsloth.ai

13 min

3/7/2026

GLiNER2: Unified Schema-Based Information Extraction

GLiNER2 is a unified 205-million-parameter model for Named Entity Recognition, Text Classification, Structured Data Extraction, and Relation Extraction. It supports efficient CPU-based inference without complex pipelines or external API dependencies.

github.com

18 min

3/6/2026

Right-sizes LLM models to your system's RAM, CPU, and GPU

llmfit is a terminal tool that right-sizes large language models (LLMs) to a given machine by assessing its RAM, CPU, and GPU capabilities. It offers an interactive TUI and a classic CLI mode, supports multi-GPU setups, and provides dynamic quantization selection and speed estimation.

github.com

15 min

3/2/2026

Nano Banana 2: Google's latest AI image generation model

Nano Banana 2, based on the Gemini 3.1 Flash Image model, combines advanced capabilities with high-speed performance for image generation and editing. It integrates features from both the original Nano Banana and Nano Banana Pro, providing users with enhanced creative control and intelligence.

blog.google

4 min

2/26/2026
