Accelerating Gemma 4: faster inference with multi-token prediction drafters

Gemma 4 now features Multi-Token Prediction (MTP) drafters, enhancing inference speed and efficiency. This update aims to improve performance across developer workstations, mobile devices, and cloud environments.

blog.google

4 min

5/5/2026

TurboQuant: A first-principles walkthrough

TurboQuant compresses high-dimensional AI vectors to 2–4 bits per number with minimal distortion and no memory overhead. This method employs random rotation to transform input vectors efficiently without the need for training or calibration.

arkaung.github.io

24 min

4/27/2026

Universal Claude.md – cut Claude output tokens

The universal CLAUDE.md file reduces Claude's output verbosity by approximately 63% without requiring any code changes. While it primarily addresses output behavior, most costs associated with Claude stem from input tokens rather than output.

github.com

7 min

3/31/2026

TurboQuant: Redefining AI efficiency with extreme compression

TurboQuant introduces advanced quantization algorithms that facilitate significant compression of large language models and vector search engines. These algorithms enhance AI efficiency by optimizing how models process and understand information through vector representation.

research.google

7 min

3/25/2026

IRS lost 40% of IT staff, 80% of tech leaders in 'efficiency' shakeup

The IRS has cut 40% of its IT staff and 80% of its tech leaders during a major reorganization. The restructuring is the agency's most significant in two decades, according to its CIO, Kaschit Pandya.

theregister.com

3 min

2/19/2026

Productivity gains from AI coding assistants haven’t budged past 10% – survey

93% of developers use AI coding assistants, according to research from Laura Tacho, CTO at DX. Despite high usage rates, overall productivity gains among developers have remained at about 10%.

shiftmag.dev

5 min

2/19/2026

How AI is affecting productivity and jobs in Europe

Europe is at a crossroads regarding AI, with optimists predicting a significant boost to productivity and economic growth, while skeptics caution about barriers to adoption and potential increases in inequality. Policymakers must navigate these competing narratives to harness AI's potential effectively.

cepr.org

10 min

2/19/2026

Thousands of CEOs just admitted AI had no impact on employment or productivity

A recent survey revealed that thousands of CEOs believe AI has not significantly impacted employment or productivity. This sentiment has led economists to revisit Robert Solow's paradox regarding the unexpected slowdown in productivity growth following technological advancements in the Information Age.

fortune.com

5 min

2/18/2026

Haskell for all: Beyond agentic coding

Agentic coding tools often produce unsatisfactory results, reducing both productivity and users' comfort with the codebase. Interview candidates who use these tools tend to perform worse than those who do not.

haskellforall.com

11 min

2/8/2026

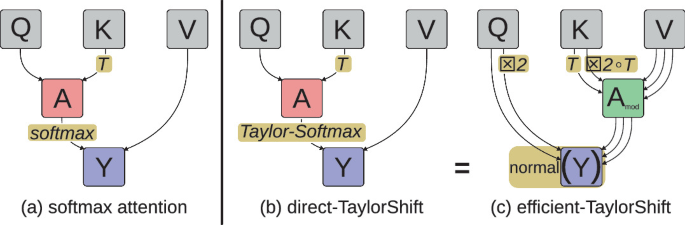

Attention at Constant Cost per Token via Symmetry-Aware Taylor Approximation

Self-attention mechanisms in Transformers typically incur costs that increase with context length, leading to higher demands for storage, compute, and energy. A new method using symmetry-aware Taylor approximation aims to maintain constant cost per token for self-attention, potentially alleviating these resource demands.

arxiv.org

2 min

2/4/2026
