Google Chrome silently installs a 4 GB AI model on your device without consent

Google Chrome installs a 4 GB AI model on user devices without consent, impacting privacy and potentially increasing climate costs at a billion-device scale. The installation happens silently, and the model is re-installed if the user removes it, bypassing traditional opt-out options.

thatprivacyguy.com

21 min

5/5/2026

White House Considers Vetting A.I. Models Before They Are Released

The Trump administration is considering implementing government oversight on artificial intelligence models prior to their public release. This marks a shift from the previous noninterventionist stance towards AI technology.

nytimes.com

2 min

5/4/2026

DeepSeek V4–almost on the frontier, a fraction of the price

DeepSeek has released two preview models in its V4 series: DeepSeek-V4-Pro and DeepSeek-V4-Flash. The Pro model has 1.6 trillion total parameters with 49 billion active, while the Flash model has 284 billion total parameters with 13 billion active. Both are Mixture of Experts models with a 1 million token context, released under the MIT license.

simonwillison.net

3 min

5/1/2026

Microsoft VibeVoice: Open-Source Frontier Voice AI

VibeVoice ASR is an open-source speech-to-text model that processes 60-minute long-form audio in a single pass, producing structured transcriptions with speaker identification, timestamps, and content. It is now integrated into the Hugging Face Transformers library for easy project implementation.

github.com

4 min

4/28/2026

Eden AI – European Alternative to OpenRouter

Eden AI provides a single API to access various leading AI models, including LLMs and specialized models for tasks like speech, vision, OCR, and translation. The platform features smart routing and fallbacks, allowing developers to standardize integration across different AI providers without modifying their code.

edenai.co

1 min

4/26/2026

Study: Back-to-basics approach can match or outperform AI in language analysis

A grammar-based method called LambdaG can match or outperform advanced AI systems in identifying text authorship. This method utilizes patterns in grammar and sentence construction, providing comparable accuracy with greater transparency and lower computational costs.

manchester.ac.uk

3 min

4/15/2026

Cohere Transcribe: Speech Recognition

Cohere has launched Transcribe, an open-source automatic speech recognition (ASR) model designed for high accuracy in practical conditions. The model supports various applications, including meeting transcription, speech analytics, and real-time customer support.

cohere.com

5 min

3/31/2026

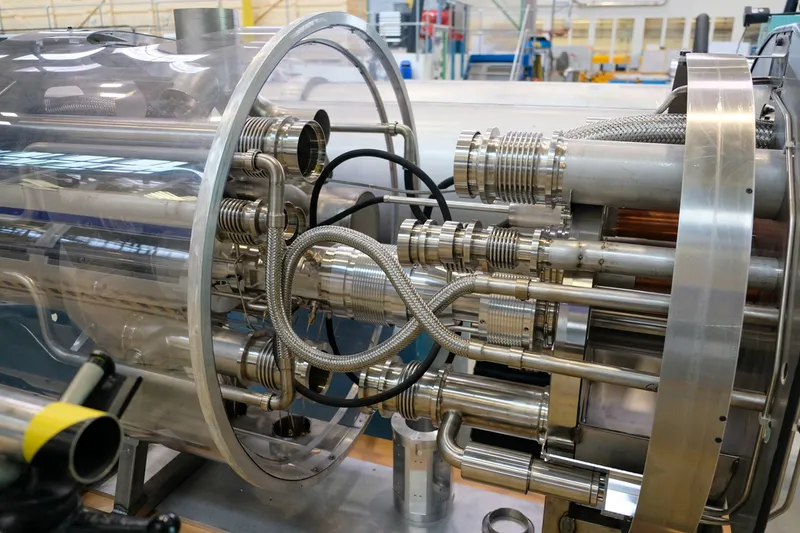

CERN uses tiny AI models burned into silicon for real-time LHC data filtering

CERN is utilizing tiny AI models burned into silicon chips for real-time filtering of data generated by the Large Hadron Collider. The LHC produces approximately 40,000 exabytes of raw data annually.

theopenreader.org

6 min

3/28/2026

A leak reveals that Anthropic is testing a more capable AI model "Claude Mythos"

Anthropic is testing a new AI model named 'Mythos,' which is claimed to be the most powerful model the company has developed to date. Early access customers are currently trialing this model, which represents a significant advancement in AI performance.

fortune.com

7 min

3/27/2026

$500 GPU outperforms Claude Sonnet on coding benchmarks

A.T.L.A.S achieves a 74.6% pass rate on LiveCodeBench with a frozen 14B model on a single consumer GPU, up from 36-41% in V2. The system uses constraint-driven generation and self-verified iterative refinement, allowing a smaller model to compete with larger models at a reduced cost without fine-tuning.

github.com

8 min

3/27/2026

