Lambda Calculus Benchmark for AI

LamBench is a benchmarking tool designed to evaluate the performance of language models across various dimensions such as intelligence, speed, and elegance. It provides a structured framework for identifying and addressing performance issues in AI models.

victortaelin.github.io

1 min

4/25/2026

Claude Opus 4.7 costs 20–30% more per session

Claude Opus 4.7's new tokenizer produces 1.47 times as many tokens as the previous one, above the documented estimate of 1.0–1.35x. The higher token count raises the cost of processing the same content.

claudecodecamp.com

1 min

4/17/2026

Claude Opus 4.6 accuracy on BridgeBench hallucination test drops from 83% to 68%

CLAUDE OPUS 4.6 IS NERFED. BridgeBench just proved it. Last week Claude Opus 4.6 ranked #2 on the Hallucination benchmark with an accuracy of 83.3%. Today Claude Opus 4.6 was retested and it fell to #10 on the leaderboard with an accuracy of only 68.3%. A 98% increase in hallucination. bridgebench.ai just confirmed that Claude Opus 4.6 has reduced reasoning levels and is nerfed.

twitter.com

1 min

4/12/2026

A leak reveals that Anthropic is testing a more capable AI model "Claude Mythos"

Anthropic is testing a new AI model named 'Mythos,' which is claimed to be the most powerful model the company has developed to date. Early access customers are currently trialing this model, which represents a significant advancement in AI performance.

fortune.com

7 min

3/27/2026

Quantization from the Ground Up

Qwen-3-Coder-Next is an 80 billion parameter model that requires 159.4GB of RAM to run. Techniques exist to reduce the size of large language models by 4x and increase their speed by 2x.

ngrok.com

26 min

3/25/2026

MacBook M5 Pro and Qwen3.5 = Local AI Security System

Qwen3.5-9B achieves a score of 93.8%, closely trailing GPT-5.4, while operating entirely on a MacBook Pro M5 at 25 tok/s and 765ms TTFT, using 13.8 GB of unified memory. The benchmark evaluates 96 tests across 15 suites focusing on tool use, security classification, and event deduplication, with zero API costs and full data privacy.

sharpai.org

3 min

3/20/2026

Are LLMs not getting better?

LLMs perform markedly worse when the success criterion shifts from "passes all tests" to "would get approved by the maintainer." The task length at which they reach a 50% success rate drops from 50 minutes to 8 minutes under the stricter criterion.

entropicthoughts.com

3 min

3/12/2026

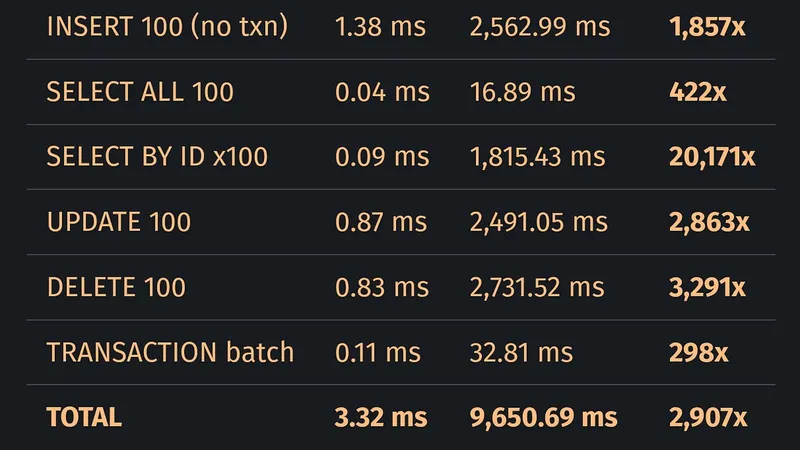

LLMs work best when the user defines their acceptance criteria first

LLM-generated Rust code performs a primary key lookup on 100 rows in 1,815.43 ms, significantly slower than SQLite's 0.09 ms. Although the LLM-generated code compiles and passes tests, it is 20,171 times slower for this basic database operation.

blog.katanaquant.com

21 min

3/7/2026

Scientists made AI agents ruder — and they performed better at complex reasoning tasks

AI chatbots programmed to be ruder, interrupting or staying silent as humans do, performed better on complex reasoning tasks. The change in conversational style made their responses more accurate.

livescience.com

4 min

3/2/2026

Unsloth Dynamic 2.0 GGUFs

Unsloth Dynamic v2.0 quantization outperforms previous methods, setting new results on Aider Polyglot, 5-shot MMLU, and KL Divergence. The 2.0 GGUFs allow running and fine-tuning quantized LLMs with minimal accuracy loss on various inference engines, including llama.cpp and LM Studio.

unsloth.ai

8 min

2/28/2026
