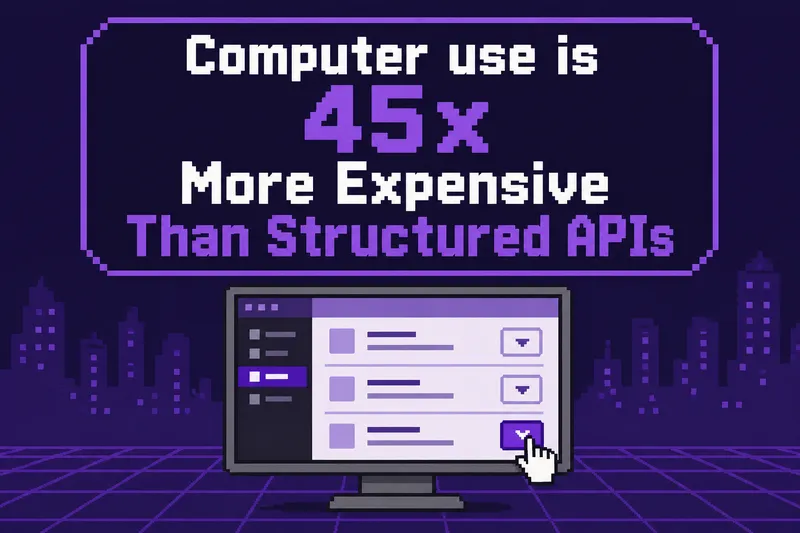

Computer Use Is 45x More Expensive Than Structured APIs

Computer use requires 53 steps and 551,000 tokens, while structured APIs only need 8 calls and 12,000 tokens. This results in computer use being 45 times more expensive than using structured APIs.

reflex.dev

7 min

5/5/2026

Lambda Calculus Benchmark for AI

LamBench is a benchmarking tool designed to evaluate the performance of language models across various dimensions such as intelligence, speed, and elegance. It provides a structured framework for identifying and addressing performance issues in AI models.

victortaelin.github.io

1 min

4/25/2026

StepFun 3.5 Flash is #1 cost-effective model for OpenClaw tasks (300 battles)

OpenClaw Arena provides a public benchmark to assess AI agents' ability to complete real workflows. Users can compare model performance and cost-effectiveness on actual agent tasks.

app.uniclaw.ai

1 min

4/1/2026

MacBook M5 Pro and Qwen3.5 = Local AI Security System

Qwen3.5-9B achieves a score of 93.8%, closely trailing GPT-5.4, while operating entirely on a MacBook Pro M5 at 25 tok/s and 765ms TTFT, using 13.8 GB of unified memory. The benchmark evaluates 96 tests across 15 suites focusing on tool use, security classification, and event deduplication, with zero API costs and full data privacy.

sharpai.org

3 min

3/20/2026

Computer Use Is 45x More Expensive Than Structured APIs

Computer use requires 53 steps and 551,000 tokens, while structured APIs only need 8 calls and 12,000 tokens. This results in computer use being 45 times more expensive than using structured APIs.

reflex.dev

7 min

5/5/2026

StepFun 3.5 Flash is #1 cost-effective model for OpenClaw tasks (300 battles)

OpenClaw Arena provides a public benchmark to assess AI agents' ability to complete real workflows. Users can compare model performance and cost-effectiveness on actual agent tasks.

app.uniclaw.ai

1 min

4/1/2026

Lambda Calculus Benchmark for AI

LamBench is a benchmarking tool designed to evaluate the performance of language models across various dimensions such as intelligence, speed, and elegance. It provides a structured framework for identifying and addressing performance issues in AI models.

victortaelin.github.io

1 min

4/25/2026

MacBook M5 Pro and Qwen3.5 = Local AI Security System

Qwen3.5-9B achieves a score of 93.8%, closely trailing GPT-5.4, while operating entirely on a MacBook Pro M5 at 25 tok/s and 765ms TTFT, using 13.8 GB of unified memory. The benchmark evaluates 96 tests across 15 suites focusing on tool use, security classification, and event deduplication, with zero API costs and full data privacy.

sharpai.org

3 min

3/20/2026

Computer Use Is 45x More Expensive Than Structured APIs

Computer use requires 53 steps and 551,000 tokens, while structured APIs only need 8 calls and 12,000 tokens. This results in computer use being 45 times more expensive than using structured APIs.

reflex.dev

7 min

5/5/2026

MacBook M5 Pro and Qwen3.5 = Local AI Security System

Qwen3.5-9B achieves a score of 93.8%, closely trailing GPT-5.4, while operating entirely on a MacBook Pro M5 at 25 tok/s and 765ms TTFT, using 13.8 GB of unified memory. The benchmark evaluates 96 tests across 15 suites focusing on tool use, security classification, and event deduplication, with zero API costs and full data privacy.

sharpai.org

3 min

3/20/2026

Lambda Calculus Benchmark for AI

LamBench is a benchmarking tool designed to evaluate the performance of language models across various dimensions such as intelligence, speed, and elegance. It provides a structured framework for identifying and addressing performance issues in AI models.

victortaelin.github.io

1 min

4/25/2026

StepFun 3.5 Flash is #1 cost-effective model for OpenClaw tasks (300 battles)

OpenClaw Arena provides a public benchmark to assess AI agents' ability to complete real workflows. Users can compare model performance and cost-effectiveness on actual agent tasks.

app.uniclaw.ai

1 min

4/1/2026

No more articles to load