Learning the Integral of a Diffusion Model

Sampling from a diffusion model involves an iterative process where a denoiser estimates the tangent direction to a path through input space. Neural networks can be trained to directly predict the integral that transforms samples from a simple noise distribution into samples from a target distribution.

sander.ai

83 min

5/6/2026

Introspective Diffusion Language Models

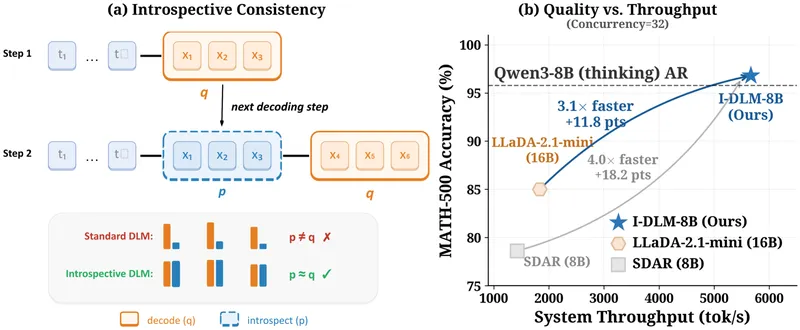

Diffusion language models (DLMs) enable parallel token generation, potentially overcoming the sequential limitations of autoregressive (AR) decoding. However, DLMs currently underperform AR models in quality because they lack introspective consistency, the property by which AR models agree with their own generated outputs.

introspective-diffusion.github.io

4 min

4/14/2026

Hamilton-Jacobi-Bellman Equation: Reinforcement Learning and Diffusion Models

Richard Bellman's 1952 paper established the foundation for optimal control and reinforcement learning. His later work in the 1950s connected continuous-time systems to a physical result published in the 1840s, formulating the optimality condition as a partial differential equation (PDE).

dani2442.github.io

16 min

3/30/2026
