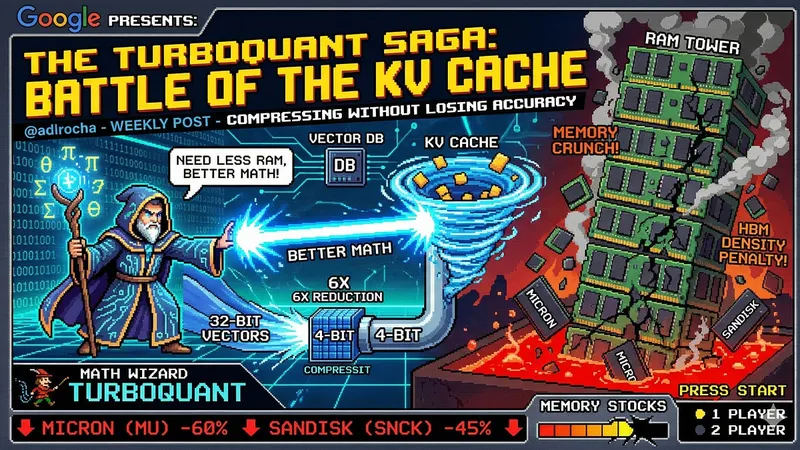

What if AI doesn't need more RAM but better math?

TurboQuant compresses the KV cache used in LLM inference, improving efficiency without sacrificing accuracy. It targets two pressures on AI memory: HBM density penalties and DRAM price pressure.

adlrocha.substack.com

10 min

3/29/2026

David Patterson: Challenges and Research Directions for LLM Inference Hardware

Large Language Model (LLM) inference is constrained mainly by memory and interconnect rather than by compute. The autoregressive Decode phase of Transformer models sets LLM inference apart from training and makes it harder.

arxiv.org

2 min

1/25/2026
