TurboQuant: Redefining AI efficiency with extreme compression

TurboQuant introduces quantization algorithms that substantially compress large language models and vector search engines. The algorithms improve efficiency by shrinking the vector representations these systems store and process.

research.google

7 min

4d ago

LLM Neuroanatomy II: Modern LLM Hacking and Hints of a Universal Language?

Duplicating a block of seven middle layers in Qwen2-72B without weight changes or training produced a top model on the HuggingFace Open LLM Leaderboard. Since mid-2024, several strong open-source models have emerged, including Qwen3.5, MiniMax, and GLM-4.

dnhkng.github.io

20 min

5d ago

Epoch confirms GPT-5.4 Pro solved a frontier math open problem

A Ramsey-style problem on hypergraphs has been solved by Kevin Barreto and Liam Price using GPT-5.4 Pro. The solution has been confirmed by Will Brian and will be published, along with a transcript of the original conversation.

epoch.ai

5 min

5d ago

Language Model Teams as Distributed Systems

Large language models (LLMs) are being deployed in teams, raising questions about their effectiveness, optimal team size, structural impact on performance, and comparative advantages over individual models. A principled framework is needed to address these key issues in the context of multiagent systems.

arxiv.org

2 min

3/16/2026

Speed at the cost of quality: Study of use of Cursor AI in open source projects (2025)

Cursor AI, which relies on large language models (LLMs), speeds up development in open-source projects in the short term. However, this acceleration may come at the cost of greater code complexity and lower quality, making long-term maintenance harder.

arxiv.org

2 min

3/16/2026

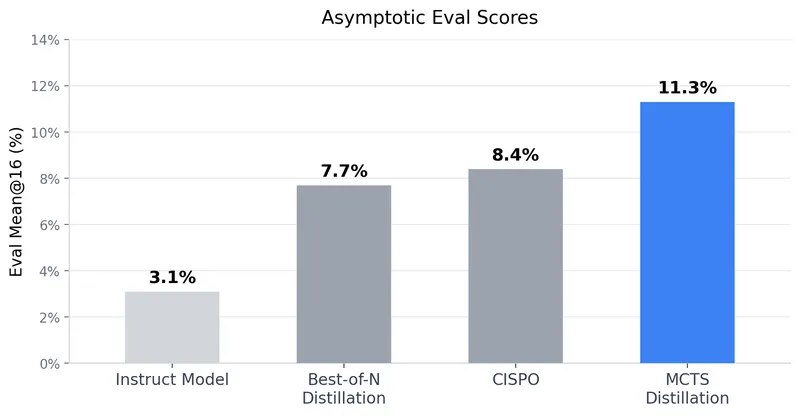

Tree Search Distillation for Language Models Using PPO

Tree Search Distillation utilizes Proximal Policy Optimization (PPO) to enhance language models by integrating a test-time search mechanism similar to that used in game-playing neural networks like AlphaZero. The method aims to distill a stronger, augmented policy back into the language model, addressing the limitations observed in previous attempts with Monte Carlo Tree Search (MCTS).

ayushtambde.com

10 min

3/15/2026

Document poisoning in RAG systems: How attackers corrupt AI's sources

Three fabricated documents were injected into a ChromaDB knowledge base, resulting in a RAG system inaccurately reporting a company's Q4 2025 revenue as $8.3M, a 47% decrease year-over-year, along with a planned workforce reduction. This process was completed in under three minutes on a MacBook Pro without GPU support or cloud services.

aminrj.com

13 min

3/12/2026

SWE-CI: Evaluating Agent Capabilities in Maintaining Codebases via CI

Agents powered by large language models can automate software engineering tasks, including static bug fixing, as shown by benchmarks like SWE-bench. Real-world software development, however, requires navigating complex requirements beyond these capabilities.

arxiv.org

2 min

3/8/2026

Labor market impacts of AI: A new measure and early evidence

A new measure of AI displacement risk, termed observed exposure, combines theoretical LLM capability with real-world usage data, emphasizing automated work-related uses. Occupations with higher observed exposure are projected to experience slower growth through 2034, with workers in these professions more likely to face displacement.

anthropic.com

20 min

3/5/2026

LLMs can unmask pseudonymous users at scale with surprising accuracy

AI techniques can analyze burner accounts on social media to accurately identify pseudonymous users. Experiments show a higher success rate in correlating individuals with accounts across multiple platforms compared to traditional deanonymization methods.

arstechnica.com

2 min

3/4/2026
