Billion-Parameter Theories

Billion-parameter theories aim to explain complex phenomena in the universe using concise mathematical formulations. Historical explanations of natural events transitioned from mystical interpretations to scientific inquiry with succinct equations like F=ma and E=mc².

worldgov.org

10 min

3/10/2026

Speculative Speculative Decoding (SSD)

Speculative decoding accelerates autoregressive inference by using a fast draft model to predict the upcoming tokens of a slower target model. The target model then verifies those predictions in parallel with a single forward pass, easing the sequential dependency bottleneck.

arxiv.org

2 min

3/4/2026
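The draft-then-verify loop described in the summary above can be sketched as follows. This is a minimal illustration of plain greedy speculative decoding, not the method of the linked paper. The names `speculative_step`, `generate`, `toy_target`, and `toy_draft` are hypothetical, the "models" are toy functions over integer tokens, and the verification loop stands in for what a real system would do in one batched forward pass of the target model.

```python
from typing import Callable, List

# Assumption: a "model" is a function mapping a token prefix to its greedy next token.
Model = Callable[[List[int]], int]


def speculative_step(target: Model, draft: Model, prefix: List[int], k: int) -> List[int]:
    """Return the tokens produced by one draft-and-verify round."""
    # 1. The cheap draft model proposes k tokens, one after another.
    proposed: List[int] = []
    ctx = list(prefix)
    for _ in range(k):
        tok = draft(ctx)
        proposed.append(tok)
        ctx.append(tok)

    # 2. The target model checks each proposed position.
    #    (Written as a loop here; a real system scores all positions at once.)
    accepted: List[int] = []
    ctx = list(prefix)
    for tok in proposed:
        expected = target(ctx)
        if tok != expected:
            # First mismatch: keep the target's token and discard the rest.
            accepted.append(expected)
            return accepted
        accepted.append(tok)
        ctx.append(tok)

    # 3. Every draft token matched, so the target's pass yields one extra token.
    accepted.append(target(ctx))
    return accepted


def generate(target: Model, draft: Model, prompt: List[int], n_new: int, k: int = 4) -> List[int]:
    """Generate n_new tokens after a non-empty prompt using repeated rounds."""
    out = list(prompt)
    while len(out) - len(prompt) < n_new:
        out.extend(speculative_step(target, draft, out, k))
    return out[: len(prompt) + n_new]


# Hypothetical toy models: the target counts upward modulo 10,
# and the draft agrees except right after the token 4.
def toy_target(ctx: List[int]) -> int:
    return (ctx[-1] + 1) % 10


def toy_draft(ctx: List[int]) -> int:
    return 0 if ctx[-1] == 4 else (ctx[-1] + 1) % 10


if __name__ == "__main__":
    print(generate(toy_target, toy_draft, [0], n_new=12))
```

In this greedy variant the output always equals what the target model alone would produce; the draft only changes how many target calls are needed. Sampling-based variants accept or reject draft tokens probabilistically instead of by exact match.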

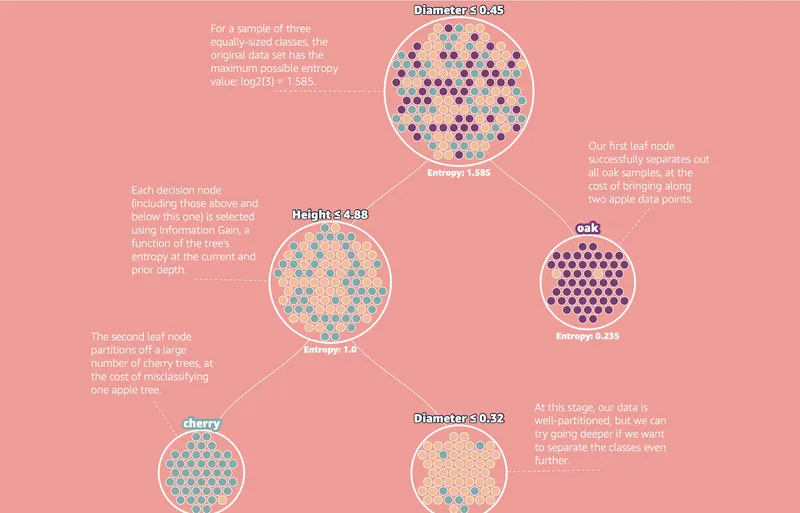

Decision trees – the unreasonable power of nested decision rules

Decision trees create sequential rules that split data into distinct regions for classification. Entropy measures how mixed the classes in a region are, and splits are chosen to produce regions with cleaner class separation.

mlu-explain.github.io

6 min

3/1/2026
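As a rough illustration of how entropy can guide a split, the sketch below computes Shannon entropy for a set of class labels and the information gain of a candidate split. The function names `entropy` and `information_gain` are illustrative choices, not taken from the linked article.

```python
import math
from collections import Counter
from typing import Hashable, Sequence


def entropy(labels: Sequence[Hashable]) -> float:
    """Shannon entropy, in bits, of the class labels in one region."""
    total = len(labels)
    if total == 0:
        return 0.0
    counts = Counter(labels)
    return -sum((c / total) * math.log2(c / total) for c in counts.values())


def information_gain(
    parent: Sequence[Hashable],
    left: Sequence[Hashable],
    right: Sequence[Hashable],
) -> float:
    """Entropy reduction achieved by splitting parent into left and right."""
    n = len(parent)
    weighted = (len(left) / n) * entropy(left) + (len(right) / n) * entropy(right)
    return entropy(parent) - weighted


if __name__ == "__main__":
    parent = ["a", "a", "b", "b"]
    # A split that separates the classes perfectly removes all uncertainty.
    print(information_gain(parent, ["a", "a"], ["b", "b"]))
    # A split that leaves both sides evenly mixed gains nothing.
    print(information_gain(parent, ["a", "b"], ["a", "b"]))
```

A tree builder would evaluate many candidate splits this way and keep the one with the highest gain at each node.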

Looks like it is happening

Data from December 2022 to December 2025 shows a steady increase in submissions, with numbers rising from 800 in 2022 to 855 in 2025. From January 1 to February 15, 2026, submissions reached 617, indicating a year-over-year growth trend.

math.columbia.edu

2 min

2/24/2026

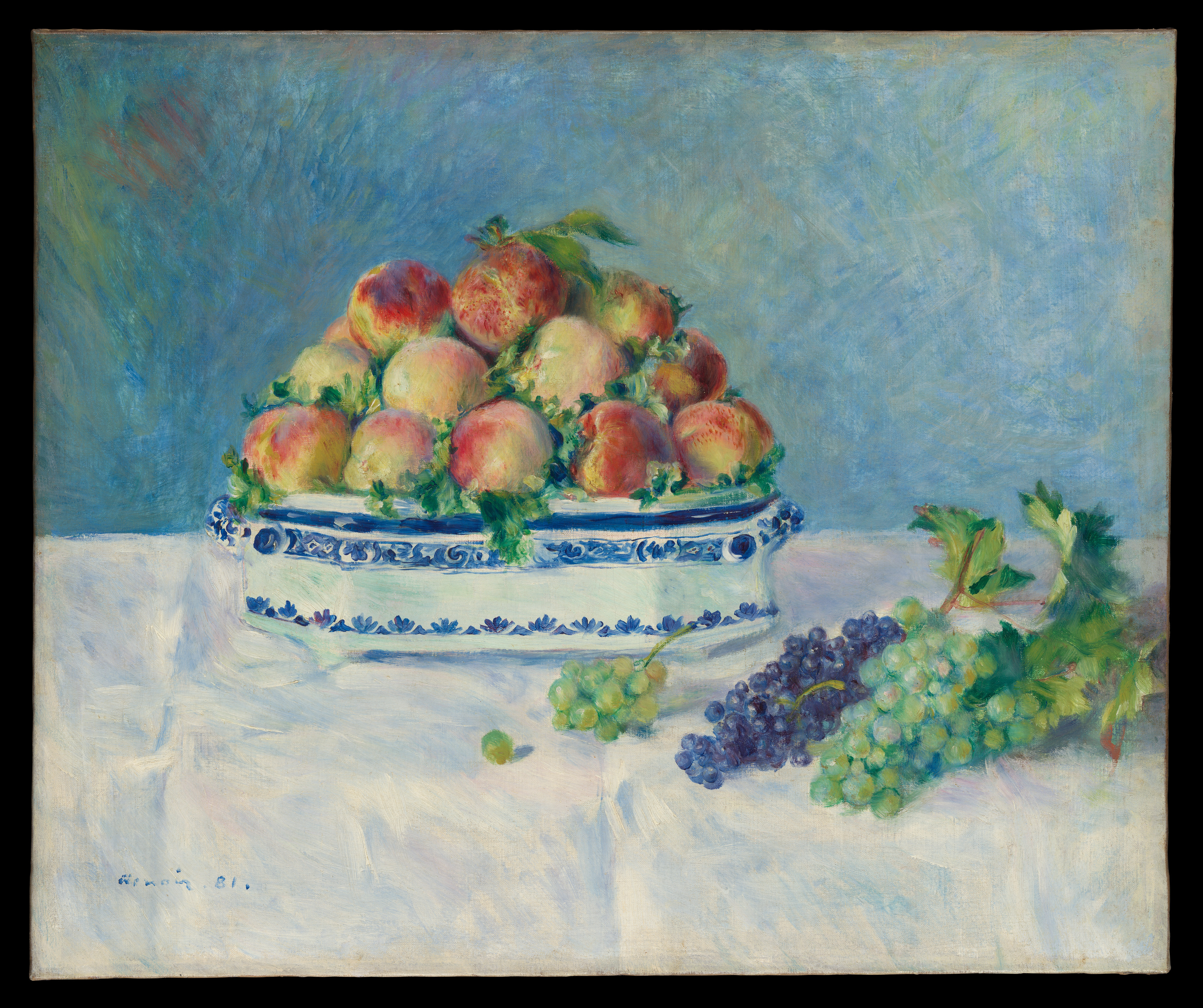

AI Reveals Unexpected New Physics in the Fourth State of Matter

Researchers at Emory University have used a machine learning technique to uncover unexpected features of non-reciprocal forces in many-body systems. The study combines a neural network with laboratory measurements from a dusty plasma, enhancing understanding of the fourth state of matter.

scitechdaily.com

8 min

2/24/2026

Fast KV Compaction via Attention Matching

Fast KV Compaction via Attention Matching addresses the limitations of key-value cache size in scaling language models for long contexts. It proposes a method that improves context management without the lossy effects of traditional summarization techniques.

arxiv.org

2 min

2/20/2026

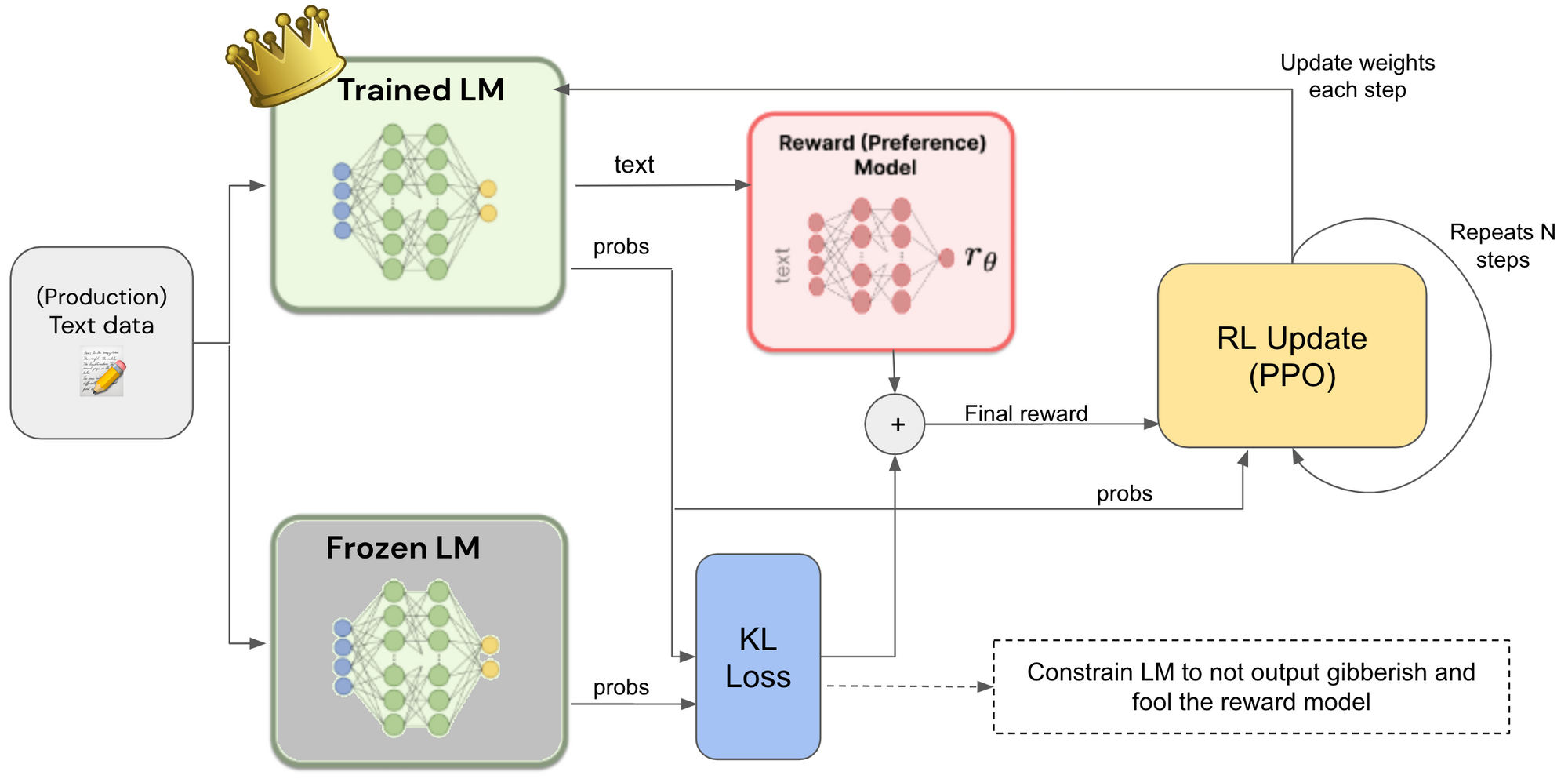

Reinforcement Learning from Human Feedback

Reinforcement learning from human feedback (RLHF) is a key technique for deploying advanced machine learning systems. A new book provides an introduction to the core methods of RLHF for readers with a quantitative background.

arxiv.org

2 min

2/7/2026
