Flash-MoE: Running a 397B Parameter Model on a Laptop

Flash-Moe is a pure C/Metal inference engine that runs the Qwen3.5-397B-A17B model, a 397 billion parameter Mixture-of-Experts model, on a MacBook Pro with 48GB RAM at over 4.4 tokens per second. The 209GB model streams from SSD using a custom Metal compute pipeline without relying on Python or other frameworks.

github.com

6 min

3/22/2026

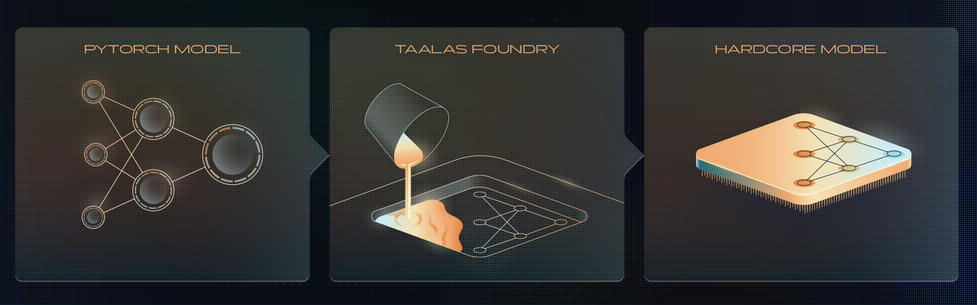

How Taalas “prints” LLM onto a chip?

Taalas has released an ASIC chip that runs Llama 3.1 8B with an inference rate of 17,000 tokens per second, equivalent to writing approximately 30 A4-sized pages in one second. The chip is claimed to be 10 times cheaper in ownership costs and 10 times more energy-efficient than GPU-based inference systems, while also being 10 times faster than current state-of-the-art inference solutions.

anuragk.com

4 min

2/21/2026

Flash-MoE: Running a 397B Parameter Model on a Laptop

Flash-Moe is a pure C/Metal inference engine that runs the Qwen3.5-397B-A17B model, a 397 billion parameter Mixture-of-Experts model, on a MacBook Pro with 48GB RAM at over 4.4 tokens per second. The 209GB model streams from SSD using a custom Metal compute pipeline without relying on Python or other frameworks.

github.com

6 min

3/22/2026

How Taalas “prints” LLM onto a chip?

Taalas has released an ASIC chip that runs Llama 3.1 8B with an inference rate of 17,000 tokens per second, equivalent to writing approximately 30 A4-sized pages in one second. The chip is claimed to be 10 times cheaper in ownership costs and 10 times more energy-efficient than GPU-based inference systems, while also being 10 times faster than current state-of-the-art inference solutions.

anuragk.com

4 min

2/21/2026

Flash-MoE: Running a 397B Parameter Model on a Laptop

Flash-Moe is a pure C/Metal inference engine that runs the Qwen3.5-397B-A17B model, a 397 billion parameter Mixture-of-Experts model, on a MacBook Pro with 48GB RAM at over 4.4 tokens per second. The 209GB model streams from SSD using a custom Metal compute pipeline without relying on Python or other frameworks.

github.com

6 min

3/22/2026

How Taalas “prints” LLM onto a chip?

Taalas has released an ASIC chip that runs Llama 3.1 8B with an inference rate of 17,000 tokens per second, equivalent to writing approximately 30 A4-sized pages in one second. The chip is claimed to be 10 times cheaper in ownership costs and 10 times more energy-efficient than GPU-based inference systems, while also being 10 times faster than current state-of-the-art inference solutions.

anuragk.com

4 min

2/21/2026

No more articles to load