Flash-MoE: Running a 397B Parameter Model on a Laptop

Flash-Moe is a pure C/Metal inference engine that runs the Qwen3.5-397B-A17B model, a 397 billion parameter Mixture-of-Experts model, on a MacBook Pro with 48GB RAM at over 4.4 tokens per second. The 209GB model streams from SSD using a custom Metal compute pipeline without relying on Python or other frameworks.

github.com

6 min

3/22/2026

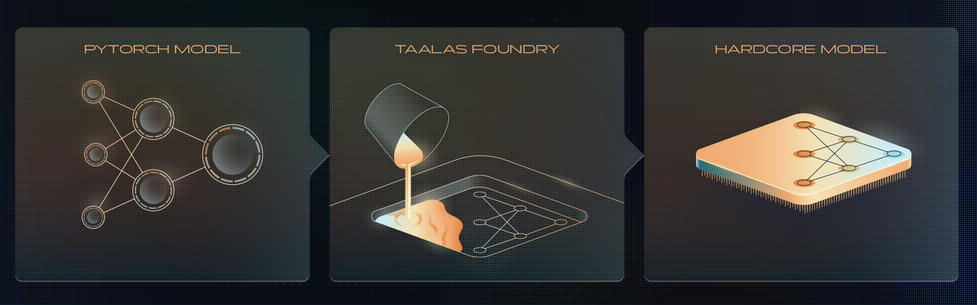

How Taalas “prints” LLM onto a chip?

Taalas has released an ASIC chip that runs Llama 3.1 8B with an inference rate of 17,000 tokens per second, equivalent to writing approximately 30 A4-sized pages in one second. The chip is claimed to be 10 times cheaper in ownership costs and 10 times more energy-efficient than GPU-based inference systems, while also being 10 times faster than current state-of-the-art inference solutions.

anuragk.com

4 min

2/21/2026

Nano-vLLM: How a vLLM-style inference engine works

Large language models (LLMs) rely on inference engines to process prompts and manage requests efficiently in production environments. Understanding the architecture and scheduling of these engines, such as Nano-vLLM, is essential for optimizing LLM deployment.

neutree.ai

9 min

2/2/2026

David Patterson: Challenges and Research Directions for LLM Inference Hardware

Large Language Model (LLM) inference faces significant challenges primarily related to memory and interconnect issues rather than compute power. The autoregressive Decode phase of Transformer models distinguishes LLM inference from training, complicating the process.

arxiv.org

2 min

1/25/2026

Flash-MoE: Running a 397B Parameter Model on a Laptop

Flash-Moe is a pure C/Metal inference engine that runs the Qwen3.5-397B-A17B model, a 397 billion parameter Mixture-of-Experts model, on a MacBook Pro with 48GB RAM at over 4.4 tokens per second. The 209GB model streams from SSD using a custom Metal compute pipeline without relying on Python or other frameworks.

github.com

6 min

3/22/2026

Nano-vLLM: How a vLLM-style inference engine works

Large language models (LLMs) rely on inference engines to process prompts and manage requests efficiently in production environments. Understanding the architecture and scheduling of these engines, such as Nano-vLLM, is essential for optimizing LLM deployment.

neutree.ai

9 min

2/2/2026

How Taalas “prints” LLM onto a chip?

Taalas has released an ASIC chip that runs Llama 3.1 8B with an inference rate of 17,000 tokens per second, equivalent to writing approximately 30 A4-sized pages in one second. The chip is claimed to be 10 times cheaper in ownership costs and 10 times more energy-efficient than GPU-based inference systems, while also being 10 times faster than current state-of-the-art inference solutions.

anuragk.com

4 min

2/21/2026

David Patterson: Challenges and Research Directions for LLM Inference Hardware

Large Language Model (LLM) inference faces significant challenges primarily related to memory and interconnect issues rather than compute power. The autoregressive Decode phase of Transformer models distinguishes LLM inference from training, complicating the process.

arxiv.org

2 min

1/25/2026

Flash-MoE: Running a 397B Parameter Model on a Laptop

Flash-Moe is a pure C/Metal inference engine that runs the Qwen3.5-397B-A17B model, a 397 billion parameter Mixture-of-Experts model, on a MacBook Pro with 48GB RAM at over 4.4 tokens per second. The 209GB model streams from SSD using a custom Metal compute pipeline without relying on Python or other frameworks.

github.com

6 min

3/22/2026

David Patterson: Challenges and Research Directions for LLM Inference Hardware

Large Language Model (LLM) inference faces significant challenges primarily related to memory and interconnect issues rather than compute power. The autoregressive Decode phase of Transformer models distinguishes LLM inference from training, complicating the process.

arxiv.org

2 min

1/25/2026

How Taalas “prints” LLM onto a chip?

Taalas has released an ASIC chip that runs Llama 3.1 8B with an inference rate of 17,000 tokens per second, equivalent to writing approximately 30 A4-sized pages in one second. The chip is claimed to be 10 times cheaper in ownership costs and 10 times more energy-efficient than GPU-based inference systems, while also being 10 times faster than current state-of-the-art inference solutions.

anuragk.com

4 min

2/21/2026

Nano-vLLM: How a vLLM-style inference engine works

Large language models (LLMs) rely on inference engines to process prompts and manage requests efficiently in production environments. Understanding the architecture and scheduling of these engines, such as Nano-vLLM, is essential for optimizing LLM deployment.

neutree.ai

9 min

2/2/2026

No more articles to load