Anthropic Subprocessor Changes

Anthropic's Trust Center focuses on AI safety and research, emphasizing transparency and secure practices in AI development. The initiative aims to support the safe transition to transformative AI technologies.

trust.anthropic.com

1 min

2d ago

Attention Residuals

Attention Residuals (AttnRes) is a drop-in replacement for standard residual connections in Transformers that lets each layer selectively aggregate earlier representations. It comes in two variants: Full AttnRes, where each layer attends over all previous layer outputs, and Block AttnRes, which groups layers into blocks to reduce memory usage from O(Ld) to O(Nd), where L is the number of layers, N the number of blocks, and d the hidden dimension.

github.com

3 min

3/21/2026

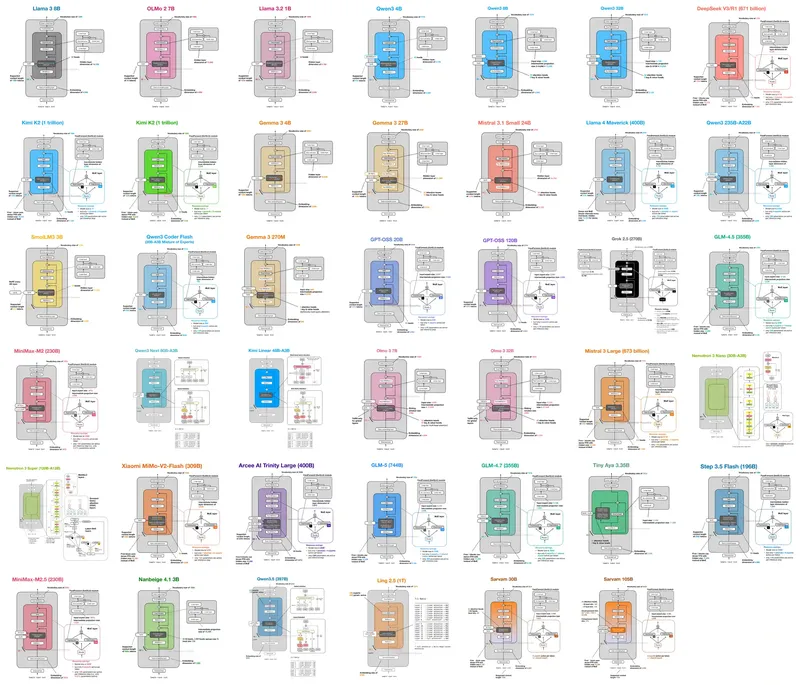

LLM Architecture Gallery

The LLM Architecture Gallery compiles architecture figures and fact sheets from significant LLM comparisons. Users can enlarge figures and navigate to corresponding sections using model titles.

sebastianraschka.com

8 min

3/15/2026
