Attention Residuals

Attention Residuals (AttnRes) is a drop-in replacement for standard residual connections in Transformers that lets each layer selectively aggregate earlier representations. It comes in two variants: Full AttnRes, where each layer attends over all previous outputs, and Block AttnRes, which groups layers into blocks to reduce memory usage from O(Ld) to O(Nd), where L is the number of layers, N the number of blocks, and d the hidden dimension.

github.com

3 min

3/21/2026
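The summary does not say how AttnRes scores earlier representations, so the Python sketch below is illustrative only. It assumes a per-layer query vector that scores each earlier representation by dot product, with a softmax over depth producing the mixing weights. For the block variant it assumes that outputs inside a block are summed into a single block representation and that the embedding counts as its own entry. All function names (`attend_over_depth`, `full_attnres`, `block_attnres`) are hypothetical and do not come from the repository.

```python
import math
from typing import Callable, List, Optional

Vector = List[float]
Layer = Callable[[Vector], Vector]


def softmax(scores: List[float]) -> List[float]:
    # Subtract the max for numerical stability before exponentiating.
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attend_over_depth(query: Vector, history: List[Vector]) -> Vector:
    # Assumed scoring: dot product between a per-layer query and each earlier vector.
    scores = [sum(q * h for q, h in zip(query, vec)) for vec in history]
    weights = softmax(scores)
    dim = len(history[0])
    # A softmax-weighted sum stands in for the plain residual sum.
    return [sum(w * vec[i] for w, vec in zip(weights, history)) for i in range(dim)]


def full_attnres(embedding: Vector, layers: List[Layer], queries: List[Vector]) -> List[Vector]:
    # Full variant: every earlier output is kept, so storage grows as O(L * d).
    history = [embedding]
    for layer, query in zip(layers, queries):
        x = attend_over_depth(query, history)
        history.append(layer(x))
    return history


def block_attnres(
    embedding: Vector, layers: List[Layer], queries: List[Vector], block_size: int
) -> List[Vector]:
    # Block variant (assumed): outputs within a block are summed into one vector,
    # so only O(N * d) block representations are stored.
    blocks: List[Vector] = [embedding]
    partial: Optional[Vector] = None  # running sum for the block in progress
    for i, (layer, query) in enumerate(zip(layers, queries)):
        candidates = blocks if partial is None else blocks + [partial]
        x = attend_over_depth(query, candidates)
        out = layer(x)
        partial = out if partial is None else [a + b for a, b in zip(partial, out)]
        if (i + 1) % block_size == 0:
            # Close the current block and store its summed representation.
            blocks.append(partial)
            partial = None
    if partial is not None:
        blocks.append(partial)
    return blocks
```

With six layers and blocks of two, the full variant stores seven vectors (the embedding plus six layer outputs) while the block variant stores four (the embedding plus three block sums). That is the O(Ld) versus O(Nd) difference in miniature.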

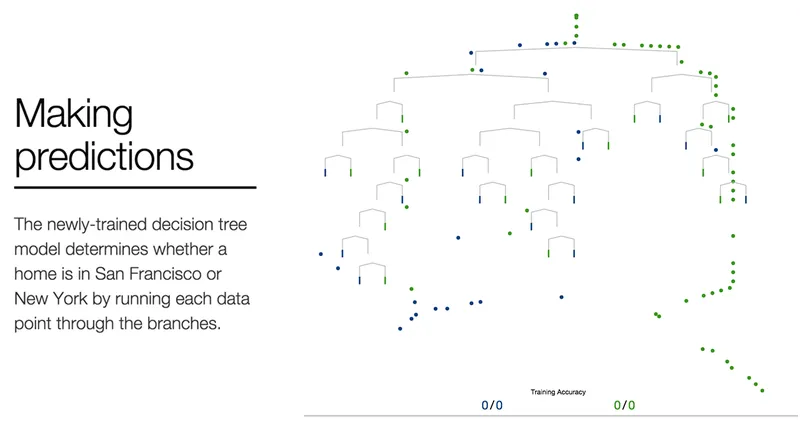

A Visual Introduction to Machine Learning (2015)

Machine learning uses statistical techniques to identify patterns in data automatically and make accurate predictions. Using a dataset of homes, the article builds a model that distinguishes homes in New York from those in San Francisco.

r2d3.us

7 min

3/15/2026

This musician built an AI clone of her voice so anyone can sing as her

Holly Herndon has developed an AI voice clone that lets anyone create music with her custom models. Her work with machine learning began in 2015 and has progressed from early "scratchy" outputs to sophisticated tools for musical expression.

scientificamerican.com

4 min

3/3/2026

10-202: Introduction to Modern AI (CMU)

The course "10-202: Introduction to Modern AI" covers how modern AI systems work, focusing on machine learning methods and large language models (LLMs) such as ChatGPT, Gemini, and Claude. The curriculum centers on AI as it is understood today, chiefly the chatbot technologies people use every day.

modernaicourse.org

5 min

3/1/2026

Building a Minimal Transformer for 10-digit Addition

This post builds a minimal transformer model that performs 10-digit addition and shows that the model can learn to carry out the arithmetic.

alexlitzenberger.com

1 min

2/28/2026

Music Discovery

AI-powered record discovery. Describe a vibe, name a record you love, or tell us how you're feeling — the clerk will dig through the crates for you.

secondtrack.co

1 min

2/22/2026

Bridging Elixir and Python with Oban

Oban bridges Elixir and Python, letting Elixir applications use Python capabilities such as machine learning models and multimedia processing tools. The integration supports collaboration across teams and eases gradual migration from one language to the other.

oban.pro

5 min

2/19/2026
