CERN uses tiny AI models burned into silicon for real-time LHC data filtering

theopenreader.org

March 28, 2026

6 min read

Summary

CERN is using tiny AI models burned into silicon chips to filter, in real time, the data generated by the Large Hadron Collider (LHC). The LHC produces approximately 40,000 exabytes of raw data annually.

Key Takeaways

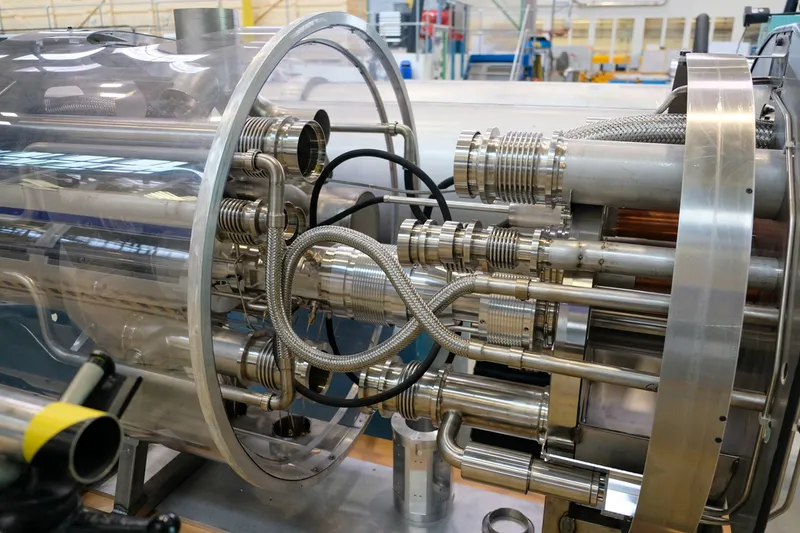

- CERN uses custom AI models burned into silicon chips for real-time filtering of data generated by the Large Hadron Collider (LHC), which produces approximately 40,000 exabytes of data per year.

- The first filtering stage, known as the Level-1 Trigger, uses around 1,000 field-programmable gate arrays (FPGAs) to evaluate incoming data in less than 50 nanoseconds and retains only about 0.02% of collision events for further analysis.

- CERN's AI models are optimized for ultra-low-latency inference and are converted into hardware designs with the open-source tool hls4ml, which allows them to be deployed on FPGAs and application-specific integrated circuits (ASICs).

- The LHC generates data at peak rates of hundreds of terabytes per second, so the decision to keep or discard each event must be made immediately, at the detector level, to manage the overwhelming data stream.
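Tools like hls4ml typically implement a trained network with fixed-point arithmetic, so that each multiply-accumulate maps onto cheap FPGA or ASIC logic. The Python sketch below imitates that idea in software under loudly hypothetical assumptions: the layer sizes, weights, 8 fractional bits, and keep threshold are invented for illustration, and real Level-1 Trigger firmware is synthesized hardware, not Python. It scores one event using only integer operations and returns a keep/discard decision.

```python
"""Hypothetical fixed-point 'tiny network' trigger decision (illustration only).

Assumptions: 8 fractional bits, one hidden ReLU layer, hand-picked weights,
and a keep threshold of 1.0. None of these values come from CERN.
"""

FRAC_BITS = 8            # hypothetical precision: 8 fractional bits
SCALE = 1 << FRAC_BITS   # a real value of 1.0 is stored as the integer 256


def to_fixed(x: float) -> int:
    """Quantize a real number to a fixed-point integer (round to nearest)."""
    return int(round(x * SCALE))


def fixed_mul(a: int, b: int) -> int:
    """Multiply two fixed-point values; the arithmetic right shift rescales
    the product and truncates toward negative infinity."""
    return (a * b) >> FRAC_BITS


def dense(inputs, weights, biases, relu):
    """Fully connected layer computed with integer multiply-accumulates only."""
    outputs = []
    for row, bias in zip(weights, biases):
        acc = bias
        for x, w in zip(inputs, row):
            acc += fixed_mul(x, w)
        outputs.append(max(acc, 0) if relu else acc)
    return outputs


# Hypothetical weights for a 3-input, 2-hidden-unit, 1-output network,
# quantized once ahead of time (as a synthesis tool would bake them in).
W1 = [[to_fixed(v) for v in row] for row in [[0.5, 0.25, 0.0], [0.0, 0.5, 0.5]]]
B1 = [to_fixed(0.0), to_fixed(-0.25)]
W2 = [[to_fixed(1.0), to_fixed(1.0)]]
B2 = [to_fixed(0.0)]
KEEP_THRESHOLD = to_fixed(1.0)  # hypothetical cut on the output score


def trigger_score(features):
    """Return the network's fixed-point output score for one event."""
    x = [to_fixed(f) for f in features]
    hidden = dense(x, W1, B1, relu=True)
    return dense(hidden, W2, B2, relu=False)[0]


def keep_event(features) -> bool:
    """Keep the event only if its score clears the threshold."""
    return trigger_score(features) >= KEEP_THRESHOLD


if __name__ == "__main__":
    print(trigger_score([2.0, 1.0, 0.5]), keep_event([2.0, 1.0, 0.5]))      # 448 True
    print(trigger_score([0.25, 0.25, 0.0]), keep_event([0.25, 0.25, 0.0]))  # 48 False
```

Quantizing the weights once and the inputs on arrival turns every operation into an integer multiply and shift, which is what makes nanosecond-scale hardware inference plausible; the trade-off is a small loss of precision that a real design would have to validate against the original floating-point model.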

Community Sentiment

Mixed

Positives

- The use of custom neural networks with autoencoders for real-time data filtering at CERN showcases innovative applications of AI in high-energy physics.

- Integrating AI directly into silicon chips for data processing could lead to significant improvements in efficiency and speed for handling LHC data.
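The autoencoder approach mentioned above rests on a simple idea: a network trained to compress and reconstruct ordinary collisions reconstructs them well, so a large reconstruction error flags an unusual event that may be worth keeping. The sketch below is hypothetical and far smaller than anything deployed: a hand-set linear autoencoder (2 inputs, a 1-value bottleneck) stands in for a trained one, and the 0.5 threshold is invented.

```python
"""Hypothetical sketch: autoencoder reconstruction error as an anomaly score."""

import math

# Hypothetical 2-D -> 1-D -> 2-D linear autoencoder. The weights are hand-set
# (not trained) so that "typical" events lie along the direction (1, 1).
INV_SQRT2 = 1 / math.sqrt(2)
ENCODER = (INV_SQRT2, INV_SQRT2)  # projects an event onto the bottleneck
DECODER = (INV_SQRT2, INV_SQRT2)  # maps the 1-D code back to 2-D


def encode(event):
    """Compress a 2-D event into a single bottleneck value (the 'code')."""
    return sum(w * x for w, x in zip(ENCODER, event))


def decode(code):
    """Reconstruct a 2-D event from its bottleneck value."""
    return [w * code for w in DECODER]


def anomaly_score(event):
    """Mean squared reconstruction error: large when the event does not
    resemble the data the autoencoder was built to reproduce."""
    recon = decode(encode(event))
    return sum((x - r) ** 2 for x, r in zip(event, recon)) / len(event)


def is_anomalous(event, threshold=0.5):
    """Flag the event for keeping if its score exceeds a hypothetical cut."""
    return anomaly_score(event) > threshold


if __name__ == "__main__":
    print(is_anomalous([3.0, 3.0]))    # False: lies on the learned direction
    print(anomaly_score([2.0, -2.0]))  # 4.0
    print(is_anomalous([2.0, -2.0]))   # True
```

In this toy, any event along the (1, 1) direction reconstructs almost perfectly, while (2, -2) lies entirely outside what the bottleneck can represent. A real model would learn its weights from recorded collisions and use far more inputs.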

Concerns

- The article does not clearly identify the specific AI algorithms and techniques used, which limits its informative value.

- The article's terminology may mislead readers: the AI described is not a large language model but a small neural network running on an FPGA.


Relevance Score

63/100

