LLMs work best when the user defines their acceptance criteria first

blog.katanaquant.com

March 7, 2026

21 min read

Summary

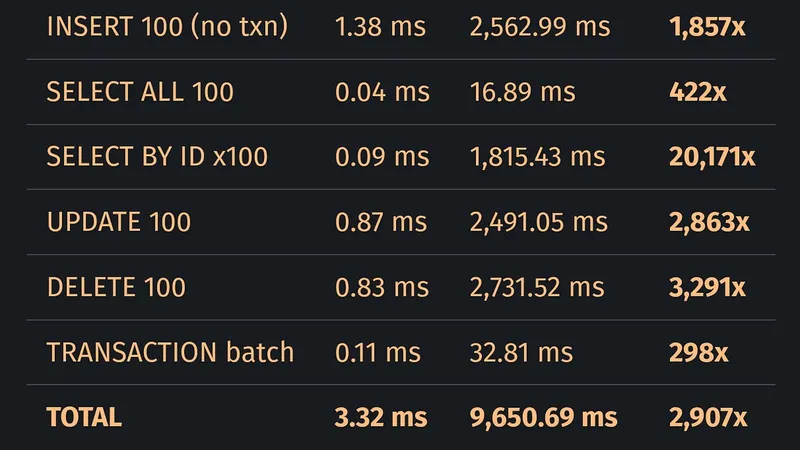

LLM-generated Rust code performs a primary key lookup on 100 rows in 1,815.43 ms, significantly slower than SQLite's 0.09 ms. Although the LLM-generated code compiles and passes tests, it is 20,171 times slower for this basic database operation.

Key Takeaways

- LLM-generated code can be significantly slower than correct implementations, with one example showing a Rust rewrite that is 20,171 times slower than SQLite for a primary key lookup on 100 rows.

- LLMs prioritize plausibility over correctness, leading to code that compiles and passes tests but performs poorly in practice.

- Benchmarking revealed consistent performance gaps in LLM-generated code, particularly in operations requiring database lookups, indicating systemic issues rather than isolated developer errors.

- Two specific bugs in the LLM-generated code were identified, contributing to its inefficiency, including a missing check for the primary key that affects query performance.

Community Sentiment

MixedPositives

- Using LLMs for writing queries and generating tests demonstrates their practical application in optimizing complex backend processes, highlighting their utility in real-world scenarios.

- The article prompts a valuable discussion about the importance of defining acceptance criteria, which can lead to more effective use of LLMs in coding tasks.

Concerns

- LLMs often produce plausible code that may not be efficient or correct, leading to a reliance on extensive workarounds and additional code to address limitations.

- The assertion that LLMs write plausible code overlooks the fact that they primarily generate code based on patterns from training data, which may not always meet user expectations.

Source

blog.katanaquant.com

Published

March 7, 2026

Reading Time

21 minutes

Relevance Score

66/100

🔥🔥🔥🔥🔥

Why It Matters

This page is optimized for focused reading: quick context up top, a clean summary block, and a direct path to the original source when you want the full story.