AI cybersecurity is not proof of work

antirez.com

April 16, 2026

Summary

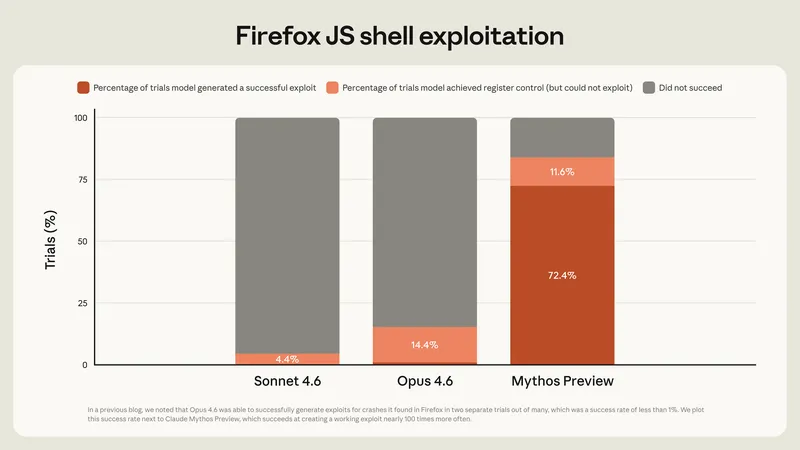

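Proof of work in blockchain systems relies on finding an input whose hash falls below a target value (for example, a hash with a required number of leading zero bits). This search becomes exponentially harder as the difficulty increases, but given enough computation it eventually succeeds. Different executions of large language models (LLMs) can take different branches, but for a given model and codebase the set of reachable branches eventually saturates, so running the model more times stops uncovering new bugs.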
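The proof of work side of the contrast can be sketched in a few lines. This is a simplified illustration, not any real blockchain's scheme: it assumes SHA-256 over the data concatenated with an 8-byte nonce and a difficulty expressed as leading zero bits (Bitcoin, for instance, uses double SHA-256 over a block header and a numeric target). The names `mine` and `leading_zero_bits` are hypothetical.

```python
import hashlib


def leading_zero_bits(digest: bytes) -> int:
    """Count the leading zero bits of a hash digest."""
    bits = 0
    for byte in digest:
        if byte == 0:
            bits += 8  # the whole byte is zero; keep scanning
            continue
        bits += 8 - byte.bit_length()  # zeros above the highest set bit
        break
    return bits


def mine(data: bytes, difficulty_bits: int) -> tuple[int, int]:
    """Brute-force a nonce so that SHA-256(data || nonce) has at least
    difficulty_bits leading zero bits. Returns (nonce, attempts)."""
    nonce = 0
    while True:
        digest = hashlib.sha256(data + nonce.to_bytes(8, "big")).digest()
        if leading_zero_bits(digest) >= difficulty_bits:
            return nonce, nonce + 1
        nonce += 1


if __name__ == "__main__":
    # Each extra bit of difficulty doubles the expected number of attempts
    # (about 2**difficulty_bits on average), but more attempts keep paying off.
    for bits in (4, 8, 12, 16):
        nonce, attempts = mine(b"example block", bits)
        print(f"difficulty={bits:2d} bits  attempts={attempts}")
```

Every attempt here is an independent fresh chance, which is why adding compute reliably finds a solution: the property the article argues LLM-based bug hunting does not share.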

Key Takeaways

- AI-driven cybersecurity will depend more on the intelligence of models than on sheer computational power, in contrast to proof of work systems, where more computation reliably produces results.

- Repeated executions of an LLM explore different branches, but the branches a given model can reach eventually saturate, which limits how many bugs additional runs can identify.

- Weaker models may flag a real issue by hallucinating it, without understanding the underlying problem, while stronger models, which hallucinate less, may paradoxically be less likely to report such bugs.

- The OpenBSD SACK bug illustrates that even extensive sampling of weaker models does not guarantee the detection of complex bugs.
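To make the saturation argument concrete, here is a toy simulation; it is not taken from the original post. It assumes each run of a model follows one branch drawn from a fixed, finite set of reachable branches with fixed probabilities. The branch names, the weights, the `subtle_bug` label, and the `sample_branches` helper are all hypothetical.

```python
import random


def sample_branches(reachable, weights, samples, seed=0):
    """Toy model: each run of a model follows one branch drawn from a fixed,
    finite set of branches it can reach. Returns the number of distinct
    branches seen after each run, plus the set of branches seen."""
    rng = random.Random(seed)  # seeded for reproducibility
    seen = set()
    curve = []
    for _ in range(samples):
        branch = rng.choices(reachable, weights=weights, k=1)[0]
        seen.add(branch)
        curve.append(len(seen))
    return curve, seen


# Hypothetical weaker model: only five branches are reachable, and the
# branch that would expose the subtle bug is not among them.
reachable = ["branch_a", "branch_b", "branch_c", "branch_d", "branch_e"]
weights = [0.5, 0.2, 0.15, 0.1, 0.05]

curve, seen = sample_branches(reachable, weights, samples=10_000)
# Distinct branches after 10, 100 and 10,000 runs: the curve flattens
# once every reachable branch has been visited (it can never exceed 5).
print(curve[9], curve[99], curve[-1])
# Extra runs never reach a branch outside the reachable set.
print("subtle_bug" in seen)
```

Unlike a hash search, where every new attempt is a fresh chance, extra samples here only revisit branches the model could already reach. Under this assumption, finding the bug requires a model whose reachable set includes it, not more runs.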

Community Sentiment

Positives

- AI models like Mythos can significantly reduce the cost and time associated with finding vulnerabilities, making cybersecurity more accessible to organizations without extensive resources.

- The ability to automate security checks with AI could lead to a more secure software landscape, as even basic models can uncover issues that skilled human experts might overlook.

- Combining advanced models with a large token budget can improve vulnerability detection, suggesting that investment in AI tools can yield substantial security benefits.

Concerns

- The debate over model effectiveness raises concerns about how we define 'better' in AI, especially when comparing models trained on different objectives.

- Some doubt that AI can become capable enough to make software essentially bug-free, reflecting skepticism about relying solely on AI for security.

- Restricting access to advanced models like Mythos reflects fears of misuse, highlighting ethical concerns around deploying powerful AI tools in cybersecurity.