CC-Canary: Detect early signs of regressions in Claude Code

github.com

April 24, 2026

Summary

Delta-hq's cc-canary provides drift detection for Claude Code through two installable Agent Skills that analyze JSONL session logs. The tool operates locally without requiring network access, accounts, or telemetry, generating shareable forensic reports based on existing data.

Key Takeaways

- The cc-canary tool detects model drift in Claude Code by analyzing JSONL session logs and generates shareable forensic reports.

- Reports include a verdict on model performance, cost analysis, reasoning loops, and cross-version comparisons.

- The tool operates without requiring network access, accounts, or telemetry, and runs on local data already stored on the user's disk.

- Users can install the tool via npm and execute commands to generate reports for specified time windows.
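To make the idea of log-based drift detection concrete, here is a minimal Python sketch of comparing a baseline window against a recent window over JSONL records. It is not cc-canary's implementation: the `timestamp` and `output_tokens` field names, the helper names, and the choice of a simple relative change in the mean are all assumptions made for illustration, and real Claude Code session logs may be structured differently.

```python
import json
from datetime import datetime, timezone
from statistics import mean


def parse_records(lines):
    """Parse JSONL text lines, skipping blank or malformed ones."""
    records = []
    for line in lines:
        line = line.strip()
        if not line:
            continue
        try:
            records.append(json.loads(line))
        except json.JSONDecodeError:
            continue  # tolerate partially written or corrupt lines
    return records


def window_mean(records, field, start, end):
    """Mean of `field` over records with start <= timestamp < end, else None.

    Assumes a hypothetical ISO 8601 `timestamp` field with a UTC offset.
    """
    values = []
    for record in records:
        if not isinstance(record, dict):
            continue
        stamp = record.get("timestamp")
        value = record.get(field)
        if stamp is None or not isinstance(value, (int, float)):
            continue
        if start <= datetime.fromisoformat(stamp) < end:
            values.append(value)
    return mean(values) if values else None


def relative_change(baseline, recent):
    """Fractional change from baseline to recent, or None if undefined."""
    if baseline is None or recent is None or baseline == 0:
        return None
    return (recent - baseline) / baseline


# Illustrative data only; these records are made up for the example.
sample = [
    '{"timestamp": "2026-04-01T10:00:00+00:00", "output_tokens": 100}',
    '{"timestamp": "2026-04-02T10:00:00+00:00", "output_tokens": 300}',
    '{"timestamp": "2026-04-10T10:00:00+00:00", "output_tokens": 300}',
    "not json",
]
records = parse_records(sample)
week1 = datetime(2026, 4, 1, tzinfo=timezone.utc)
week2 = datetime(2026, 4, 8, tzinfo=timezone.utc)
week3 = datetime(2026, 4, 15, tzinfo=timezone.utc)
baseline = window_mean(records, "output_tokens", week1, week2)
recent = window_mean(records, "output_tokens", week2, week3)
change = relative_change(baseline, recent)
```

A single mean over one field is a crude signal; it is used here only because it keeps the window comparison easy to follow.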

Community Sentiment

Positives

- The CC-Canary tool offers a promising approach to detecting early signs of regression in AI models, which could improve model reliability and user trust.

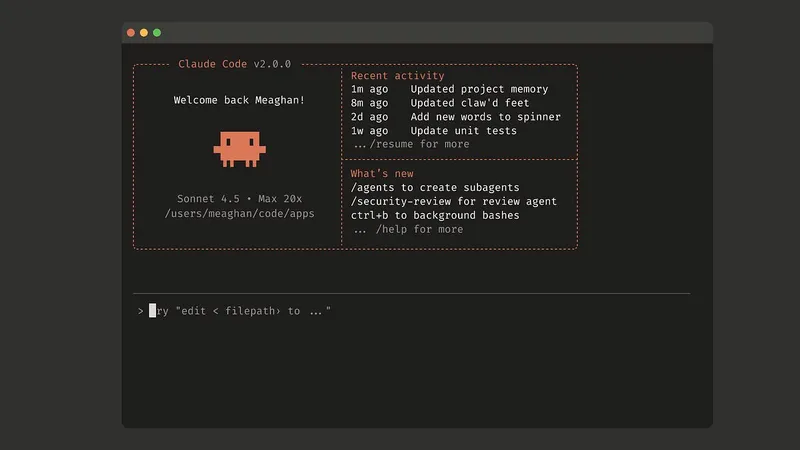

- Adding a persona block to Claude's instructions can make programming more engaging, showing the potential for creative interactions with AI.

- Tracking performance changes when tweaking prompts or adding skills is crucial for developers, and CC-Canary addresses this need effectively.

Concerns

- The concept of 'drift' is often misused in discussions about LLMs, leading to confusion about its implications for model performance.

- There is skepticism about the effectiveness of self-measurement in AI tools, as it raises concerns about accountability and accuracy.

- Many users feel frustrated with current AI tools, suggesting that they often work against developers rather than enhancing productivity.
