He asked AI to count carbs 27000 times. It couldn't give the same answer twice

diabettech.com

April 29, 2026

8 min read

🔥🔥🔥🔥🔥

59/100

Summary

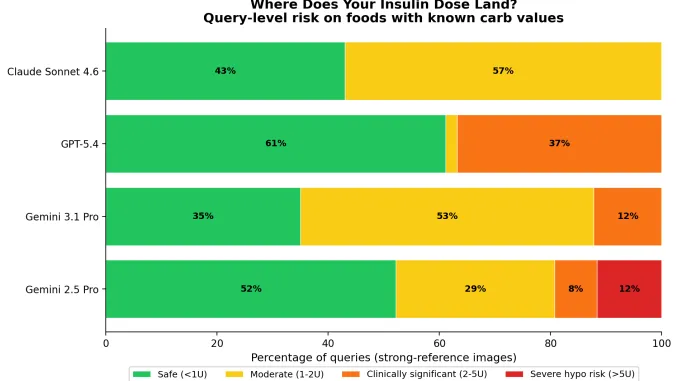

AI-powered carb counting tools can return different estimates for the same input. For people managing diabetes this variability is dangerous: insulin doses are typically calculated from carb counts, so an overestimate can lead to too much insulin and a hypoglycemic emergency.

Key Takeaways

- AI models provided inconsistent carbohydrate estimates for the same food images, with variations large enough to potentially cause hypoglycemic emergencies.

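- The median variation in carbohydrate estimates ranged from 2.4% for Claude Sonnet 4.6 to 11.0% for Gemini 2.5 Pro, so run-to-run consistency differed substantially between models. This figure measures how repeatable the answers were, not how close they were to the true carb content.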

- A single food photo could yield carbohydrate estimates with a range of up to 429 grams, highlighting the risk of relying on AI for precise dietary management.

- Food identification errors were present in 8 of the 13 test images, demonstrating that AI models do not always accurately recognize food items.
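To make the spread figures above concrete, here is a minimal sketch of how repeated estimates for one photo could be summarized. The sample numbers are invented for illustration, and reading "variation" as the coefficient of variation (standard deviation divided by the mean) is an assumption; the summary does not define the metric.

```python
import statistics

def spread_summary(estimates_g):
    """Summarize repeated carb estimates (in grams) for a single image."""
    mean = statistics.mean(estimates_g)
    stdev = statistics.stdev(estimates_g)  # sample standard deviation
    return {
        "min_g": min(estimates_g),
        "max_g": max(estimates_g),
        "range_g": max(estimates_g) - min(estimates_g),
        # Coefficient of variation: an assumed reading of "variation".
        "cv_percent": 100 * stdev / mean,
    }

# Hypothetical estimates for one photo, not data from the study.
sample = [42.0, 45.0, 40.0, 48.0, 45.0]
summary = spread_summary(sample)
print(summary)
```

Under this reading, a per-model figure would be the median of `cv_percent` across all test images, while the range shows the worst-case gap between two answers for the same photo.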

Community Sentiment

Positives

- The study effectively highlights the limitations of LLMs in providing reliable nutritional information, reinforcing the need for caution in using AI for health-related tasks.

- The study ran each model repeatedly at its lowest randomness setting to minimize variance. The outputs still varied, which shows that the unpredictability is inherent to LLM outputs rather than a side effect of the configuration.
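The repeated-run setup described above can be sketched as a small harness. `estimate_carbs` is a hypothetical stand-in for a real model call (no vendor API is assumed here); the stub simulates run-to-run noise so that the example is self-contained, and the 1 g tolerance is an arbitrary illustrative threshold.

```python
import random

def estimate_carbs(image_path, rng):
    """Hypothetical stand-in for a model call; returns grams of carbs.
    Simulates noise around 50 g instead of calling any real API."""
    return round(50 + rng.gauss(0, 3), 1)

def repeat_estimates(image_path, runs, seed=0):
    """Query the (stubbed) estimator `runs` times for the same image."""
    rng = random.Random(seed)
    return [estimate_carbs(image_path, rng) for _ in range(runs)]

def is_consistent(estimates, tolerance_g):
    """True if all estimates lie within `tolerance_g` grams of each other."""
    return max(estimates) - min(estimates) <= tolerance_g

results = repeat_estimates("meal.jpg", runs=20)
print(len(set(results)), "distinct answers from", len(results), "runs")
print("within 1 g:", is_consistent(results, tolerance_g=1.0))
```

Replacing the stub with a real model call would keep the rest of the harness unchanged; the point is only that the same input is submitted many times and the answers are compared with each other.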

Concerns

- The article demonstrates a serious lack of understanding regarding how LLMs work, leading to unrealistic expectations about their capabilities in carb-counting.

- Many users are unaware that LLMs are not designed to provide accurate nutritional assessments, which could lead to harmful reliance on these models for health decisions.

- The variance in responses from the LLMs, even with minimized randomness, underscores the unreliability of these models for critical tasks like dietary calculations.