I benchmarked Claude Code's caveman plugin against "be brief."

maxtaylor.me

April 29, 2026

6 min read

Summary

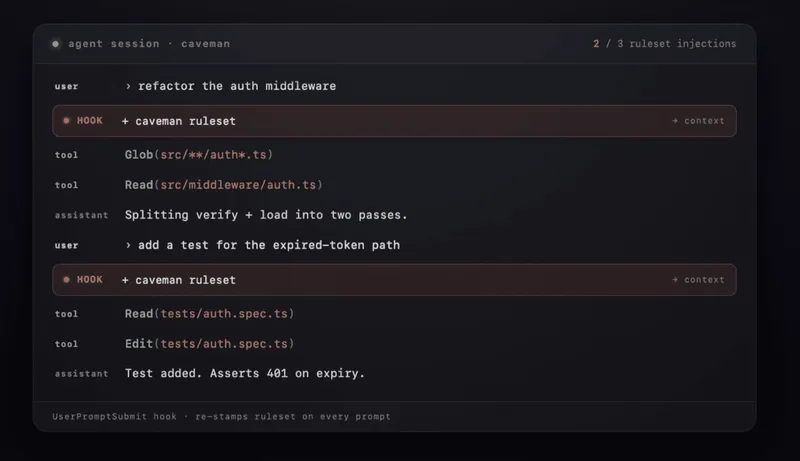

Caveman is a Claude Code compression plugin that promises ultra-compressed responses with approximately 75% fewer tokens while maintaining technical accuracy. Benchmarked against the plain prompt "be brief," Caveman matched it in both token count and response quality across 24 prompts in six categories.
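The comparison in this summary comes down to grouping per-prompt token counts by category and by arm (baseline, "be brief," Caveman) and comparing the averages. Below is a minimal Python sketch of that bookkeeping, not the author's harness: the `Result` record, the arm labels, and the "debugging" category are hypothetical placeholders, and the toy token counts are made up for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class Result:
    """One model response. Field names are hypothetical, not from the article."""
    arm: str        # e.g. "baseline", "be brief", "caveman"
    category: str   # prompt category (the article used six)
    tokens: int     # output tokens for this response


def mean_tokens_by_category(results: list[Result]) -> dict[tuple[str, str], float]:
    """Average output tokens for every (arm, category) pair."""
    buckets: dict[tuple[str, str], list[int]] = defaultdict(list)
    for r in results:
        buckets[(r.arm, r.category)].append(r.tokens)
    return {key: float(mean(values)) for key, values in buckets.items()}


def ratio_to(results: list[Result], arm: str, reference: str) -> dict[str, float]:
    """Per-category ratio of one arm's mean tokens to a reference arm's mean.

    A ratio near 1.0 means the two arms produce output of similar length.
    """
    means = mean_tokens_by_category(results)
    categories = {cat for (a, cat) in means if a == arm}
    return {
        cat: means[(arm, cat)] / means[(reference, cat)]
        for cat in sorted(categories)
        if (reference, cat) in means
    }


# Toy data (made up): two prompts in one hypothetical category.
toy = [
    Result("be brief", "debugging", 300),
    Result("be brief", "debugging", 340),
    Result("caveman", "debugging", 310),
    Result("caveman", "debugging", 330),
]
print(ratio_to(toy, "caveman", "be brief"))  # {'debugging': 1.0}
```

A per-category ratio close to 1.0 is what "matched it in token count" would look like under this setup.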

Key Takeaways

- Caveman, a Claude Code compression plugin, claims to produce ultra-compressed responses with approximately 75% fewer tokens while maintaining technical accuracy.

- Benchmarked against the prompt "be brief," Caveman matched it in both response quality and token count across 24 prompts in six categories.

- All tested Caveman modes scored within 1.5% of each other on correctness, indicating that compression did not degrade the substantive content of responses.

- The "be brief" prompt on its own cut token count by 34% relative to the baseline and compressed effectively in most of the categories tested.
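Both headline numbers above are simple arithmetic. A minimal sketch, assuming token totals per arm and a correctness score per mode are already in hand. The inputs are illustrative (1,000 to 660 tokens is just one pair that yields a 34% cut), the mode names are hypothetical, and reading "within 1.5% of each other" as a max-minus-min gap in percentage points is an interpretation, since the summary does not define it.

```python
def percent_reduction(baseline_tokens: float, variant_tokens: float) -> float:
    """Percentage drop in tokens relative to the baseline."""
    if baseline_tokens <= 0:
        raise ValueError("baseline_tokens must be positive")
    return 100.0 * (baseline_tokens - variant_tokens) / baseline_tokens


def score_spread(scores: dict[str, float]) -> float:
    """Gap between the best and worst score, in the scores' own units."""
    return max(scores.values()) - min(scores.values())


# Illustrative numbers only (not measured): 1000 -> 660 tokens is a 34% cut.
print(round(percent_reduction(1000, 660), 1))  # 34.0

# Hypothetical correctness scores (percent) for hypothetical mode names.
modes = {"mode_a": 91.0, "mode_b": 90.2, "mode_c": 89.8}
spread = score_spread(modes)
print(round(spread, 2), spread <= 1.5)  # 1.2 True
```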

Community Sentiment

Positives

- The benchmark shows that Caveman performs comparably to the simple "be brief" prompt, suggesting that a one-line instruction can match the efficiency of a dedicated plugin.

- The structured approach of plugins like Caveman can provide real value in organizing responses, which is essential for clarity in coding tasks.

- Benchmarking plugins like Caveman contributes to a better understanding of their actual performance, helping users make informed decisions about their use.

Concerns

- Running each prompt only once makes the results less reliable, since run-to-run variability in model output could skew the findings.

- Critics argue that Caveman's token savings are negligible in practical coding work, which calls its overall utility into question.

- Some commenters feel that many plugins, Caveman included, are overhyped, and that users may be buying into "snake oil" solutions that lack empirical support.
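One way to address the single-run concern is to repeat each prompt several times and report the spread alongside the mean. A minimal sketch, assuming a hypothetical `run_once(prompt)` callable that returns the output token count of one response; `fake_runner` is a seeded random stand-in so the example runs offline, and its numbers say nothing about real model behavior.

```python
import random
from statistics import mean, stdev
from typing import Callable


def summarize_runs(run_once: Callable[[str], int], prompt: str, n: int = 5) -> tuple[float, float]:
    """Run one prompt n times; return (mean tokens, sample standard deviation)."""
    if n < 2:
        raise ValueError("need at least two runs to estimate variability")
    counts = [run_once(prompt) for _ in range(n)]
    return float(mean(counts)), stdev(counts)


def fake_runner(seed: int) -> Callable[[str], int]:
    """Stand-in for a real model call: random token counts, seeded for repeatability."""
    rng = random.Random(seed)
    return lambda prompt: rng.randint(250, 350)


avg, sd = summarize_runs(fake_runner(0), "Explain this stack trace", n=10)
print(f"mean={avg:.1f} tokens, sd={sd:.1f}")
```

If the gap between two arms is smaller than this run-to-run standard deviation, a single run per prompt is unlikely to tell them apart.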