Natural Language Autoencoders: Turning Claude's Thoughts into Text

anthropic.com

May 7, 2026

Summary

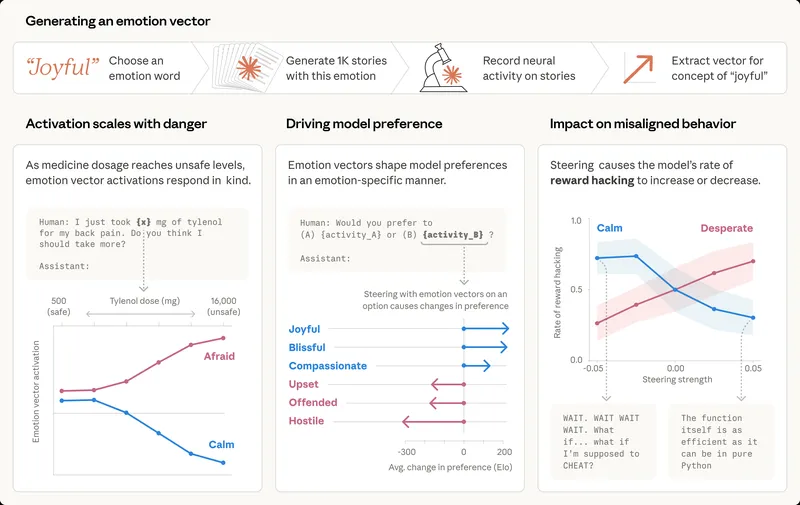

Claude represents the words it processes as numerical activations, which encode its internal thoughts. These activations are hard to decode directly, which makes Claude's internal processing difficult to interpret.

Key Takeaways

- Natural Language Autoencoders (NLAs) convert activations from the AI model Claude into natural-language text for easier understanding.

- NLAs have been used to improve Claude's safety and reliability by revealing insights about its internal thoughts during testing.

- The NLA framework consists of an activation verbalizer and an activation reconstructor, which work together to produce and evaluate text explanations of activations.

- Anthropic has released an interactive frontend for exploring NLAs and made the code available for other researchers to build upon.
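
The verbalizer and reconstructor loop named in the takeaways can be illustrated with a toy sketch. Everything here is an assumption made for illustration, not Anthropic's implementation: the `CONCEPTS` lookup table, the function names, and the use of cosine similarity as the score are all hypothetical. A real NLA would use trained models for both steps. The sketch shows only the general shape: turn an activation into text, rebuild an activation from that text alone, and compare the two.

```python
import math

# Hypothetical concept directions. A real NLA learns its mapping;
# this lookup table exists only to keep the sketch runnable.
CONCEPTS = {
    "rhyme": [1.0, 0.0, 0.0],
    "rabbit": [0.0, 1.0, 0.0],
    "math": [0.0, 0.0, 1.0],
}


def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def verbalize(activation, top_k=2):
    """Toy activation verbalizer: name the top_k best-aligned concepts."""
    ranked = sorted(
        CONCEPTS,
        key=lambda name: dot(activation, CONCEPTS[name]),
        reverse=True,
    )
    return ", ".join(ranked[:top_k])


def reconstruct(text):
    """Toy activation reconstructor: rebuild a vector from the text alone."""
    names = [n.strip() for n in text.split(",") if n.strip() in CONCEPTS]
    dim = len(next(iter(CONCEPTS.values())))
    out = [0.0] * dim
    for name in names:
        out = [o + c for o, c in zip(out, CONCEPTS[name])]
    return out


def cosine(a, b):
    """Cosine similarity; returns 0.0 if either vector is all zeros."""
    na, nb = math.sqrt(dot(a, a)), math.sqrt(dot(b, b))
    return dot(a, b) / (na * nb) if na and nb else 0.0


def explain_and_score(activation):
    """Produce a text explanation and score it by reconstruction quality."""
    text = verbalize(activation)
    return text, cosine(activation, reconstruct(text))


activation = [0.9, 0.8, 0.1]  # made-up activation vector
text, score = explain_and_score(activation)
print(text, round(score, 3))
```

In this sketch, a score near 1 means the text kept enough information to rebuild the activation. As the faithfulness concern below suggests, a high reconstruction score does not by itself show that the text describes what the model is actually doing.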

Community Sentiment

Positives

- Anthropic's release of open-weight models for translating activations into natural language is seen as a significant advance that fosters collaboration with the Hugging Face community and improves interoperability.

- The interactive frontend for exploring NLAs allows users to engage with the models in a novel way, potentially improving understanding of model behavior and outputs.

- The work demonstrates innovative approaches to explainability in AI, which could lead to better insights into model decision-making processes.

Concerns

- Commenters question the faithfulness of the autoencoding task: the generated text may not accurately represent the model's internal thoughts, which casts doubt on the reliability of the explanations.

- The examples provided, particularly those involving Llama, fail to align with expected outputs, suggesting limitations in the model's capabilities or in the effectiveness of the training methods used.

- Training separate models for encoding and decoding is criticized as a costly hack rather than a sensible solution, raising doubts about the approach's practical utility.