OpenAI agrees with Dept. of War to deploy models in their classified network

twitter.com

February 28, 2026

79/100

Community Sentiment

Concerns

- The agreement with the Department of War raises ethical concerns and suggests that OpenAI may be compromising its commitment to safety and responsible AI use.

- It remains unclear how OpenAI's contract differs from Anthropic's, which points to possible inconsistencies in ethical standards that could undermine trust in AI governance.

- Some users believe that OpenAI's actions contradict its stated values and are calling for more ethical accountability in AI development.

Related Articles

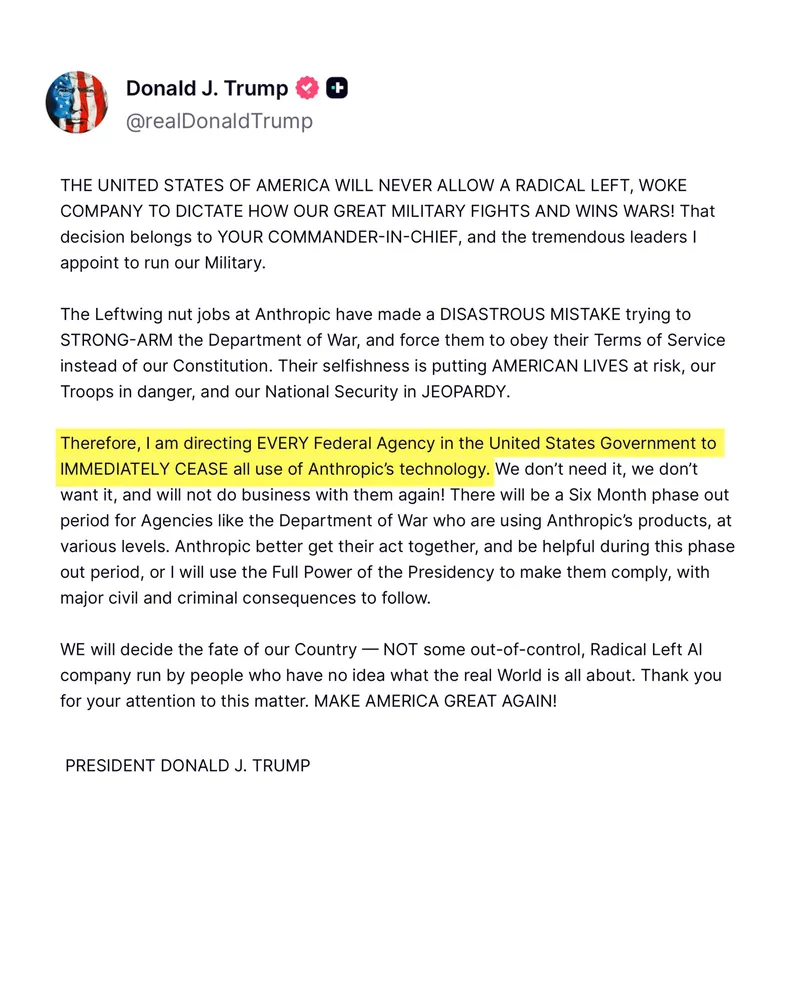

I am directing the Department of War to designate Anthropic a supply-chain risk

Feb 27, 2026

Anthropic announces proof of distillation at scale by MiniMax, DeepSeek, Moonshot

Feb 23, 2026

Trump Bans Anthropic from All US Federal Agencies

Feb 27, 2026

Gemini 3 Deep Think

Feb 12, 2026

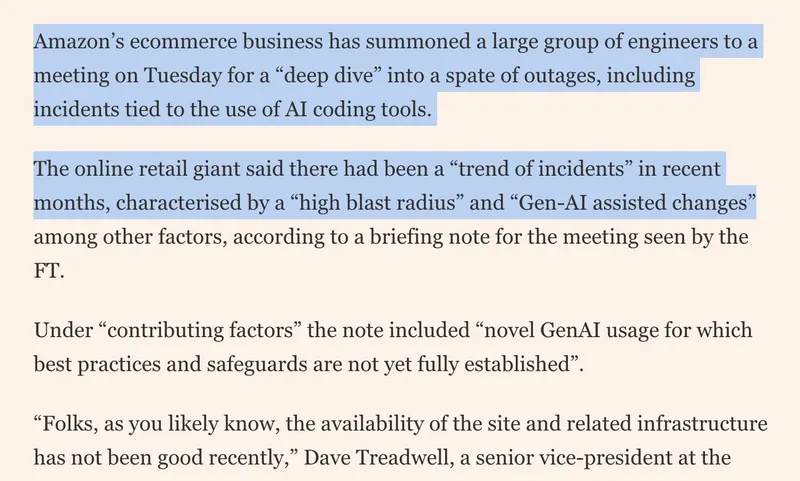

Amazon is holding a mandatory meeting about AI breaking its systems

Mar 10, 2026