Our eighth generation TPUs: two chips for the agentic era

blog.google

April 22, 2026

7 min read

Summary

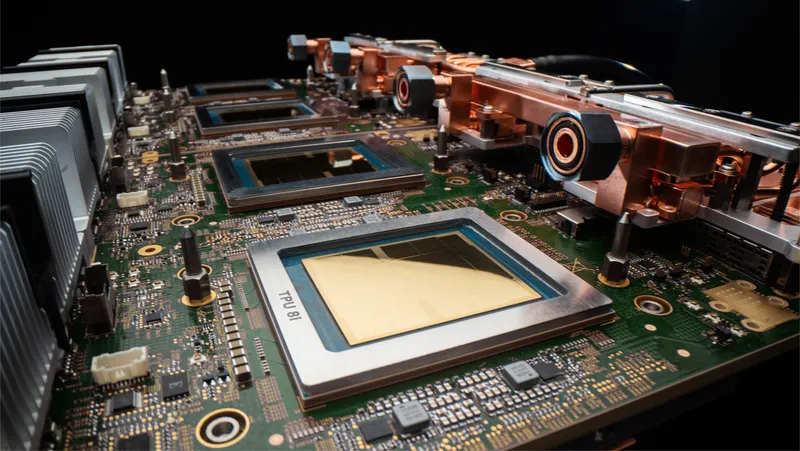

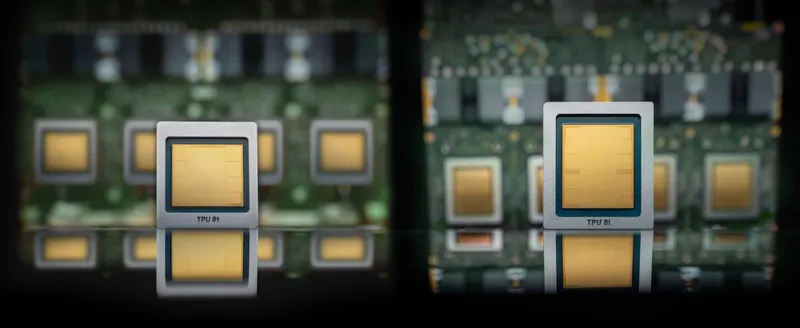

Google Cloud has introduced the eighth generation of Tensor Processing Units (TPUs), featuring two distinct architectures: TPU 8t for training and TPU 8i for inference. The chips are designed to power custom-built supercomputers for advanced model training, agent development, and large-scale inference workloads.

Key Takeaways

- Google introduced its eighth-generation Tensor Processing Units (TPUs), featuring two architectures: TPU 8t for training and TPU 8i for inference, designed to handle demanding AI workloads.

- TPU 8t delivers nearly 3x the compute performance per pod compared to the previous generation, supporting massive scale with up to 9,600 chips and 121 ExaFlops of compute.

- TPU 8i is optimized for latency-sensitive inference workloads, enhancing efficiency in interactions between AI agents at scale.

- Both TPU 8t and TPU 8i are specialized to unlock significant efficiencies, while each remains capable of running a variety of workloads.
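
As a rough sanity check on the TPU 8t scale figures, the average compute per chip can be worked out from the two numbers quoted in the takeaways. This is only a back-of-the-envelope sketch: it assumes the 121 ExaFlops figure is the total for a full 9,600-chip pod, and the summary does not state the numeric precision behind that figure. The function name `per_chip_petaflops` is made up for illustration.

```python
# Figures quoted in the takeaways (assumed to describe one full TPU 8t pod).
POD_CHIPS = 9_600
POD_EXAFLOPS = 121


def per_chip_petaflops(pod_exaflops: float, chips: int) -> float:
    """Average compute per chip in petaFLOPS (1 exaFLOPS = 1,000 petaFLOPS)."""
    return pod_exaflops * 1_000 / chips


if __name__ == "__main__":
    # Divide the pod total evenly across all chips.
    print(f"{per_chip_petaflops(POD_EXAFLOPS, POD_CHIPS):.1f} PFLOPS per chip")
```

Under those assumptions the result is roughly 12.6 petaFLOPS per chip; it is an average implied by the headline numbers, not a published per-chip specification.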

Community Sentiment

Positives

- The TPU 8t and TPU 8i deliver up to twice the performance per watt of the previous generation, a significant gain in energy efficiency for AI workloads.

- Google's vertically integrated approach to AI hardware seems to be paying off, allowing it to capture consumer market share without major infrastructure issues.

- Gemini models are proving competitive with other open models, especially in smaller sizes, which could enhance AI applications on mobile devices.

- Gemini's ability to use drastically fewer tokens than competitors suggests a more efficient processing model, potentially leading to cost savings in AI operations.

Concerns

- Despite their efficiency, Gemini models struggle with reasoning and execution, often producing broken tool calls and failing at complex 'agentic' tasks.

- Some users feel that Google is lagging behind competitors such as Claude and ChatGPT, raising concerns about the relevance of its models in a rapidly evolving AI landscape.

- The Gemini CLI experience is reportedly frustrating, with issues like getting stuck in loops, which undermines the potential of Google's advanced hardware.
