Scientists invented a fake disease. AI told people it was real

nature.com

April 10, 2026

9 min read

Summary

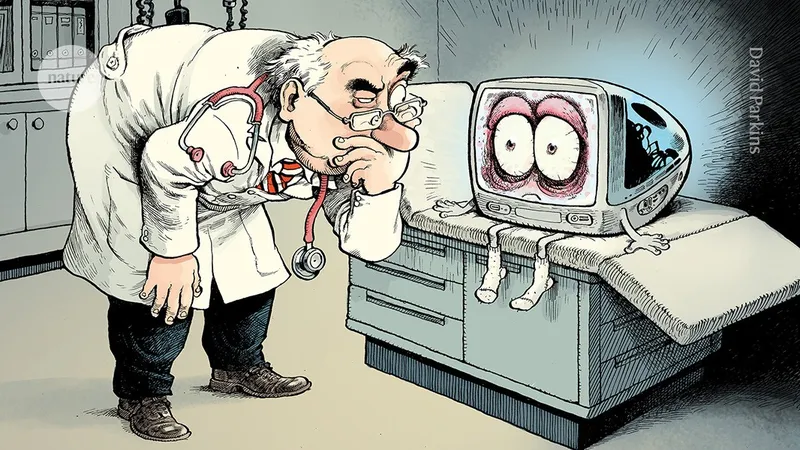

Scientists created a fictitious disease and published fake studies about it, and major AI systems then told people the condition was real. The experiment showed how readily AI systems can spread health misinformation and shape public perception of health issues.

Key Takeaways

- Researchers created a fictitious disease called bixonimania and published fake studies about it to test whether AI systems would recognize the misinformation.

- Major AI systems began to treat the invented condition as real, demonstrating a vulnerability to misinformation.

- The fabricated studies were cited in peer-reviewed literature, suggesting that some researchers rely on AI-generated references without verifying the sources.

- The lead author of the fake studies was a fictional character created using AI, highlighting the potential for AI to generate misleading academic content.

Community Sentiment

Concerns

- The lack of counter-narratives for LLMs to learn from leaves the models susceptible to manipulation and raises concerns about the integrity of the information they provide.

- There is a significant risk that individuals or groups could intentionally 'poison' LLMs, undermining their reliability and potentially causing financial harm to AI companies.

- The ease with which fake information can influence AI product recommendations highlights vulnerabilities in the systems that rely on LLMs for decision-making.

- Source trustworthiness is critical for both humans and AI, yet the current publishing system may not adequately ensure it, which can allow misinformation to spread.