Simulacrum of Knowledge Work

blog.happyfellow.dev

April 25, 2026

Summary

Quality control in knowledge work is critical: errors such as outdated information, spelling mistakes, and mislabeled graphs can undermine a report's credibility. Discarding flawed reports prevents decisions from being made on inaccurate data.

Key Takeaways

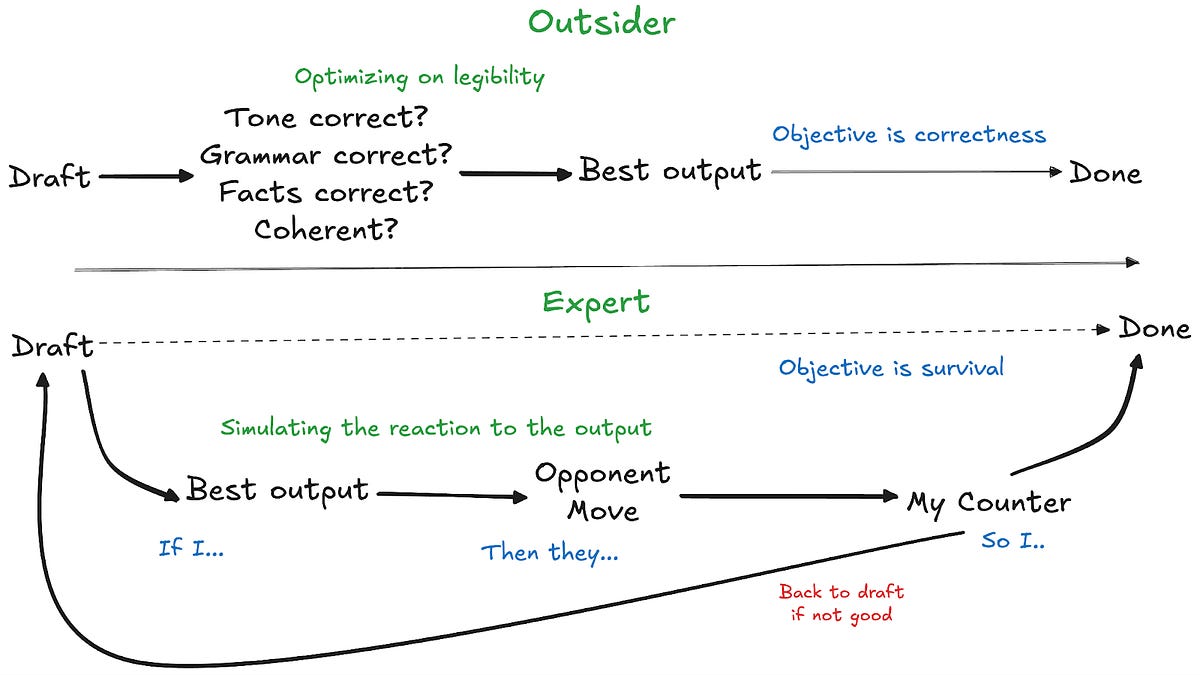

- Large language models (LLMs) can produce text that simulates high-quality knowledge work while lacking its actual quality and accuracy.

- Because LLM outputs are polished on the surface, evaluators increasingly rely on proxy measures to judge work quality.

- Companies are prioritizing the quantity of LLM-generated content over thorough quality checks, which risks poor decision-making.

- LLMs are optimized to produce outputs that appear high quality, not outputs that are truthful or useful.

Community Sentiment

Positives

- The discussion highlights that LLM-generated reports can look polished yet still be flawed, underscoring the need to evaluate AI-generated content critically.

- Readers' growing ability to recognize AI signatures in text suggests they are becoming more discerning, which may raise standards in knowledge work.

- The conversation about how quality is being redefined for AI-generated work shows a growing awareness of how difficult AI outputs are to assess.

Concerns

- Commenters question the reliability of LLMs, which can produce shoddy or error-filled work that is hard to distinguish from quality work.

- The article suggests that, without identifiable "tells" in AI outputs, low-quality work could proliferate, making knowledge work harder to assess.

- Some commenters argue that automation should be perfectly competent; their dissatisfaction with current AI performance reflects an expectation of error rates better than a human's.