Train Your Own LLM from Scratch

github.com

May 5, 2026

Summary

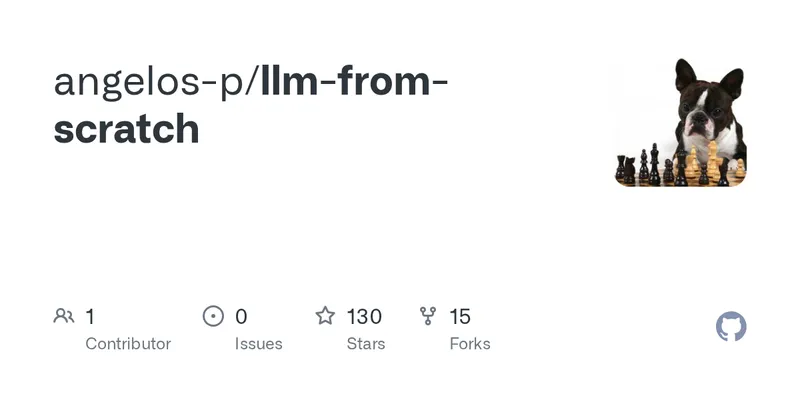

The GitHub repository angelos-p/llm-from-scratch offers a hands-on workshop for building a GPT training pipeline from scratch. The workshop focuses on reproducing the 124-million-parameter GPT-2 model in PyTorch.

Key Takeaways

- The workshop allows participants to build a GPT training pipeline from scratch, covering essential components like tokenization, model architecture, and training loops.

- The project targets a ~10M parameter model that can be trained on a laptop in under an hour, on an Apple Silicon GPU, an NVIDIA GPU, or a CPU.

- Participants will write code for various parts of the model, including a character-level tokenizer, transformer architecture, and text generation methods.

- The workshop is designed for people who are comfortable with Python; no prior machine learning experience is required.
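
To make the character-level tokenizer mentioned above concrete, here is a minimal sketch in plain Python. It is an illustration under assumptions, not the workshop's actual code: the class name `CharTokenizer`, its methods, and the tiny sample corpus are all hypothetical. The idea is simply to give each distinct character in the training text an integer ID and to map back and forth.

```python
class CharTokenizer:
    """Hypothetical character-level tokenizer (not taken from the repository)."""

    def __init__(self, text: str) -> None:
        # The vocabulary is every distinct character in the text, sorted so
        # that the character-to-ID assignment is deterministic.
        chars = sorted(set(text))
        self.stoi = {ch: i for i, ch in enumerate(chars)}
        self.itos = {i: ch for ch, i in self.stoi.items()}

    @property
    def vocab_size(self) -> int:
        return len(self.stoi)

    def encode(self, s: str) -> list[int]:
        # Raises KeyError for characters that were not in the original text.
        return [self.stoi[ch] for ch in s]

    def decode(self, ids: list[int]) -> str:
        return "".join(self.itos[i] for i in ids)


# Sample corpus chosen only for illustration.
corpus = "hello world"
tok = CharTokenizer(corpus)
ids = tok.encode("hello")
print(tok.vocab_size)   # 8 distinct characters, including the space
print(ids)              # [3, 2, 4, 4, 5]
print(tok.decode(ids))  # hello
```

A character-level vocabulary stays very small, which suits a small model trained on a laptop, at the cost of longer token sequences than a subword tokenizer would produce.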