We decreased our LLM costs with Opus

mendral.com

April 29, 2026

Summary

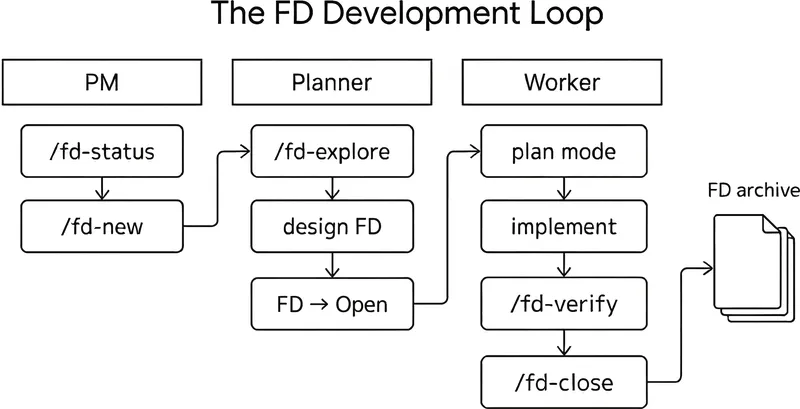

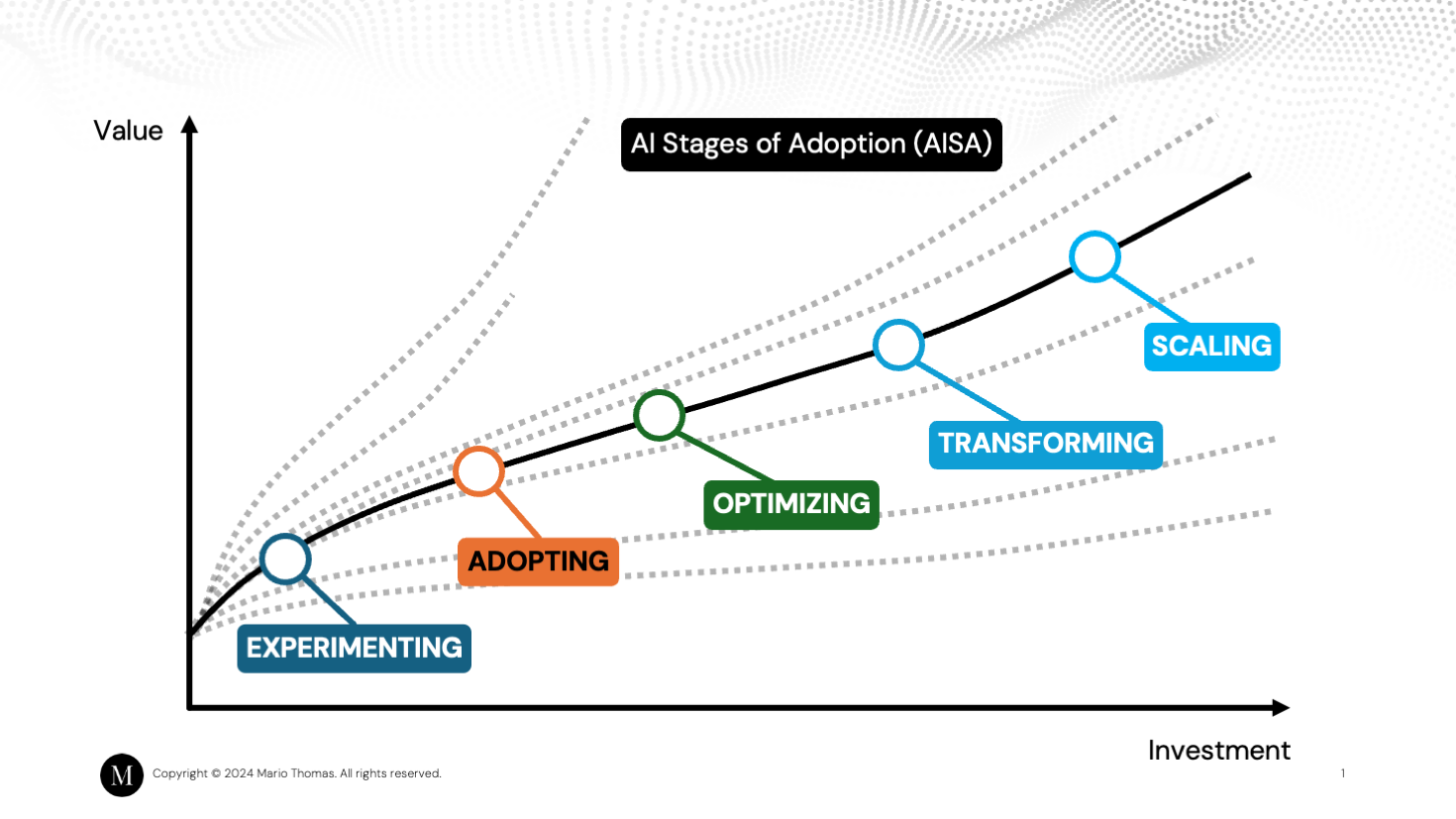

Moving from Sonnet 4.0 to Opus 4.6 lowered operational costs, even though Opus is the more expensive model. In the new architecture, a low-cost agent decides whether the expensive model needs to be engaged at all, and 80% of failures never reach it.
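
A rough expected-cost sketch helps show why a pricier model can still lower the bill. The symbols are assumptions for illustration, not figures from the article: $c_t$ is the cost of one triage call, $c_o$ the cost of one full investigation with the expensive model, $c_s$ the cost of one investigation under the old setup (assuming every failure was investigated there), and $f$ the fraction of failures the triager filters out (0.8, per the summary).

$$
\mathbb{E}[\text{cost per failure}] = c_t + (1 - f)\,c_o = c_t + 0.2\,c_o
$$

Under these assumptions the new setup is cheaper whenever $c_t + 0.2\,c_o < c_s$. If the triage cost is negligible, that means the expensive investigation can cost up to about five times the old one before the savings disappear.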

Key Takeaways

- The move from Sonnet 4.0 to Opus 4.6 lowered operational costs because a low-cost agent filters out 80% of failures before they are escalated to the more expensive model.

- A Haiku "triager" agent checks failures against known issues first, so duplicates are handled cheaply and do not trigger a costly investigation.

- Agents pull the log context they need through a SQL interface instead of having predefined log lines pushed to them, which avoids biasing the investigation.

- Opus forms hypotheses about each failure and coordinates Haiku sub-agents that run targeted searches to test them.
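
The triage-then-escalate flow and the pull-based log access described above can be sketched roughly as follows. This is a minimal illustration under stated assumptions, not the article's implementation: the `Failure` type, the `logs` table schema, the signature-matching rule, and every function name are hypothetical, and the model calls are replaced by plain Python stand-ins.

```python
import sqlite3
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Failure:
    job_id: str
    signature: str  # hypothetical normalized error fingerprint


def triage(failure: Failure, known_issues: dict[str, str]) -> Optional[str]:
    """Stand-in for the cheap triager agent: return a known issue id, or None."""
    return known_issues.get(failure.signature)


def pull_logs(conn: sqlite3.Connection, job_id: str, pattern: str,
              limit: int = 20) -> list[str]:
    """Pull model: the investigator asks for the log lines it wants."""
    rows = conn.execute(
        "SELECT line FROM logs WHERE job_id = ? AND line LIKE ? "
        "ORDER BY ts LIMIT ?",
        (job_id, pattern, limit),
    ).fetchall()
    return [row[0] for row in rows]


def handle(failure: Failure, known_issues: dict[str, str],
           conn: sqlite3.Connection,
           investigate: Callable[[Failure, Callable[[str], list[str]]], str]) -> str:
    """Escalate to the expensive investigator only when triage finds no match."""
    issue = triage(failure, known_issues)
    if issue is not None:
        return f"duplicate of {issue}"
    # Hand the investigator a query function instead of pre-selected log lines.
    return investigate(failure, lambda pattern: pull_logs(conn, failure.job_id, pattern))


def demo_investigator(failure: Failure, query: Callable[[str], list[str]]) -> str:
    """Stand-in for the expensive model: it decides what to search for."""
    hits = query("%timeout%")
    return f"investigated {failure.job_id}: {len(hits)} matching log lines"


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE logs (job_id TEXT, ts INTEGER, line TEXT)")
    conn.executemany(
        "INSERT INTO logs VALUES (?, ?, ?)",
        [("job-2", 1, "step build ok"), ("job-2", 2, "connect timeout after 30s")],
    )
    known = {"flaky-dns": "ISSUE-1"}
    print(handle(Failure("job-1", "flaky-dns"), known, conn, demo_investigator))
    print(handle(Failure("job-2", "new-sig"), known, conn, demo_investigator))
```

Passing a query function rather than a fixed log excerpt mirrors the pull-based design: the investigator chooses its own search terms, so whoever set up the pipeline does not pre-decide which lines look relevant. The fan-out to Haiku sub-agents is left out of this sketch.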

Community Sentiment

Positives

- The Haiku triager pattern cuts costs by preventing unnecessary escalation to more expensive models like Opus.

- When properly scoped, Haiku handles specific tasks effectively, which points to the potential for cost-effective AI solutions.

- The evolution of Opus showcases improved reasoning abilities, indicating advancements in model architecture that can enhance decision-making processes in AI applications.

Concerns

- The article's title is seen as misleading, which raises doubts about the transparency and credibility of the cost-reduction claims.

- There are doubts about whether RAG is still effective, with the suggestion that reliance on traditional methods may be diminishing as newer models emerge.