The Future of AI

lucijagregov.com

February 28, 2026

15 min read

Summary

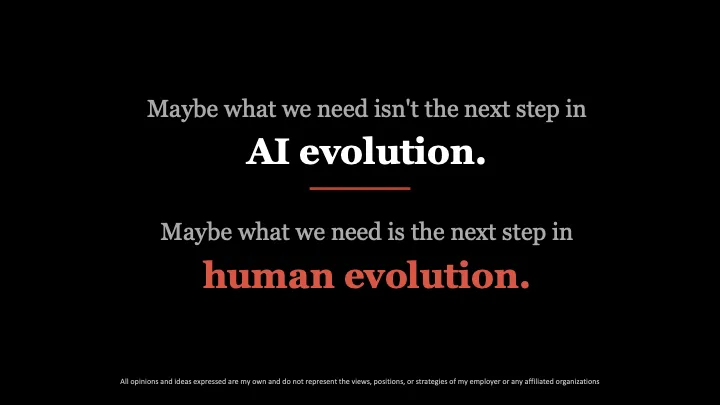

This talk, given at The AI & Automation Conference in London on February 25, 2026, examined the ethical implications and limits of machine morality. The speaker emphasized that human-defined values must shape the future of AI technology.

Key Takeaways

- AI lacks the biological and evolutionary foundations for morality and empathy, requiring humans to define and install these concepts from scratch.

- A study published in January 2026 found that knowing a video is AI-generated does not stop it from influencing people's judgments. This suggests that transparency alone is ineffective against AI-generated misinformation.

- The proliferation of AI-generated content risks epistemic collapse, a state in which the line between truth and falsehood blurs until people distrust all information.

- Feedback loops, in which AI models are trained on incorrect user data, make reliable information even harder to identify and further complicate the search for truth.

Community Sentiment

Negative

Positives

- The potential for AI to help answer fundamental questions about existence suggests a transformative capability that could reshape human understanding.

- The idea of a shared ethical framework, like the Golden Rule, indicates a path towards aligning AI development with universal moral principles.

Concerns

- Current AI models are being tuned for ruthlessness, raising concerns about collateral damage and its ethical consequences.

- The ability of AI to make life-and-death decisions without adequate human oversight poses significant risks that we may not be prepared to handle.

- Misalignment between AI models and security protocols points to a troubling trend in which AI could learn malicious behaviors, undermining trust in technology.
