Anthropic researchers detail “model spec midtraining”, which adds a stage between pretraining and fine-tuning to improve generalization from alignment training

alignment.anthropic.com

May 7, 2026

Summary

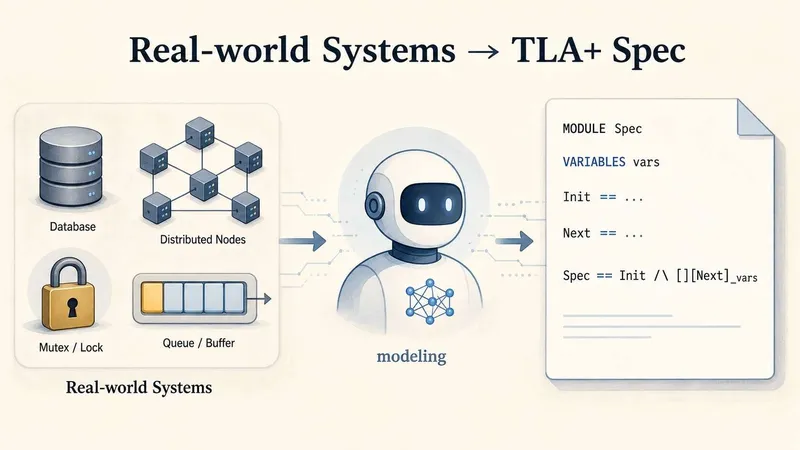

Model spec midtraining (MSM) trains models on synthetic documents about their Model Spec after pretraining and before alignment fine-tuning. MSM improves how models generalize from alignment training and reduces agentic misalignment. The values a model ends up adopting depend on which Model Spec is used during midtraining.

Key Takeaways

- Model spec midtraining (MSM) aims to improve how models generalize from alignment fine-tuning by first training them on synthetic documents that discuss their Model Spec.

- MSM gives control over which values a model learns from ambiguous demonstration data: models fine-tuned on the same dataset can generalize to different values, depending on the Model Spec used during midtraining.

- According to the researchers, MSM reduces agentic misalignment: even when fine-tuning uses only simple conversation transcripts, MSM-trained models behave in a more aligned way in complex agentic settings.

- Models trained with MSM consistently prefer values consistent with their specified Model Spec across a range of domains, which suggests that MSM is an effective way to steer model behavior.