DeepSeek V4–almost on the frontier, a fraction of the price

simonwillison.net

May 1, 2026

Summary

DeepSeek has released two preview models in its V4 series: DeepSeek-V4-Pro and DeepSeek-V4-Flash. The Pro model has 1.6 trillion total parameters, 49 billion of them active, while the Flash model has 284 billion total and 13 billion active. Both are Mixture of Experts models with a 1 million token context window, released under the MIT license.
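In a Mixture of Experts model only a subset of the weights is used for each token, so per-token compute scales roughly with the active parameter count rather than the total. The short sketch below just works out that active share from the figures quoted above; the `MODELS` table and `active_fraction` helper are illustrative names, not part of any DeepSeek API.

```python
# Parameter counts (in billions) as quoted in the summary above.
# MODELS and active_fraction are hypothetical, illustration-only names.
MODELS = {
    "DeepSeek-V4-Pro": {"total_b": 1600, "active_b": 49},
    "DeepSeek-V4-Flash": {"total_b": 284, "active_b": 13},
}


def active_fraction(total_b: float, active_b: float) -> float:
    """Share of all weights used per token in a Mixture of Experts model."""
    return active_b / total_b


for name, p in MODELS.items():
    frac = active_fraction(p["total_b"], p["active_b"])
    print(f"{name}: {p['active_b']}B of {p['total_b']}B active per token ({frac:.1%})")
```

By this arithmetic Pro activates about 3% of its weights per token and Flash about 5%, which is why both can be far cheaper to serve than their total size suggests.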

Key Takeaways

- DeepSeek released two models in the V4 series: DeepSeek-V4-Pro with 1.6 trillion total parameters and DeepSeek-V4-Flash with 284 billion total parameters.

- DeepSeek-V4-Pro is the largest open weights model available, larger than both Kimi K2.6 and GLM-5.1.

- DeepSeek-V4-Flash is priced at $0.14 per million input tokens and $0.28 per million output tokens, making it the cheapest of the small models.

- DeepSeek-V4-Pro is competitive with frontier models on reasoning benchmarks but trails GPT-5.4 and Gemini-3.1-Pro by roughly 3 to 6 months.
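To make the Flash pricing concrete, here is a rough cost estimate for a hypothetical workload. It assumes the quoted per-million-token rates are the whole price, ignoring any caching discounts or other pricing tiers, and the `flash_cost_usd` helper is an illustrative name rather than part of any SDK.

```python
# DeepSeek-V4-Flash prices as quoted above, in US dollars per million tokens.
INPUT_PRICE_PER_M = 0.14
OUTPUT_PRICE_PER_M = 0.28


def flash_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Estimated cost in USD, assuming flat per-token rates (hypothetical helper)."""
    return (input_tokens * INPUT_PRICE_PER_M
            + output_tokens * OUTPUT_PRICE_PER_M) / 1_000_000


# Hypothetical workload: 10 million input tokens, 2 million output tokens.
print(f"${flash_cost_usd(10_000_000, 2_000_000):.2f}")
```

At these rates that workload comes to under two dollars, since output tokens cost only twice as much as input tokens.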

Community Sentiment

Positives

- DeepSeek V4 Pro offers quality comparable to OpenAI's models at a significantly lower price, making it attractive to budget-conscious developers.

- DeepSeek's API pricing is highly competitive: users can process a large volume of tokens for a fraction of what other providers charge.

- Users report that the model performs well in practical work such as frontend development, suggesting it is versatile across use cases.

Concerns

- The reliance on familiar informal tests such as the pelican benchmark (asking the model to draw an SVG of a pelican riding a bicycle) raises concerns about how well the model's innovation and its ability to tackle novel challenges are being measured.

- Some users feel that many AI models, DeepSeek included, have converged on similar outputs, suggesting little differentiation in capabilities.

Related Articles

Qwen3.5 122B and 35B models offer Sonnet 4.5 performance on local computers

Feb 28, 2026

OpenAI should build Slack

Feb 14, 2026

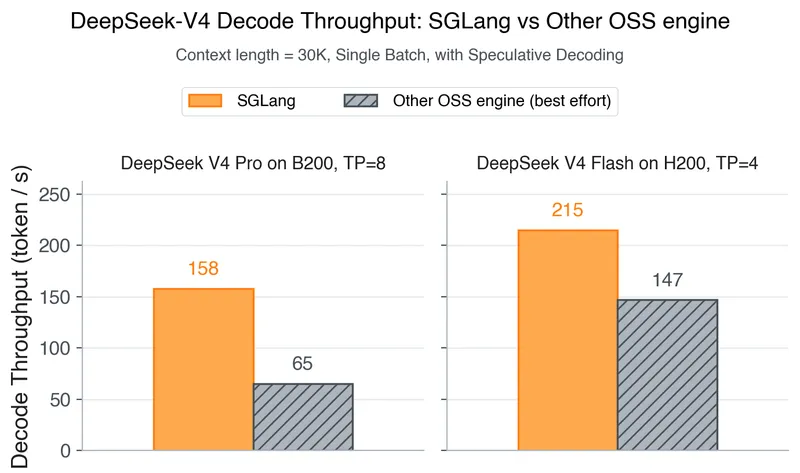

DeepSeek-V4 on Day 0: From Fast Inference to Verified RL with SGLang and Miles

Apr 25, 2026

Step 3.5 Flash – Open-source foundation model, supports deep reasoning at speed

Feb 19, 2026

GPT-5.4

Mar 5, 2026