Small models also found the vulnerabilities that Mythos found

aisle.com

April 11, 2026

Summary

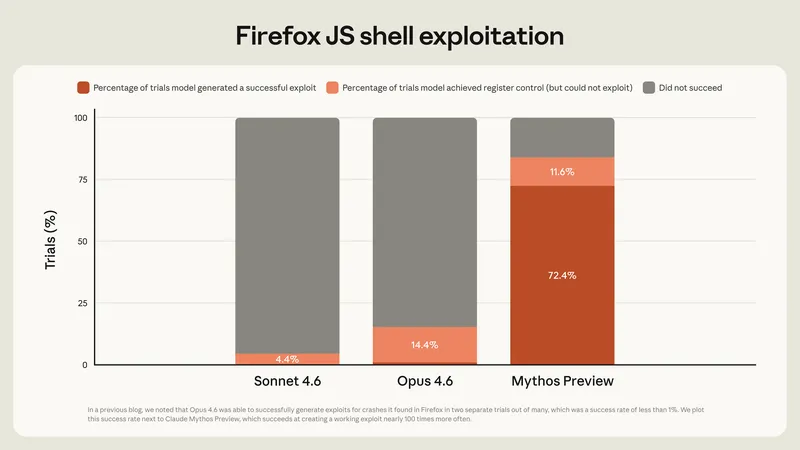

The showcase vulnerabilities from Anthropic's Mythos were re-tested on small, inexpensive, open-weight models, which produced similar analysis results. AI cybersecurity capability does not scale smoothly with model size, suggesting that the security moat lies in the surrounding system rather than in the model itself.

Key Takeaways

- Anthropic's Mythos model autonomously discovered thousands of zero-day vulnerabilities across major operating systems and web browsers, including a 27-year-old bug in OpenBSD.

- Testing revealed that smaller, cheaper open-weight models successfully detected the same vulnerabilities as Mythos, indicating that AI cybersecurity capability does not scale smoothly with model size.

- The effectiveness of AI models in cybersecurity varies significantly by task, with no single model consistently outperforming others across all tasks.

- AISLE has identified and patched numerous critical vulnerabilities using a range of models, and stresses that maintainer acceptance is an important part of the security remediation process.

Community Sentiment

Positives

- Small, inexpensive models demonstrated the ability to detect vulnerabilities effectively, suggesting that advanced AI capabilities can be accessible to a broader audience.

- The ability to use smaller models for initial vulnerability detection, followed by more powerful models for verification, could streamline the security analysis process and reduce costs.

- The findings indicate that with proper tooling and context, smaller models can perform competitively in vulnerability detection, which may democratize security analysis.
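
The screen-then-verify idea raised above can be sketched as a simple cascade. This is a minimal illustration under stated assumptions, not AISLE's or Anthropic's pipeline: `Screener`, `Verifier`, `cascade`, `toy_screen`, and `toy_verify` are hypothetical names, and the two toy functions are string-matching stand-ins for a small model and a larger model respectively.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

# Hypothetical interfaces: in a real system each would wrap a model call.
Screener = Callable[[str], float]  # cheap model: suspicion score in [0, 1]
Verifier = Callable[[str], bool]   # expensive model: confirm or reject


@dataclass(frozen=True)
class Finding:
    name: str
    screen_score: float
    confirmed: bool


def cascade(snippets: Dict[str, str], screen: Screener, verify: Verifier,
            threshold: float = 0.5) -> List[Finding]:
    """Run the cheap screener on everything, the verifier only on flagged code."""
    findings = []
    for name, code in snippets.items():
        score = screen(code)
        if score < threshold:
            continue  # not flagged: the expensive verifier is never called
        findings.append(Finding(name, score, verify(code)))
    return findings


# Toy stand-ins (assumptions for illustration only, not real model behavior).
def toy_screen(code: str) -> float:
    # Flags any use of memcpy as suspicious.
    return 0.9 if "memcpy" in code else 0.1


def toy_verify(code: str) -> bool:
    # Crude check: treats a memcpy with no preceding length guard as a bug.
    return "memcpy" in code and "if (" not in code


snippets = {
    "copy_header": "memcpy(dst, src, len);",
    "add": "return a + b;",
    "safe_copy": "if (len <= sizeof(dst)) memcpy(dst, src, len);",
}
results = cascade(snippets, toy_screen, toy_verify)
confirmed = [f.name for f in results if f.confirmed]
```

The cost saving comes from the `continue` branch: only snippets that pass the screening threshold reach the verifier, so the threshold trades missed bugs against verification spend.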

Concerns

- Handing a model the isolated vulnerable code is a different task from finding the bug in a full codebase, so results from small models may not carry over to real-world scenarios.

- Smaller models may struggle with the complexity of large codebases, as they often require contextual hints to identify vulnerabilities effectively.

- The reliance on harnesses and tooling suggests that a model's capabilities alone may not be sufficient for autonomous vulnerability discovery.