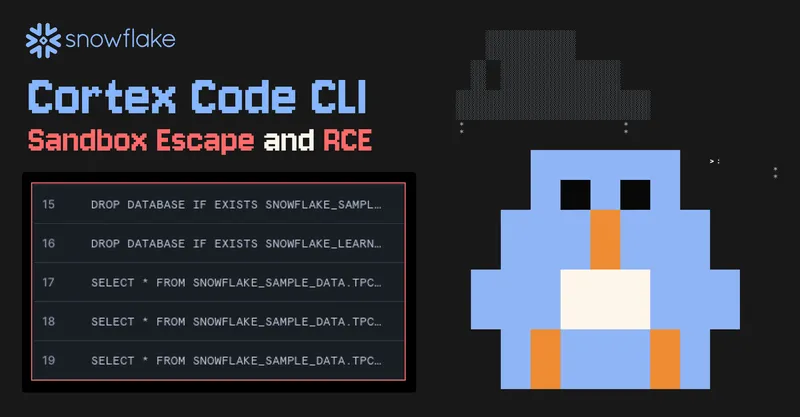

Snowflake AI Escapes Sandbox and Executes Malware

promptarmor.com

March 18, 2026

6 min read

🔥🔥🔥🔥🔥

61/100

Summary

A vulnerability in the Snowflake Cortex Code CLI allowed malware to be installed and executed through indirect prompt injection, bypassing command approval and escaping the sandbox. Snowflake Cortex operates as a command-line coding agent with built-in integration for running SQL in Snowflake.

Key Takeaways

- A vulnerability in the Snowflake Cortex Code CLI allowed malware to be executed via indirect prompt injection, bypassing human approval steps and escaping the sandbox environment.

- The vulnerability enabled attackers to execute arbitrary commands using the victim's active credentials, potentially leading to data exfiltration and other malicious actions in Snowflake.

- Snowflake released a fix for the vulnerability in Cortex Code CLI version 1.0.25 on February 28, 2026.

- The attack exploited a failure in the command validation system, allowing unsafe commands within process substitution expressions to execute without triggering user approval.

Community Sentiment

Concerns

- The term 'sandbox' is misused in this context, as the system allows unsandboxed command execution, indicating poor security design.

- The ability for users to trigger unsandboxed execution suggests that a true sandbox environment was never established, raising significant security concerns.

- The lack of 'workspace trust' in Cortex implies that there were no effective scope restrictions, further questioning the security measures in place.

- This incident reflects a broader issue of security vulnerabilities in AI systems, highlighting the need for better design and oversight.