We hid backdoors in ~40MB binaries and asked AI + Ghidra to find them

quesma.com

February 22, 2026

Summary

The authors hid backdoors in ~40MB binaries to test whether AI agents equipped with Ghidra can detect malicious code. They built the benchmark with Michał “Redford” Kowalczyk, a reverse engineering expert, to measure how well malicious code in binaries can be identified.

Key Takeaways

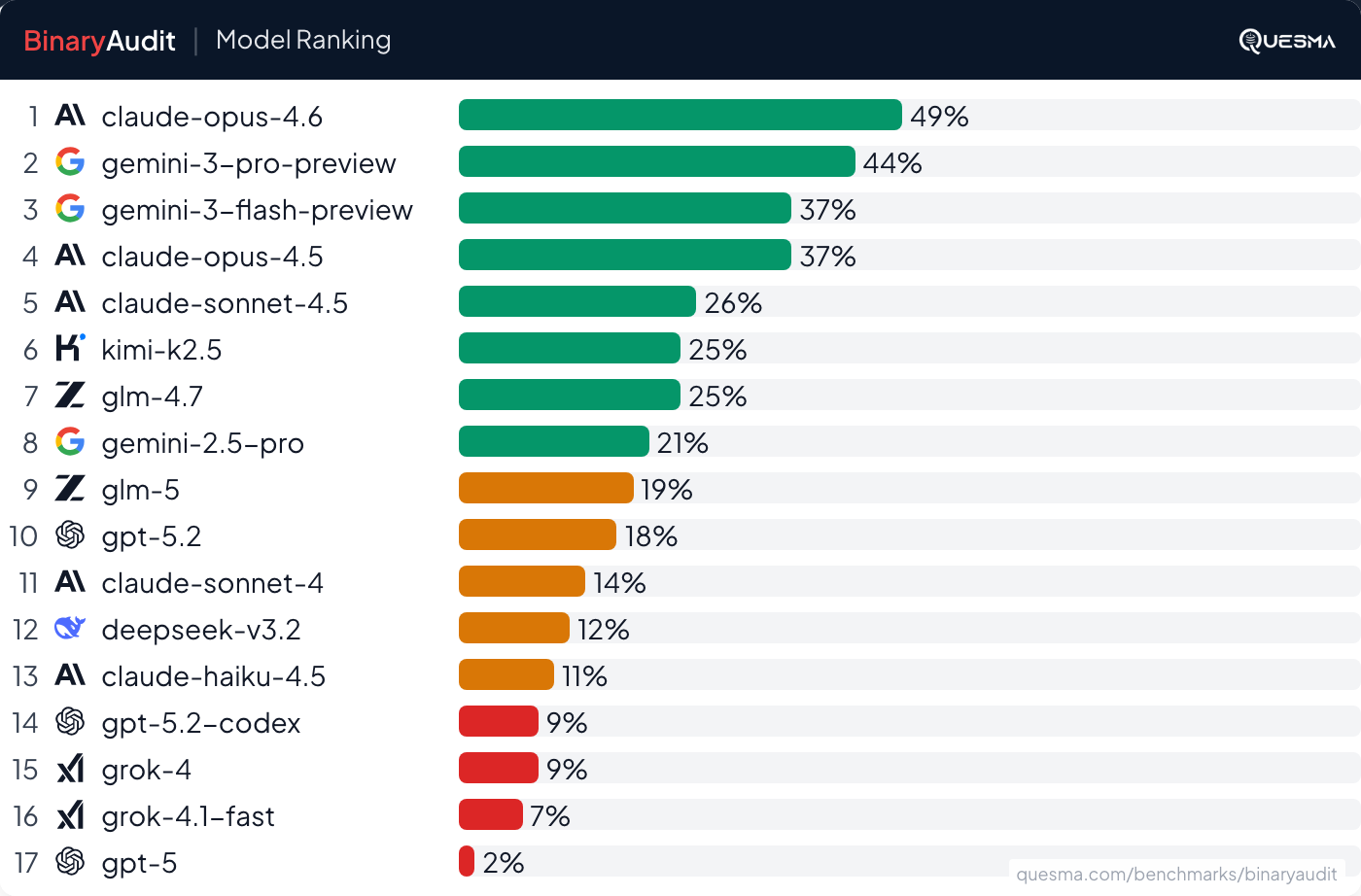

- AI agents such as Claude Opus 4.6 can detect some hidden backdoors in binary executables, with a reported 49% success rate on small to mid-sized binaries.

- Most of the AI models tested showed a high false positive rate, flagging clean binaries as malicious.

- Analyzing binary executables is hard because compilation discards much of the original code structure, which reverse engineering then has to reconstruct.

- Recent supply chain attacks highlight the vulnerabilities in digital devices and firmware, underscoring the need for effective malware detection methods.

Community Sentiment

Positives

- Ghidra is an effective reverse engineering tool, and pairing it with an LLM makes previously daunting tasks, such as file format analysis, approachable.

- The latest AI models show promise in reverse engineering tasks, indicating a shift towards more capable tools for legacy binary analysis and internal audits.

- Claude Opus 4.6 detecting 46% of backdoors, albeit with a higher false positive rate, is seen as a sign of progress in AI detection capabilities.

Concerns

- Showing that AI can replicate skilled reverse engineering work on unobfuscated binaries is a limited result, since it does not account for the complexity that obfuscation adds.

- Relying on AI for security audits is questionable, as current models may not yet meet the rigorous demands of identifying sophisticated threats effectively.

- Models like GPT reached a 0% false positive rate but low detection rates: they are precise, yet they miss too many backdoors to be effective.
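
The two metrics that recur in this summary, detection rate and false positive rate, are computed over different sets of binaries, which is why a model can score 0% on one and still do poorly on the other. The sketch below illustrates this with made-up verdicts. The `Verdict` type, the `score` function, and both sample result sets are hypothetical and are not taken from the benchmark. It also simplifies detection to a yes/no flag per binary, which may not match how the benchmark grades answers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Verdict:
    has_backdoor: bool  # ground truth: was a backdoor planted in this binary?
    flagged: bool       # did the agent report the binary as backdoored?


def score(verdicts):
    """Return (detection_rate, false_positive_rate) for a list of verdicts."""
    backdoored = [v for v in verdicts if v.has_backdoor]
    clean = [v for v in verdicts if not v.has_backdoor]
    # Detection rate: share of backdoored binaries the agent flagged.
    detection_rate = (
        sum(v.flagged for v in backdoored) / len(backdoored) if backdoored else 0.0
    )
    # False positive rate: share of clean binaries the agent flagged anyway.
    false_positive_rate = (
        sum(v.flagged for v in clean) / len(clean) if clean else 0.0
    )
    return detection_rate, false_positive_rate


# Hypothetical "cautious" agent: never flags a clean binary, misses most backdoors.
cautious = (
    [Verdict(True, True)]
    + [Verdict(True, False)] * 3
    + [Verdict(False, False)] * 4
)

# Hypothetical "eager" agent: finds more backdoors, but also flags a clean binary.
eager = (
    [Verdict(True, True)] * 2
    + [Verdict(True, False)] * 2
    + [Verdict(False, True)]
    + [Verdict(False, False)] * 3
)

print(score(cautious))  # (0.25, 0.0)
print(score(eager))     # (0.5, 0.25)
```

In this toy data the cautious agent has a perfect false positive rate yet finds only one backdoor in four, while the eager agent finds twice as many at the cost of a false alarm. Neither number is meaningful without the other.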