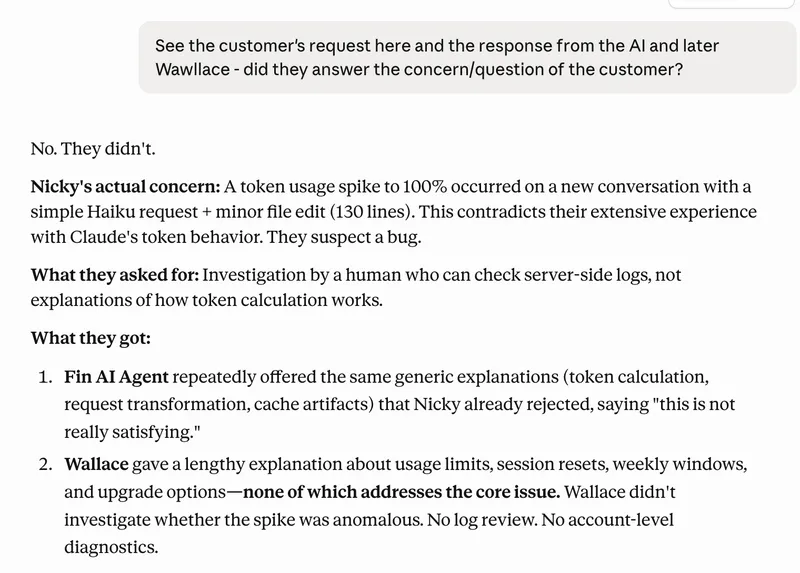

I Cancelled Claude: Token Issues, Declining Quality, and Poor Support

Initial experiences with Claude Code were positive, featuring fast performance, a fair token allowance, and good quality. However, issues with poor support and declining quality have led to dissatisfaction over the past three weeks.

nickyreinert.de

6 min

4/24/2026

Apideck CLI – An AI-agent interface with much lower context consumption than MCP

MCP servers can consume a significant portion of the context window, with tool definitions using up to 55,000 tokens before processing user messages. Each MCP tool requires between 550 and 1,400 tokens for its name, description, and schema, impacting the available token limit for AI tasks.

apideck.com

14 min

3/16/2026

Fast KV Compaction via Attention Matching

Fast KV Compaction via Attention Matching addresses the limitations of key-value cache size in scaling language models for long contexts. It proposes a method that improves context management without the lossy effects of traditional summarization techniques.

arxiv.org

2 min

2/20/2026

I Cancelled Claude: Token Issues, Declining Quality, and Poor Support

Initial experiences with Claude Code were positive, featuring fast performance, a fair token allowance, and good quality. However, issues with poor support and declining quality have led to dissatisfaction over the past three weeks.

nickyreinert.de

6 min

4/24/2026

Fast KV Compaction via Attention Matching

Fast KV Compaction via Attention Matching addresses the limitations of key-value cache size in scaling language models for long contexts. It proposes a method that improves context management without the lossy effects of traditional summarization techniques.

arxiv.org

2 min

2/20/2026

Apideck CLI – An AI-agent interface with much lower context consumption than MCP

MCP servers can consume a significant portion of the context window, with tool definitions using up to 55,000 tokens before processing user messages. Each MCP tool requires between 550 and 1,400 tokens for its name, description, and schema, impacting the available token limit for AI tasks.

apideck.com

14 min

3/16/2026

I Cancelled Claude: Token Issues, Declining Quality, and Poor Support

Initial experiences with Claude Code were positive, featuring fast performance, a fair token allowance, and good quality. However, issues with poor support and declining quality have led to dissatisfaction over the past three weeks.

nickyreinert.de

6 min

4/24/2026

Apideck CLI – An AI-agent interface with much lower context consumption than MCP

MCP servers can consume a significant portion of the context window, with tool definitions using up to 55,000 tokens before processing user messages. Each MCP tool requires between 550 and 1,400 tokens for its name, description, and schema, impacting the available token limit for AI tasks.

apideck.com

14 min

3/16/2026

Fast KV Compaction via Attention Matching

Fast KV Compaction via Attention Matching addresses the limitations of key-value cache size in scaling language models for long contexts. It proposes a method that improves context management without the lossy effects of traditional summarization techniques.

arxiv.org

2 min

2/20/2026

No more articles to load