AMD Ryzen AI NPUs are finally useful under Linux for running LLMs

phoronix.com

March 11, 2026

4 min read

Summary

AMD has developed the AMDXDNA accelerator driver for the mainline Linux kernel to support its Ryzen AI NPUs. However, user-space software that can make effective use of these NPUs on Linux has been limited, so most Linux AI applications have relied on the integrated GPU (iGPU) instead. New releases of Lemonade and FastFlowLM now bring NPU-backed LLM inference to Linux.

Key Takeaways

- AMD has developed the AMDXDNA accelerator driver for supporting Ryzen AI NPUs in the mainline Linux kernel.

- The Lemonade 10.0 server now includes Linux NPU support for large language models and Whisper, alongside native integration with Claude Code.

- FastFlowLM 0.9.35 has been released, providing official native Linux support for current-gen Ryzen AI NPUs with context lengths up to 256k tokens.

- Ryzen AI NPU support on Linux works with all current AMD Ryzen AI 300/400 series SoCs and requires either the Linux 7.0 kernel or a kernel with the AMDXDNA driver backported.
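To check whether a system meets the kernel requirement above, a minimal sketch like the following can help. It assumes that a kernel release string of 7.0 or newer implies the mainline AMDXDNA driver is present, and that a loaded driver shows up as `/sys/module/amdxdna` (the module check covers distribution kernels with backports). The helper names `kernel_at_least` and `amdxdna_loaded` are hypothetical, not part of any AMD tool.

```python
import os
import platform
import re


def kernel_at_least(release: str, major: int = 7, minor: int = 0) -> bool:
    """Return True if a release string such as '7.0.1-arch1' is >= major.minor.

    Hypothetical helper: only the leading 'X.Y' is compared.
    """
    match = re.match(r"(\d+)\.(\d+)", release)
    if match is None:
        return False  # unrecognized format: treat as not meeting the requirement
    return (int(match.group(1)), int(match.group(2))) >= (major, minor)


def amdxdna_loaded(sys_module_dir: str = "/sys/module") -> bool:
    """Return True if a kernel module named 'amdxdna' appears under /sys/module.

    Assumption: a loaded (or backported) AMDXDNA driver registers under this name.
    """
    return os.path.isdir(os.path.join(sys_module_dir, "amdxdna"))


if __name__ == "__main__":
    release = platform.release()
    print(f"kernel {release}: Linux 7.0 or newer = {kernel_at_least(release)}")
    print(f"amdxdna module loaded = {amdxdna_loaded()}")
```

A kernel older than 7.0 can still pass the module check if the vendor backported the driver, which is why the sketch reports both results separately.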

Related Articles

AMD's GAIA now allows building custom AI agents via chat, becomes "true desktop app"

Apr 11, 2026

AMD's local, open-source AI can now easily interact with your Gmail

May 8, 2026

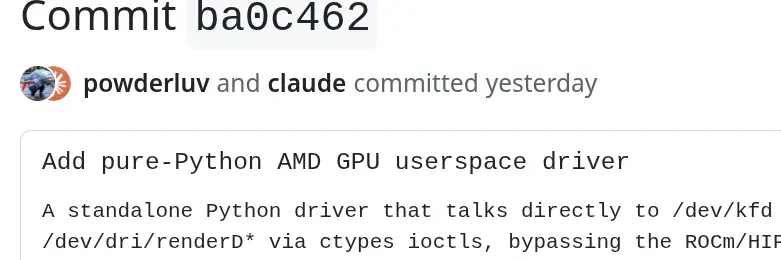

AMD engineer leverages AI to help make a pure-Python AMD GPU user-space driver

Mar 5, 2026

Lemonade by AMD: a fast and open source local LLM server using GPU and NPU

Apr 2, 2026