AMD's local, open-source AI can now easily interact with your Gmail

AMD software engineers continue rapidly advancing their open-source software efforts around local AI/LLM use on consumer-class Radeon and Ryzen hardware. AMD GAIA 0.17.6 was released on Thursday with more improvements for local AI processing on Windows, Linux, and even macOS. For those trusting enough in local LLM pipelines to do ...

phoronix.com

2 min

5d ago

(AMD) Build AI Agents That Run Locally

The GAIA SDK lets developers build local AI agents in Python and C++ that are optimized for AMD hardware. It provides tools and documentation for creating efficient AI applications.

amd-gaia.ai

1 min

4/13/2026

Taking on CUDA with ROCm: 'One Step After Another'

AMD's ROCm software stack aims to compete with Nvidia's CUDA for data center GPU market share. Succeeding is viewed as a significant challenge because of CUDA's established dominance.

eetimes.com

6 min

4/12/2026

AMD's GAIA now allows building custom AI agents via chat, becomes "true desktop app"

In addition to their efforts around the Lemonade SDK itself, AMD software engineers working on AI initiatives continue to invest heavily in the Lemonade-using GAIA, the project that originally stood for "Generative AI Is Awesome". AMD's GAIA now allows building your own custom AI agents via chatt...

phoronix.com

2 min

4/11/2026

AMD Ryzen AI NPUs are finally useful under Linux for running LLMs

AMD has developed the AMDXDNA accelerator driver for the mainline Linux kernel to support AMD Ryzen AI NPUs. User-space software for effectively utilizing these NPUs on Linux has been limited, with most applications relying on iGPU support instead.

phoronix.com

4 min

3/11/2026

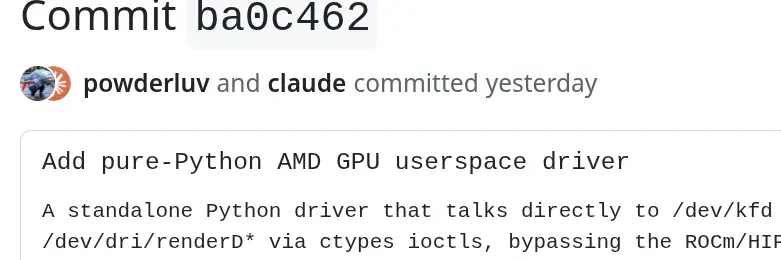

AMD engineer leverages AI to help make a pure-Python AMD GPU user-space driver

Anush Elangovan, AMD's VP of AI Software, is developing a pure-Python AMD GPU user-space driver using Claude Code. This driver aims to support the testing and debugging of the ROCm/HIP user-space stack.

phoronix.com

2 min

3/5/2026

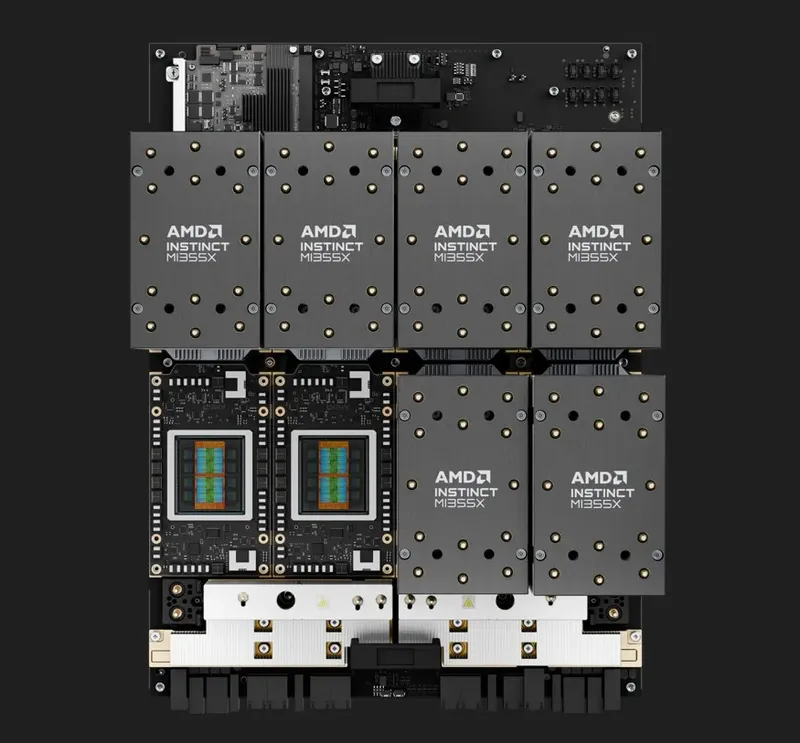

Running a One Trillion-Parameter LLM Locally on AMD Ryzen AI Max+ Cluster

A small-scale distributed inference cluster can be built using AMD’s Ryzen™ AI Max+ AI PC platform to run a one trillion-parameter Large Language Model. A four-node cluster of Framework Desktop systems demonstrates local inference of the Kimi K2.5 open-source model.

amd.com

14 min

3/1/2026
