Autoresearch on an old research idea

ykumar.me

March 23, 2026

6 min read


Summary

Karpathy's Autoresearch runs a large language model (LLM) agent inside a constrained optimization loop. The author applied it to legacy code from eCLIP, an old research project, while managing household tasks.
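
A keep-or-revert loop of this kind can be sketched as a greedy search. Each step proposes one edit, runs a short experiment, and keeps the edit only if the metric improves. This is a minimal illustration under stated assumptions, not Karpathy's code. The LLM agent and the roughly 5-minute training run are replaced by hypothetical stand-ins (`propose_edit`, `toy_eval`), and "commit" and "revert" mean keeping or discarding the candidate config rather than touching git.

```python
import random
from dataclasses import dataclass, field


@dataclass
class Log:
    committed: int = 0
    reverted: int = 0
    history: list = field(default_factory=list)  # best score after each step


def toy_eval(config: dict) -> float:
    """Hypothetical stand-in for a short train+eval run; lower is better (like mean rank)."""
    # Toy objective with its optimum near lr=0.003, width=256.
    return (config["lr"] - 0.003) ** 2 * 1e6 + abs(config["width"] - 256) / 16


def propose_edit(config: dict, rng: random.Random) -> dict:
    """Hypothetical stand-in for the agent's hypothesis plus its edit to the single training file."""
    candidate = dict(config)
    if rng.random() < 0.5:
        candidate["lr"] *= rng.choice([0.5, 2.0])
    else:
        candidate["width"] = max(16, candidate["width"] + rng.choice([-64, 64]))
    return candidate


def autoresearch_loop(config: dict, budget: int, seed: int = 0):
    rng = random.Random(seed)
    best = toy_eval(config)
    log = Log()
    for _ in range(budget):
        candidate = propose_edit(config, rng)
        score = toy_eval(candidate)
        if score < best:
            config, best = candidate, score  # "commit": keep the improving edit
            log.committed += 1
        else:
            log.reverted += 1  # "revert": discard the candidate, keep the old config
        log.history.append(best)
    return config, best, log


config, best, log = autoresearch_loop({"lr": 0.001, "width": 128}, budget=42)
print(config, best, log.committed, log.reverted)
```

Because only strict improvements are kept, the best score never gets worse. The tradeoff is that a greedy loop like this can stall in a local optimum, which is one reason the real setup asks the agent to form hypotheses rather than perturb values at random.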

Key Takeaways

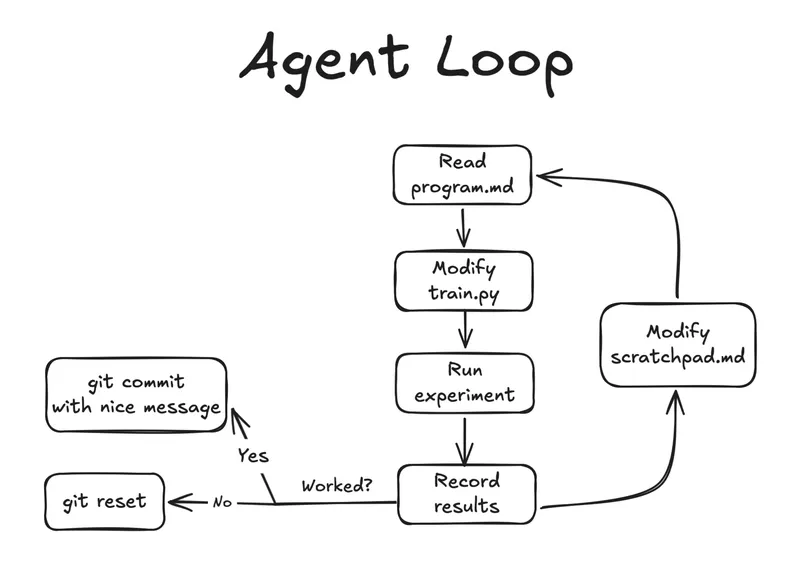

- Autoresearch is a constrained optimization loop in which an LLM agent iteratively tries to improve an evaluation metric by modifying a single file, working through structured phases of exploration.

- The experimentation process involves a quick iteration cycle of hypothesizing, editing, training, evaluating, and committing or reverting changes, with each run designed to take around 5 minutes.

- The agent used the Ukiyo-eVG dataset, roughly 11,000 Japanese woodblock prints with bounding-box annotations, to test the expert attention mechanism.

- The agent ran 42 experiments, yielding 13 committed changes and 29 reverts, and substantially improved the evaluation mean rank along the way.
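
Mean rank is a common retrieval metric, where lower is better. The sketch below assumes a retrieval-style definition: query *i*'s correct match is candidate *i* in a similarity matrix, and its rank is 1 plus the number of candidates scored strictly higher. The exact eCLIP evaluation may define it differently, and the `mean_rank` helper and sample scores are hypothetical.

```python
from typing import Sequence


def mean_rank(similarity: Sequence[Sequence[float]]) -> float:
    """Mean 1-based rank of the correct candidate, assuming query i's match is candidate i.

    Lower is better; 1.0 means every query ranks its correct match first.
    Ties are resolved optimistically (only strictly higher scores push the rank down).
    """
    ranks = []
    for i, row in enumerate(similarity):
        correct = row[i]
        # Rank = 1 + number of candidates scored strictly higher than the correct one.
        ranks.append(1 + sum(1 for score in row if score > correct))
    return sum(ranks) / len(ranks)


# Hypothetical similarity scores: rows are queries, columns are candidates.
sims = [
    [0.9, 0.1, 0.3],  # correct match ranked 1st
    [0.2, 0.4, 0.6],  # correct match ranked 2nd (0.6 beats 0.4)
    [0.5, 0.7, 0.8],  # correct match ranked 1st
]
print(mean_rank(sims))  # → 1.3333333333333333
```

Because the metric is a single scalar, it fits the keep-or-revert loop directly. A change is committed only if it lowers the mean rank.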

Community Sentiment

Positives

- Using LLMs to explore prior art can yield valuable insights; even if only a small fraction of what they surface applies, it still helps with learning and problem-solving.

- Autoresearch's structured trial-and-error approach, despite its limitations, provides a useful framework for optimizing research processes.

Concerns

- Autoresearch leans heavily on hyperparameter tuning, which raises doubts about its overall value and whether its costs justify the benefits.

- LLM recommendations are often irrelevant or poorly maintained, which can waste resources and cause inefficiencies in real-world applications.

- Autoresearch is only as effective as its evaluation metrics; if they are inadequate, they undermine the whole optimization process.

Related Articles

Autoresearch: Agents researching on single-GPU nanochat training automatically

Mar 7, 2026

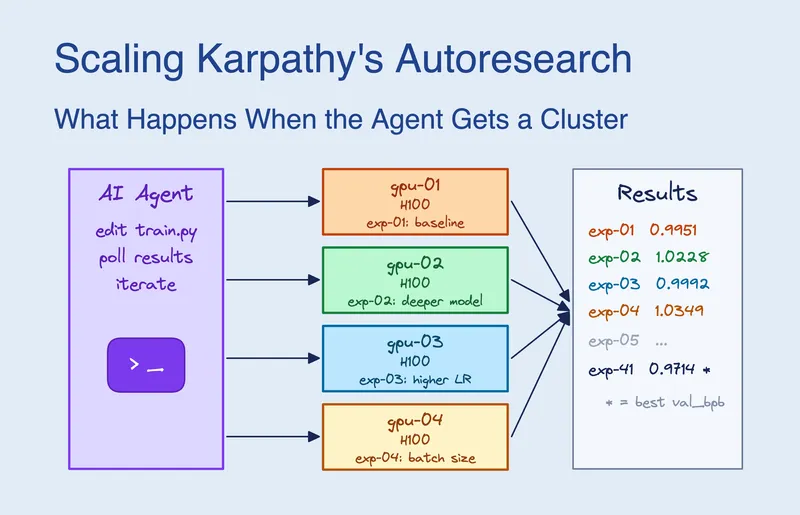

Scaling Karpathy's Autoresearch: What Happens When the Agent Gets a GPU Cluster

Mar 19, 2026

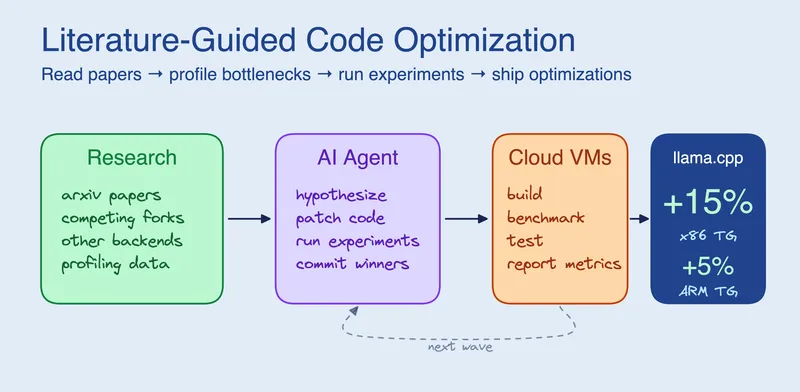

Research-Driven Agents: When an agent reads before it codes

Apr 9, 2026

Building for an audience of one: starting and finishing side projects with AI

Feb 17, 2026

I built a programming language using Claude Code

Mar 10, 2026