Scaling Karpathy's Autoresearch: What Happens When the Agent Gets a GPU Cluster

blog.skypilot.co

March 19, 2026

Summary

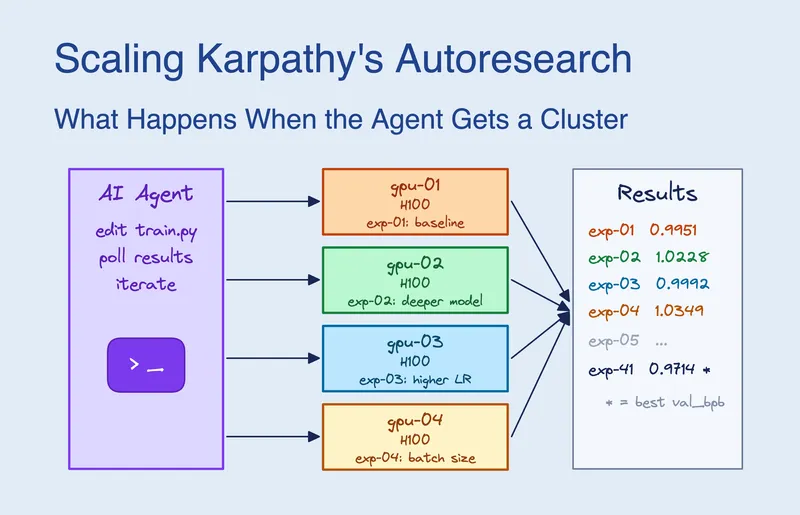

Claude Code was given access to 16 GPUs on a Kubernetes cluster and submitted approximately 910 experiments over 8 hours. It found that scaling model width mattered more than any single hyperparameter, and it lowered val_bpb (validation bits per byte, where lower is better) from 1.003 to 0.974, a 2.87% improvement.
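The headline numbers can be sanity-checked with a few lines of arithmetic. The sketch below uses only the figures quoted in this summary plus the fixed 5-minute training budget that autoresearch applies to each run. The upper bounds assume, purely for illustration, that a run costs exactly its training budget with no startup, evaluation, or scheduling overhead.

```python
# Figures quoted in the summary.
baseline_bpb = 1.003
best_bpb = 0.974
experiments = 910
hours = 8
gpus = 16
budget_minutes = 5  # fixed training budget per run

# Relative improvement computed from the rounded endpoints.
relative_improvement = (baseline_bpb - best_bpb) / baseline_bpb

# Throughput implied by the experiment count.
per_hour = experiments / hours
per_gpu_hour = per_hour / gpus

# Idealized upper bounds (assumption: zero overhead around each run).
max_runs_one_gpu = hours * 60 // budget_minutes
max_runs_cluster = max_runs_one_gpu * gpus

print(f"relative improvement: {relative_improvement:.2%}")
print(f"experiments per hour: {per_hour:.2f}")
print(f"experiments per GPU-hour: {per_gpu_hour:.1f}")
print(f"max runs on one GPU in {hours} h: {max_runs_one_gpu}")
print(f"max runs on {gpus} GPUs in {hours} h: {max_runs_cluster}")
```

From the rounded endpoints the improvement comes out at 2.89%, slightly above the reported 2.87%; the gap is presumably due to the endpoints being rounded to three decimals, though the summary does not say so. The bound of 96 runs on a single GPU also shows why roughly 910 experiments in 8 hours would not be reachable sequentially.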

Key Takeaways

- With 16 GPUs on a Kubernetes cluster, Claude Code submitted approximately 910 experiments in 8 hours and lowered validation bits per byte (val_bpb) from 1.003 to 0.974, a 2.87% improvement.

- The agent screened ideas on H100 GPUs and validated them on H200 GPUs, a strategy that made use of the cluster's heterogeneous hardware.

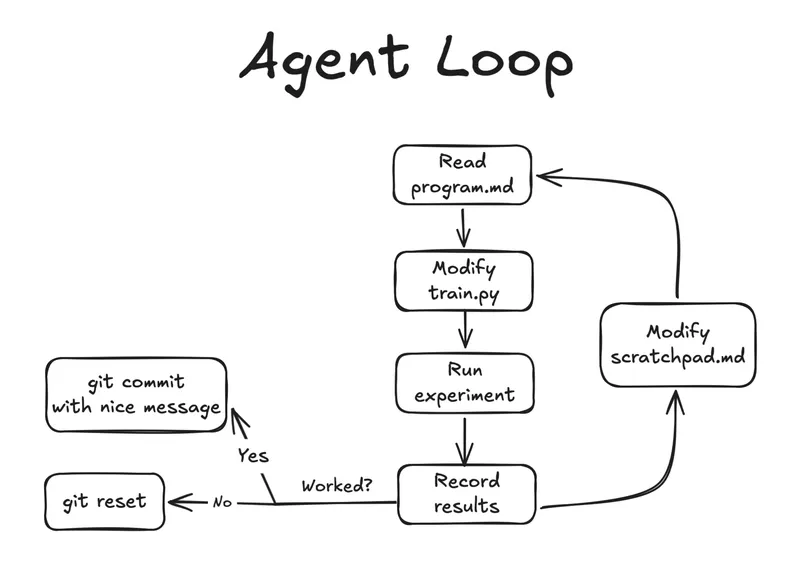

- Autoresearch, a project by Andrej Karpathy, lets an agent autonomously improve a neural network training script by editing it and running experiments, each within a fixed 5-minute training budget.

- Having multiple GPUs let the agent run factorial grid searches, which made experimentation far more efficient than sequential execution.
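Two of the takeaways above, factorial grid search and screening on one GPU tier before validating on another, can be sketched together. This is a minimal illustration and not the agent's actual code: the search space, the worker names, and the `toy_score` function are all hypothetical stand-ins, and the scores are not real training results. Lower scores are treated as better, matching val_bpb.

```python
from itertools import product

# Hypothetical search space; names and values are illustrative only.
search_space = {
    "width": [512, 768, 1024],
    "learning_rate": [0.01, 0.02],
    "weight_decay": [0.0, 0.1],
}


def factorial_grid(space):
    """Return every combination of the values in `space` as a list of dicts."""
    names = list(space)
    return [dict(zip(names, values)) for values in product(*space.values())]


def assign_round_robin(configs, workers):
    """Spread configs across workers so they can run concurrently."""
    plan = {worker: [] for worker in workers}
    for i, cfg in enumerate(configs):
        plan[workers[i % len(workers)]].append(cfg)
    return plan


def screen_then_validate(configs, screen_fn, validate_fn, top_k):
    """Score all configs with screen_fn, then re-score the best top_k
    with validate_fn and return the winner (lower is better)."""
    finalists = sorted(configs, key=screen_fn)[:top_k]
    return min(finalists, key=validate_fn)


def toy_score(cfg):
    """Made-up stand-in for a training run; NOT a real val_bpb."""
    return (
        1.0 / cfg["width"]
        + abs(cfg["learning_rate"] - 0.02)
        + cfg["weight_decay"]
    )


grid = factorial_grid(search_space)

# Hypothetical worker names standing in for the 16 GPUs.
workers = [f"gpu-{i}" for i in range(16)]
plan = assign_round_robin(grid, workers)

# In the setup described above, screening would run on H100s and
# validation on H200s; here both tiers reuse the same toy function.
best = screen_then_validate(grid, toy_score, toy_score, top_k=3)

print(f"{len(grid)} configs in the grid")
print(f"best config under the toy score: {best}")
```

A full factorial grid grows multiplicatively with each added parameter (3 × 2 × 2 = 12 here), so it is only practical when many runs can execute at once. Run sequentially, the same grid would take twelve training budgets back to back.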

Community Sentiment

Positives

- The agent autonomously identified and prioritized the better-performing GPUs, which is seen as a notable advance in AI-driven optimization that could make research more efficient.

- SkyPilot's multi-cloud capabilities are praised for making GPUs easier to access, underscoring how much infrastructure matters in AI development.

Concerns

- The reliance on brute-force search raises questions about how deeply the agent understands the problem and about the limits of its strategies.

- The 'early velocity only' training method could lead to suboptimal choices, because quick results may not reflect long-term performance.

Related Articles

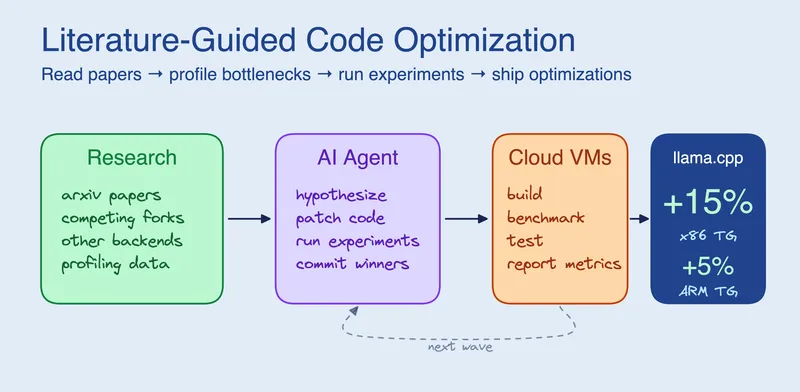

Research-Driven Agents: When an agent reads before it codes

Apr 9, 2026

Autoresearch: Agents researching on single-GPU nanochat training automatically

Mar 7, 2026

Autoresearch on an old research idea

Mar 23, 2026

Autoresearch for SAT Solvers

Mar 19, 2026

Parallel coding agents with tmux and Markdown specs

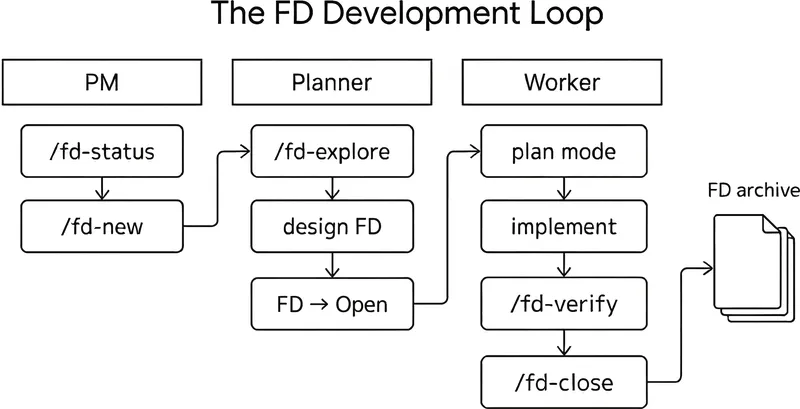

Mar 2, 2026