Research-Driven Agents: When an agent reads before it codes

blog.skypilot.co

April 9, 2026

Summary

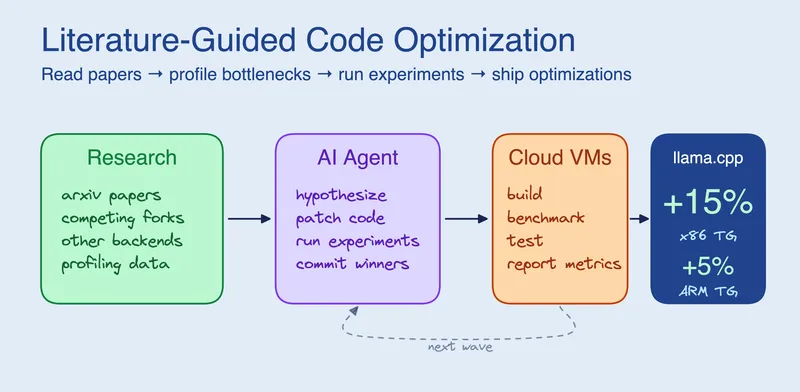

Coding agents find better optimizations when they run a literature search phase before writing code. In a test on llama.cpp using four cloud VMs, the agents produced five optimizations that sped up flash attention text generation by 15% on x86 and 5% on ARM in approximately three hours.

Key Takeaways

- Coding agents that read research papers and study competing projects find optimizations that code-only agents miss.

- In the llama.cpp experiment, adding a literature search phase produced five optimizations that sped up flash attention text generation by 15% on x86 and 5% on ARM.

- The whole optimization run cost approximately $29, using four cloud VMs for about three hours.

- Code-only agents struggle with optimization problems where the solution lies outside the codebase, as they lack the necessary domain knowledge to identify performance bottlenecks.
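
To put the cost and speedup figures above in perspective, here is a minimal back-of-the-envelope sketch. It assumes all four VMs ran for the full three hours, which the summary does not confirm, and the baseline throughput of 100 tokens/s is a made-up number used only to show what a 15% or 5% gain means. The function names are illustrative, not taken from the original post.

```python
def cost_per_vm_hour(total_cost_usd: float, vm_count: int, hours: float) -> float:
    """Average cost per VM-hour, ASSUMING every VM ran for the full duration."""
    return total_cost_usd / (vm_count * hours)


def sped_up(baseline_tokens_per_s: float, gain_percent: float) -> float:
    """Throughput after a relative speedup of gain_percent."""
    return baseline_tokens_per_s * (1 + gain_percent / 100)


if __name__ == "__main__":
    # Figures from the summary: about $29, four VMs, about three hours.
    rate = cost_per_vm_hour(29.0, 4, 3.0)
    print(f"average cost: ${rate:.2f} per VM-hour")

    # Hypothetical baseline of 100 tokens/s, for illustration only.
    baseline = 100.0
    print(f"x86 (+15%): {sped_up(baseline, 15):.1f} tokens/s")
    print(f"ARM (+5%):  {sped_up(baseline, 5):.1f} tokens/s")
```

Under that assumption the run averages roughly $2.42 per VM-hour; the real per-VM price depends on instance types and actual runtimes, which are not given here.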

Community Sentiment

Positives

- Using RST to feed arXiv papers to LLMs improves token efficiency and fidelity, both of which matter for effective summarization and knowledge extraction.

- Agent Tuning provides a method to quantify an agent's self-reflection capabilities, enhancing the understanding of how coding agents process instructions.

- Keeping a directory of annotated papers in a project can improve the quality of AI applications by letting them draw on existing research.

Concerns

- Guessing at optimizations without profiling points to a lack of systematic method in AI development, which could lead to inefficient solutions.

Related Articles

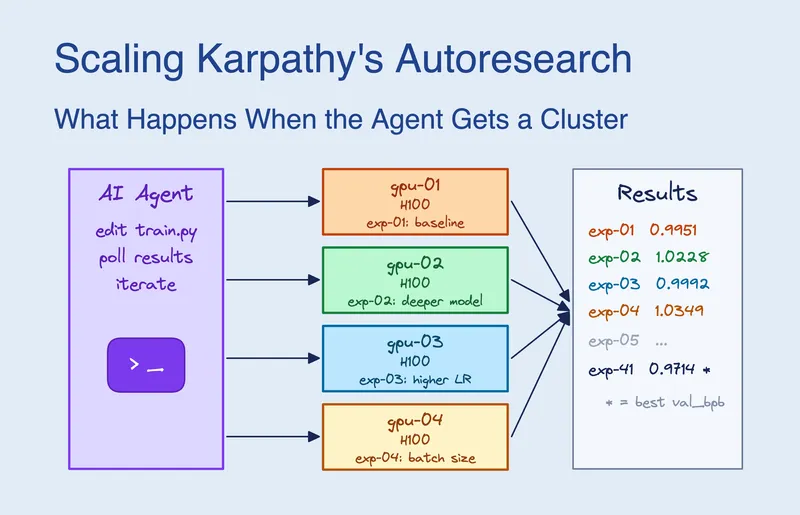

Scaling Karpathy's Autoresearch: What Happens When the Agent Gets a GPU Cluster

Mar 19, 2026

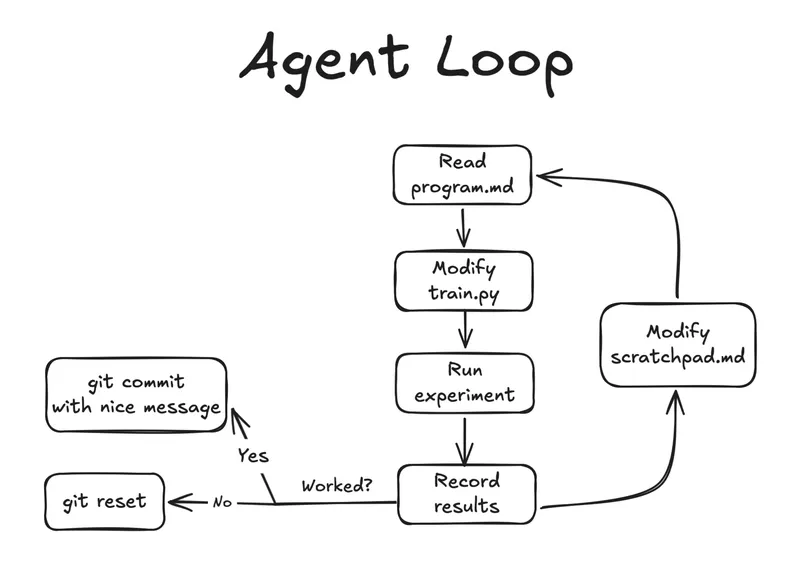

Autoresearch: Agents researching on single-GPU nanochat training automatically

Mar 7, 2026

Autoresearch on an old research idea

Mar 23, 2026

$500 GPU outperforms Claude Sonnet on coding benchmarks

Mar 26, 2026

Building for an audience of one: starting and finishing side projects with AI

Feb 17, 2026