Fast KV Compaction via Attention Matching

arxiv.org

February 20, 2026

Summary

Fast KV Compaction via Attention Matching targets the key-value (KV) cache, whose size is a bottleneck when scaling language models to long contexts. Rather than managing long contexts through summarization, which can be lossy, it compacts the cache directly in latent space.

Key Takeaways

- The proposed method, Attention Matching, enables fast context compaction in latent space, significantly improving key-value cache efficiency for language models.

- The approach reports up to 50x compaction on some datasets with little quality loss, while keeping the compaction step itself fast.

- Attention Matching builds a compact set of keys and values that reproduces attention outputs and preserves attention mass for each key-value head.

- The method decomposes into simple subproblems, some of which can be solved efficiently in closed form.
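
To make the takeaways above concrete, here is a minimal NumPy sketch of the general idea for a single KV head. It is an illustration under stated assumptions, not the paper's algorithm: the function names (`softmax_rows`, `compact_head`), the use of a fixed set of reference queries `Q`, and the heuristic of keeping the keys with the most attention mass are all hypothetical choices made for this example. The one piece it shares with the summary is the structure: once the compact keys are fixed, fitting compact values that reproduce the attention outputs is an ordinary least-squares problem with a closed-form solution.

```python
import numpy as np

def softmax_rows(scores):
    """Numerically stable softmax over each row."""
    scores = scores - scores.max(axis=1, keepdims=True)
    weights = np.exp(scores)
    return weights / weights.sum(axis=1, keepdims=True)

def compact_head(Q, K, V, m):
    """Toy compaction of one KV head from n entries down to m entries.

    Q: (q, d) reference queries (an assumption of this sketch)
    K: (n, d) keys, V: (n, dv) values
    Returns compact keys, compact values, kept indices, and the mean
    fraction of the original attention mass that the kept keys received.
    """
    d = K.shape[1]
    A = softmax_rows(Q @ K.T / np.sqrt(d))   # full attention weights, (q, n)
    target = A @ V                           # attention outputs to reproduce

    # Heuristic selection (hypothetical): keep the m keys that receive
    # the most total attention mass across the reference queries.
    keep = np.sort(np.argsort(A.sum(axis=0))[-m:])
    mass_kept = A[:, keep].sum(axis=1).mean()

    K_c = K[keep]
    A_c = softmax_rows(Q @ K_c.T / np.sqrt(d))   # attention over compact keys

    # Closed-form subproblem: choose V_c minimizing ||A_c @ V_c - target||.
    V_c, *_ = np.linalg.lstsq(A_c, target, rcond=None)
    return K_c, V_c, keep, mass_kept

# Usage on random data: compact 128 entries down to 16.
rng = np.random.default_rng(0)
Q = rng.normal(size=(64, 16))
K = rng.normal(size=(128, 16))
V = rng.normal(size=(128, 16))
K_c, V_c, keep, mass_kept = compact_head(Q, K, V, m=16)
print("compact shapes:", K_c.shape, V_c.shape)
print("mean attention mass on kept keys:", round(float(mass_kept), 3))
```

Refitting the values, rather than simply reusing `V[keep]`, can never increase the reconstruction error on the reference queries, because the original values are one of the candidates the least-squares solve considers. How well the compact cache generalizes to unseen queries depends on how the reference queries are chosen, which this sketch does not address.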

Community Sentiment

Positives

- The potential for high fidelity, fast compaction could significantly enhance the handling of long context in AI applications, addressing a critical limitation.

- This approach is promising for long-horizon tasks, suggesting it could improve performance in scenarios requiring sustained attention over extended inputs.

Concerns

- The reported compaction accuracies do not seem impressive, raising concerns about the effectiveness of this method in practical applications.

- The ongoing AI arms race may hinder the open publication of meaningful breakthroughs, limiting collaborative advancements in the field.

Related Articles

Speed at the cost of quality: Study of use of Cursor AI in open source projects (2025)

Mar 16, 2026

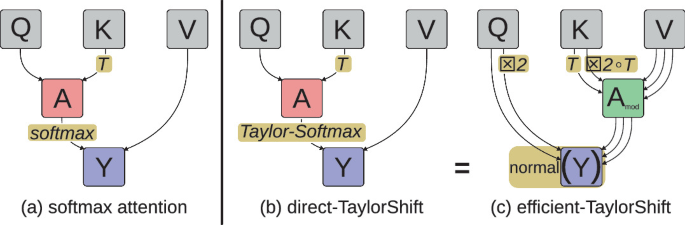

Attention at Constant Cost per Token via Symmetry-Aware Taylor Approximation

Feb 4, 2026

AI Self-preferencing in Algorithmic Hiring: Empirical Evidence and Insights

May 2, 2026

Apple: Embarrassingly Simple Self-Distillation Improves Code Generation

Apr 4, 2026

MegaTrain: Full Precision Training of 100B+ Parameter LLMs on a Single GPU

Apr 8, 2026