Local AI needs to be the norm

unix.foo

May 10, 2026

6 min read

Summary

Software features that depend on cloud-hosted AI models tend to be fragile and can compromise user privacy. The article argues that running models locally is a more robust and secure alternative that avoids these problems.

Key Takeaways

- Relying on cloud-hosted AI models creates software that is fragile, privacy-invasive, and dependent on external factors like server uptime and billing.

- Local devices have enough processing power to handle AI tasks, which makes applications faster, more private, and more reliable because requests no longer take a round trip to a server.

- Apple has invested in local AI model tooling, enabling developers to create applications that generate AI responses directly on devices without sending user data to third-party servers.

- The Brutalist Report app generates its summaries with on-device AI, showing that many use cases can be handled locally instead of through cloud services.

Community Sentiment

Positives

- Local AI models can operate effectively on consumer-grade hardware, making advanced AI capabilities more accessible and reducing reliance on cloud services.

- The potential for local AI to handle specific tasks without the need for cloud infrastructure could significantly lower costs for users and businesses.

- Emerging technologies like LoRAs enable efficient fine-tuning of models locally, allowing users to adapt AI to their specific needs without extensive resources.
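
To make the LoRA point concrete: in the standard formulation, a low-rank adapter freezes a weight matrix of shape d × k and trains only two small matrices, B (d × r) and A (r × k), whose product is added to the frozen weights. The sketch below just counts trainable parameters under that formulation. The layer size (4096 × 4096) and rank (8) are illustrative assumptions, not figures from the article or the discussion, and the function names are hypothetical.

```python
def full_params(d: int, k: int) -> int:
    """Trainable parameters when fine-tuning a dense d x k weight matrix directly."""
    return d * k


def lora_params(d: int, k: int, r: int) -> int:
    """Trainable parameters for a rank-r adapter: B is d x r, A is r x k."""
    return r * (d + k)


def reduction_factor(d: int, k: int, r: int) -> float:
    """How many times fewer parameters the adapter trains than full fine-tuning."""
    return full_params(d, k) / lora_params(d, k, r)


if __name__ == "__main__":
    # Assumed, illustrative sizes: one 4096 x 4096 projection, adapter rank 8.
    d, k, r = 4096, 4096, 8
    print(full_params(d, k))          # 16777216
    print(lora_params(d, k, r))       # 65536
    print(reduction_factor(d, k, r))  # 256.0
```

For this assumed layer, the adapter trains 256 times fewer parameters than full fine-tuning, which is the kind of saving that makes local adaptation plausible on consumer hardware.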

Concerns

- The immense computing power and large datasets required to train functional LLMs locally remain significant barriers for most users, limiting practical applications.

- Local models can still be proprietary, which limits transparency and control over training data and could lead to biased outputs.

- The lack of a clear business model for open-weight AI raises doubts about its sustainability and long-term viability in the market.

Related Articles

Every company building your AI assistant is now an ad company

Feb 20, 2026

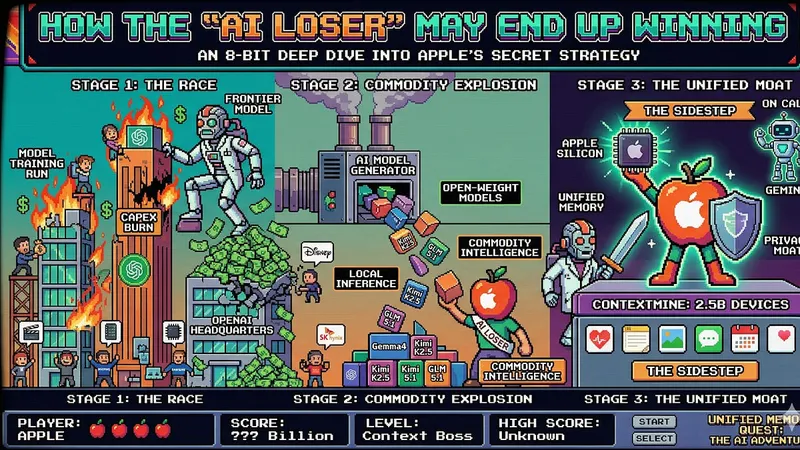

Apple's accidental moat: How the "AI Loser" may end up winning

Apr 13, 2026

Composition Shouldn't be this Hard

Apr 24, 2026

Big Tech : AI Isn’t Taking Your Job. Your Refusal to Use It Might.

Feb 7, 2026

The ladder is missing rungs – Engineering Progression When AI Ate the Middle

Mar 30, 2026