Local AI needs to be the norm

Relying on cloud-hosted AI models for software features can make applications fragile and compromise user privacy. The article argues that local AI is a more robust and secure alternative.

unix.foo

6 min

3d ago

AMD's local, open-source AI can now easily interact with your Gmail

AMD software engineers continue rapidly advancing their open-source software efforts around local AI/LLM use on consumer-class Radeon and Ryzen hardware. AMD GAIA 0.17.6 was released on Thursday with more improvements for local AI processing on Windows, Linux, and even macOS. For those trusting enough in local LLM pipelines to do ...

phoronix.com

2 min

5d ago

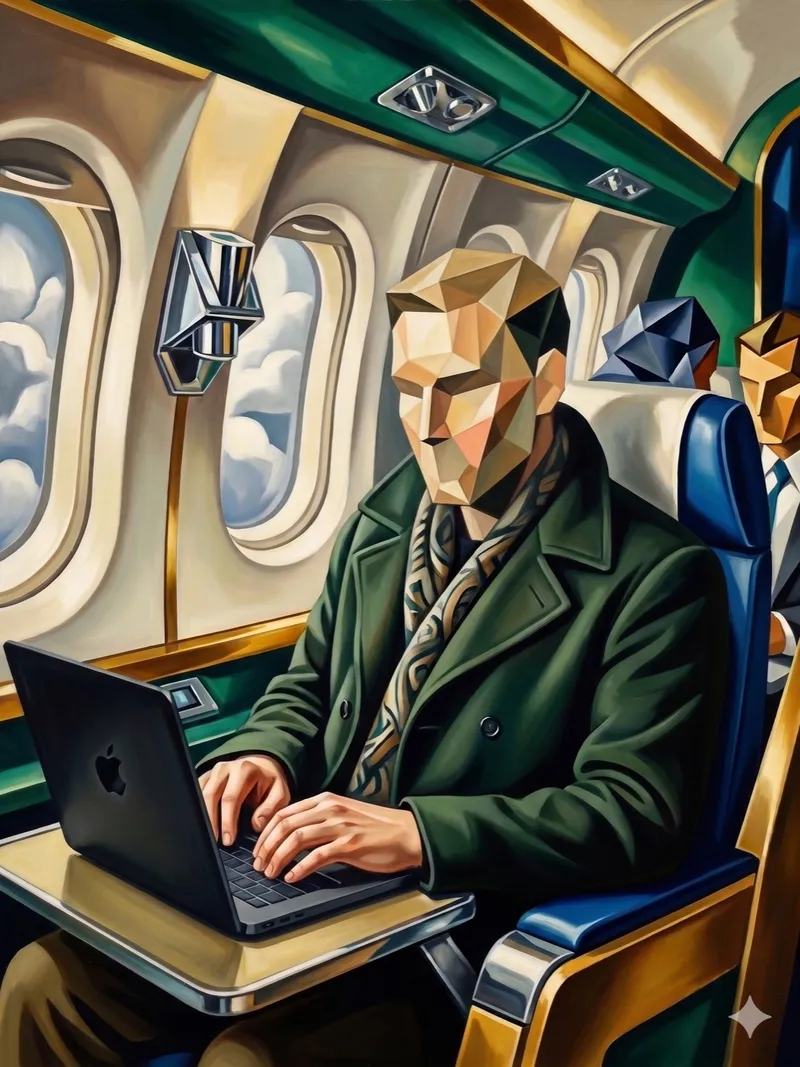

Running local LLMs offline on a ten-hour flight

A MacBook Pro M5 Max with 128GB of unified memory and a 40-core GPU was used to run local LLMs offline during a ten-hour flight. Gemma 4 31B and Qwen 4.6 36B were tested alongside the 100 most common Docker images and programming languages, and were used to build functional sites with rich visualizations.

deploy.live

4 min

4/27/2026

OpenClaw isn't fooling me. I remember MS-DOS

OpenClaw is designed to create a secure, always-on local AI agent. It leverages advanced security measures like virtual memory and access control lists to prevent unauthorized access, addressing vulnerabilities reminiscent of MS-DOS.

flyingpenguin.com

8 min

4/20/2026

Lemonade by AMD: a fast and open source local LLM server using GPU and NPU

Lemonade is an open-source local LLM server built for fast setup and broad compatibility across PCs, running models on GPUs and NPUs. It follows the OpenAI API standard, so applications written against that API can use it for local AI workflows.

lemonade-server.ai

1 min

4/2/2026
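Because Lemonade follows the OpenAI API standard, a client should be able to talk to it the way it would talk to any OpenAI-compatible chat completions endpoint. The minimal sketch below builds such a request using only the Python standard library. The base URL `http://localhost:8000/api/v1` and the model name `example-local-model` are assumptions for illustration, so check Lemonade's own documentation for the values on your install.

```python
import json
import urllib.request

# Assumed base URL for a local OpenAI-compatible server; adjust to your setup.
BASE_URL = "http://localhost:8000/api/v1"
# Hypothetical model identifier; use one your local server actually has loaded.
MODEL = "example-local-model"


def build_chat_request(prompt: str, base_url: str = BASE_URL,
                       model: str = MODEL) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completions request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send_chat_request(req: urllib.request.Request, timeout: float = 60.0) -> str:
    """Send the request to the local server and return the first reply's text."""
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        body = json.load(resp)
    # OpenAI-style responses put the reply under choices[0].message.content.
    return body["choices"][0]["message"]["content"]


if __name__ == "__main__":
    req = build_chat_request("Summarize why local inference helps privacy.")
    print(req.full_url)
    # Uncomment once a local server is running:
    # print(send_chat_request(req))
```

Separating request construction from sending keeps the request inspectable without a running server. Because the request shape is the standard one, pointing the same code at a different OpenAI-compatible server should only require changing the base URL and model name.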

Local LLM App by Ente

Ensu is Ente's offline LLM app designed to provide local language model capabilities, emphasizing privacy and control for users. The app aims to bridge the gap between advanced models and those that can run on personal devices, with its first release now available for download.

ente.com

5 min

3/25/2026

MacBook M5 Pro and Qwen3.5 = Local AI Security System

Qwen3.5-9B scores 93.8% on the benchmark, closely trailing GPT-5.4, while running entirely on a MacBook Pro M5 at 25 tok/s with a 765 ms time to first token (TTFT) and 13.8 GB of unified memory. The benchmark comprises 96 tests across 15 suites covering tool use, security classification, and event deduplication, and running locally means zero API costs and full data privacy.

sharpai.org

3 min

3/20/2026
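The two speed figures in the Qwen3.5 benchmark measure different things. TTFT is the wait before output starts, and tokens per second is the decode rate after that. A rough way to combine them is total time ≈ TTFT + output tokens / decode rate. This assumes a constant decode rate, which is a simplification, since real throughput varies with context length and load. The helper name and the 200-token response length below are hypothetical.

```python
def estimated_response_seconds(output_tokens: int, ttft_ms: float,
                               tokens_per_second: float) -> float:
    """Rough end-to-end latency: first-token wait plus steady-rate decoding.

    Assumes a constant decode rate, which real systems only approximate.
    """
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    # Convert TTFT to seconds, then add the time to decode all output tokens.
    return ttft_ms / 1000.0 + output_tokens / tokens_per_second


if __name__ == "__main__":
    # Reported figures: 765 ms TTFT, 25 tok/s; 200 output tokens is arbitrary.
    print(f"{estimated_response_seconds(200, 765, 25):.1f} s")  # prints "8.8 s"
```

For anything beyond a few dozen tokens, the decode rate dominates the estimate. This is why tokens per second often matters more than TTFT for long answers, while TTFT dominates perceived responsiveness for short ones.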

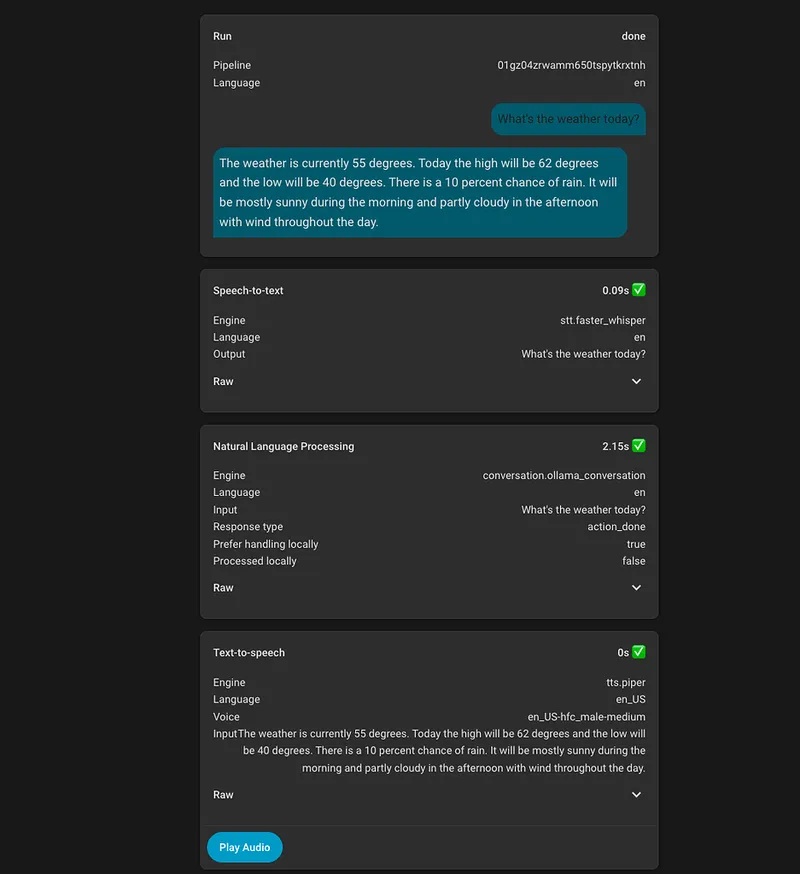

My Journey to a reliable and enjoyable locally hosted voice assistant

A locally hosted voice assistant was built using Home Assistant's Assist feature, local-first technology, and llama.cpp. The post describes the move from Google Home to this setup and the choices made to improve reliability and user experience.

community.home-assistant.io

11 min

3/16/2026

Ggml.ai joins Hugging Face to ensure the long-term progress of Local AI

ggml.ai, founded in 2023, has joined Hugging Face to advance the development and adoption of local AI technologies. The move is intended to give ggml.ai's ongoing work Hugging Face's support as it scales.

github.com

5 min

2/20/2026