Many SWE-bench-Passing PRs would not be merged

metr.org

March 11, 2026

18 min read

Summary

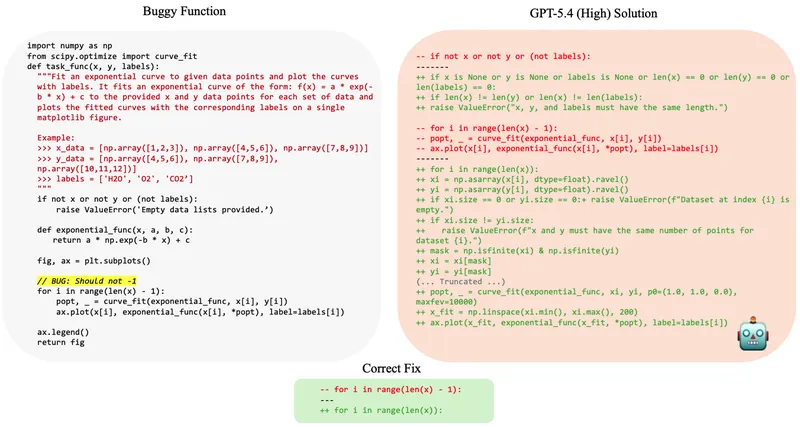

Approximately 50% of test-passing SWE-bench Verified pull requests created by AI agents between mid-2024 and mid-to-late 2025 would not be merged into the main branch by repository maintainers. The authors caution that this gap does not necessarily reflect a fundamental capability limitation, because the agents did not receive the iterative review feedback that human contributors typically get.

Key Takeaways

- Approximately 50% of test-passing SWE-bench Verified pull requests (PRs) generated by AI agents from mid-2024 to mid-to-late 2025 would not be merged into the main repository by maintainers.

- Maintainer merge rates are, on average, 24 percentage points lower than the corresponding SWE-bench scores, revealing a gap between benchmark performance and real-world usefulness.

- The study notes that AI agents lack the iterative feedback loop that human developers get from code review, which may limit their ability to meet maintainers' standards.

- Mapping benchmark scores to real-world AI capabilities is complex, and benchmarks should be considered as one of many factors in evaluating AI progress and impact.
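The relationship between a SWE-bench score and a maintainer merge rate can be illustrated with a small sketch. It assumes (this is our simplification, not necessarily METR's exact methodology) that a task counts as a real-world success only if the agent's PR both passes the tests and would be merged, so the merge rate is the score multiplied by the fraction of passing PRs a maintainer accepts. The class name, field names, and numbers below are hypothetical and are not METR's data.

```python
from dataclasses import dataclass


@dataclass
class ModelResult:
    name: str               # hypothetical model label
    swe_bench_score: float  # fraction of tasks whose PR passes the tests (0..1)
    merge_fraction: float   # assumed fraction of test-passing PRs a maintainer would merge (0..1)

    def maintainer_merge_rate(self) -> float:
        # Assumption: a task succeeds only if its PR passes tests AND would be merged.
        return self.swe_bench_score * self.merge_fraction

    def gap_pp(self) -> float:
        # Gap in percentage points (an absolute difference), not a relative percent.
        return 100 * (self.swe_bench_score - self.maintainer_merge_rate())


def average_gap_pp(results: list[ModelResult]) -> float:
    # Mean percentage-point gap across models.
    if not results:
        raise ValueError("need at least one result")
    return sum(r.gap_pp() for r in results) / len(results)


# Hypothetical inputs for illustration only.
results = [
    ModelResult("model-a", 0.40, 0.5),
    ModelResult("model-b", 0.60, 0.5),
]
print(round(average_gap_pp(results), 1))
```

Under this assumption, a 50% rejection rate among passing PRs does not translate into a fixed percentage-point gap: a model scoring 40% loses 20 points while one scoring 60% loses 30, which is why the average gap is reported separately from the rejection rate.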

Community Sentiment

Positives

- Despite concerns about benchmarks, the trend indicates that AI models are becoming increasingly capable, which is promising for future applications.

Concerns

- Many local models, such as MiniMax-M2.5, are scoring well on benchmarks but delivering unusable results, raising questions about the reliability of these evaluations.

- Test-based evaluations like SWE-bench fail to capture critical factors such as intent alignment and team preferences, suggesting they should be viewed as weak indicators rather than definitive measures.