Why SWE-bench Verified no longer measures frontier coding capabilities

openai.com

April 26, 2026

9 min read

🔥🔥🔥🔥🔥

56/100

Summary

SWE-bench Verified is becoming less reliable for measuring frontier coding capabilities due to contamination. SWE-bench Pro is recommended as a more accurate alternative for assessing models on autonomous software engineering tasks.

Key Takeaways

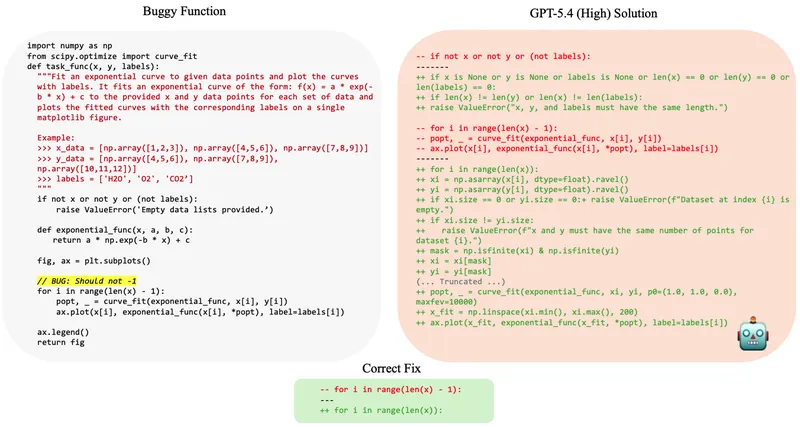

- SWE-bench Verified is no longer suitable for measuring progress in autonomous software engineering capabilities due to flaws in its test cases and contamination from training data.

- An analysis revealed that 59.4% of audited problems in SWE-bench Verified contained flawed test cases that rejected correct solutions.

- All tested frontier models were able to reproduce original bug fixes or problem specifics, indicating they had been exposed to the benchmark during training.

- OpenAI recommends transitioning to SWE-bench Pro for more reliable evaluations of coding capabilities.

Community Sentiment

Positives

- The introduction of novel benchmarks like Zork bench aims to address the limitations of existing coding benchmarks by focusing on unique challenges that LLMs struggle to solve.

- The anticipation for ARC-AGI-3 highlights the community's interest in reasoning-heavy benchmarks, which could better evaluate model capabilities beyond simple tasks.

- The expectation of improvement in models like Claude Opus 4.6 suggests that ongoing advancements in AI will lead to better performance in challenging benchmarks.

Concerns

- The revelation that a significant portion of SWE-bench Verified's test cases were flawed raises serious concerns about the validity of benchmarks used to measure AI capabilities.

- The community's skepticism about the reliability of benchmarks reflects a broader issue in AI evaluation, where many models may not accurately represent real-world performance.

- Concerns about the potential for benchmarks to be gamed or optimized for marketing purposes undermine trust in their effectiveness for assessing true AI capabilities.