Attention at Constant Cost per Token via Symmetry-Aware Taylor Approximation

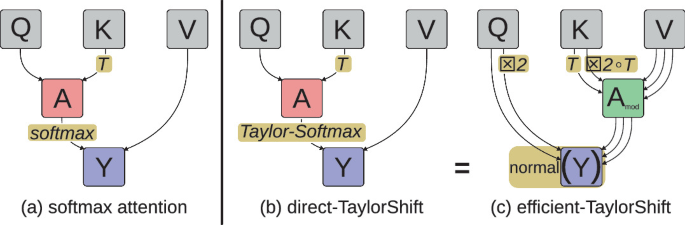

Self-attention in Transformers typically incurs costs that grow with context length, increasing demands for storage, compute, and energy. A new method based on a symmetry-aware Taylor approximation aims to keep the cost of self-attention constant per token, which could ease these resource demands.

arxiv.org

2 min

2/4/2026
