Advancing AI Benchmarking with Game Arena

blog.google

February 2, 2026

5 min read

Summary

Google DeepMind and Kaggle launched Game Arena, a public benchmarking platform where AI models compete in strategic games, starting with chess. The platform aims to advance AI that can make decisions in environments with incomplete information.

Key Takeaways

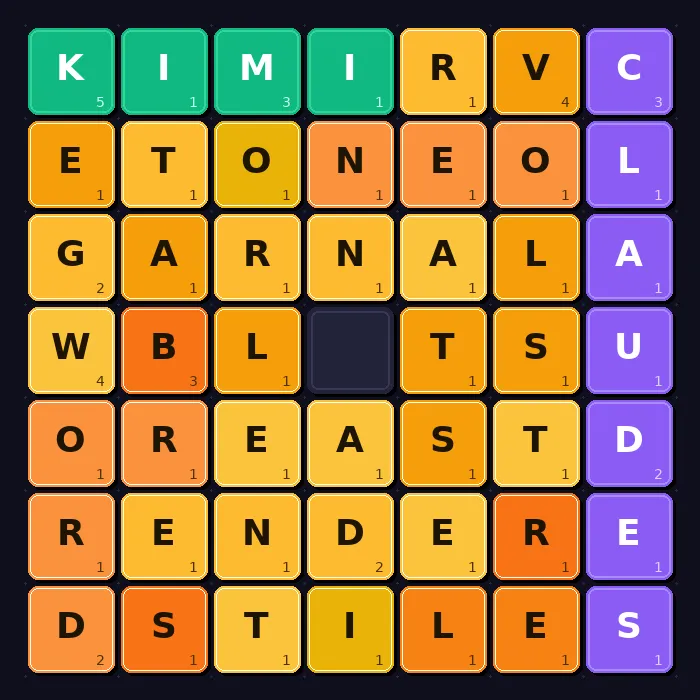

- Google DeepMind expanded the Game Arena benchmarking platform to include new games, Werewolf and poker, to assess AI models' abilities in social dynamics and calculated risk.

- The chess benchmark released last year evaluates AI models on strategic reasoning and long-term planning, with Gemini 3 Pro and Gemini 3 Flash currently leading the leaderboard.

- Werewolf, a social deduction game, tests AI models on communication, negotiation, and the ability to navigate ambiguity, essential skills for future AI assistants.

- Game Arena serves as a controlled environment for agentic safety research, allowing assessment of how well AI models detect manipulation and deception.

Community Sentiment

Positives

- Implementing a new benchmark like CodeClash allows for innovative comparisons between AI agents, revealing deeper insights into their coding capabilities and performance.

- Benchmarking AI through competitive gaming scenarios can lead to significant advancements in understanding model strengths and weaknesses in real-world applications.

Concerns

- Some question the relevance of an AI model's ability to play games like chess, arguing that programming a chess engine may be a more practical application than playing the game itself.