DeepSeek 4 Flash local inference engine for Metal

github.com

May 7, 2026

15 min read


Summary

DeepSeek 4 Flash is a native Metal inference engine built specifically to execute DeepSeek V4 Flash graphs. It handles model loading, prompt rendering, KV state management, and a server API, and it builds on work from llama.cpp and GGML.

Key Takeaways

- The engine is specialized for Metal and targets efficient local inference of DeepSeek V4 Flash, with a context window of 1 million tokens.

- The model runs faster than smaller dense models because fewer of its parameters are active per token, and its thinking sections are shorter, scaling with the complexity of the problem.

- DeepSeek V4 Flash supports 2-bit quantization, which lets it run on MacBooks with 128 GB of RAM.

- The project deliberately focuses on one model at a time and builds in testing and validation, so that local inference on high-end personal machines is credible.
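The memory and speed claims above can be sanity-checked with back-of-envelope arithmetic. The sketch below is a rough estimate, not anything taken from the project: it counts weight storage only (ignoring the KV cache, activations, and quantization scale overhead) and uses the common approximation that token-by-token decoding is memory-bandwidth bound, so each generated token must read every active weight once. The function names, the 400 GB/s bandwidth, and the parameter counts are hypothetical placeholders, not published DeepSeek V4 Flash or Apple hardware figures.

```python
# Back-of-envelope estimates. All sizes and bandwidths used below are
# hypothetical placeholders, not published DeepSeek V4 Flash figures.

GB = 10**9  # decimal gigabytes


def weight_bytes(num_params: float, bits_per_weight: float) -> float:
    """Bytes needed to store the weights alone at a given quantization width."""
    return num_params * bits_per_weight / 8


def max_params_that_fit(ram_bytes: float, bits_per_weight: float,
                        reserved_fraction: float = 0.0) -> float:
    """Upper bound on the parameter count whose weights fit in RAM, after
    reserving a fraction of memory for the OS, KV cache, and activations."""
    usable = ram_bytes * (1.0 - reserved_fraction)
    return usable * 8 / bits_per_weight


def decode_tokens_per_sec(bandwidth_bytes_per_s: float, active_params: float,
                          bits_per_weight: float) -> float:
    """Bandwidth-bound decode rate: each token reads every active weight once,
    so the rate is roughly bandwidth divided by the bytes of active weights."""
    return bandwidth_bytes_per_s / weight_bytes(active_params, bits_per_weight)


if __name__ == "__main__":
    ram = 128 * GB
    # Weight-only ceiling at 2 bits per weight, with no memory reserved.
    print(max_params_that_fit(ram, 2) / 1e9, "billion params")
    # Same budget with a hypothetical 25% held back for KV cache and the OS.
    print(max_params_that_fit(ram, 2, reserved_fraction=0.25) / 1e9, "billion params")

    bw = 400 * GB  # hypothetical memory bandwidth in bytes per second
    dense = decode_tokens_per_sec(bw, active_params=30e9, bits_per_weight=4)
    sparse = decode_tokens_per_sec(bw, active_params=15e9, bits_per_weight=4)
    print(f"dense: {dense:.1f} tok/s, fewer active params: {sparse:.1f} tok/s")
```

With these placeholder numbers, 128 GB at 2 bits per weight caps weight storage at roughly 512 billion parameters before anything is reserved, and halving the active parameters doubles the estimated decode rate, which is the intuition behind a large sparse model outpacing a smaller dense one.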

Related Articles

Flash-MoE: Running a 397B Parameter Model on a Laptop

Mar 22, 2026

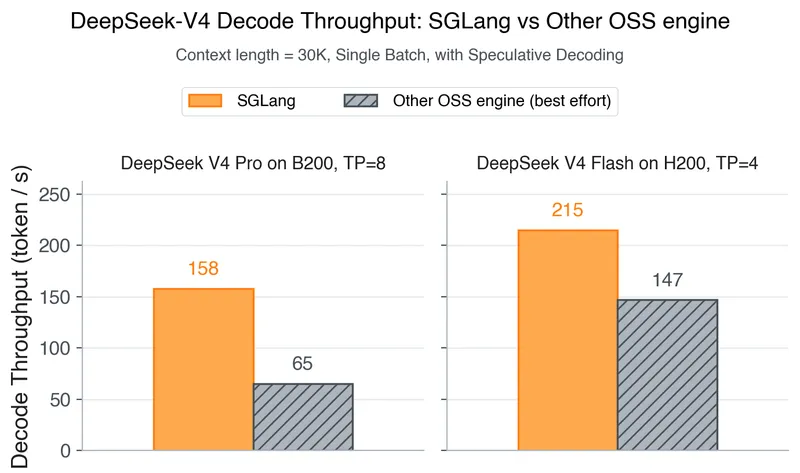

DeepSeek-V4 on Day 0: From Fast Inference to Verified RL with SGLang and Miles

Apr 25, 2026

Rust implementation of Mistral's Voxtral Mini 4B Realtime runs in your browser

Feb 10, 2026

Parakeet.cpp – Parakeet ASR inference in pure C++ with Metal GPU acceleration

Feb 27, 2026

Running Gemma 4 locally with LM Studio's new headless CLI and Claude Code

Apr 5, 2026