Flash-MoE: Running a 397B Parameter Model on a Laptop

github.com

March 22, 2026

Summary

Flash-MoE is a pure C/Metal inference engine that runs Qwen3.5-397B-A17B, a 397-billion-parameter Mixture-of-Experts model, on a MacBook Pro with 48GB RAM at over 4.4 tokens per second. The 209GB model streams from SSD through a custom Metal compute pipeline, with no Python or other frameworks involved.
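As a rough illustration of what streaming a model from SSD can look like in C, here is a minimal sketch that reads one expert's bytes from a weight file with plain POSIX `pread`, leaving caching to the operating system. The file layout (experts stored back to back at a fixed size) and the names `open_weights` and `read_expert` are assumptions made for this example; the summary does not describe the project's actual file format or I/O calls.

```c
#define _XOPEN_SOURCE 700  /* expose pread() under strict compiler modes */
#include <assert.h>
#include <errno.h>
#include <fcntl.h>
#include <stddef.h>
#include <sys/types.h>
#include <unistd.h>

/* Hypothetical helper: open a weight file read-only.
 * Returns a file descriptor, or -1 on failure. */
static int open_weights(const char *path)
{
    return open(path, O_RDONLY);
}

/* Hypothetical helper: copy expert number `expert_index` into `dst`.
 * Assumes experts are stored back to back, each `expert_bytes` long.
 * Returns 0 on success, -1 on an I/O error or if the file is too short. */
static int read_expert(int fd, size_t expert_index, size_t expert_bytes,
                       void *dst)
{
    assert(dst != NULL);
    off_t base = (off_t)expert_index * (off_t)expert_bytes;
    size_t done = 0;

    /* pread may return fewer bytes than requested, so loop until done. */
    while (done < expert_bytes) {
        ssize_t n = pread(fd, (char *)dst + done, expert_bytes - done,
                          base + (off_t)done);
        if (n < 0) {
            if (errno == EINTR)
                continue;   /* interrupted by a signal: retry */
            return -1;      /* real I/O error */
        }
        if (n == 0)
            return -1;      /* hit end of file before the expert was complete */
        done += (size_t)n;
    }
    return 0;
}
```

Because `pread` goes through the kernel's page cache, an expert that was read recently is served from RAM on the next call without any cache logic in the program itself.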

Key Takeaways

- Qwen3.5-397B-A17B, a 397-billion-parameter Mixture-of-Experts model, runs on a MacBook Pro with 48GB RAM at over 4.4 tokens per second using a custom C/Metal inference engine.

- The 209GB model is streamed from SSD with no reliance on Python or frameworks; the engine uses only C, Objective-C, and hand-tuned Metal shaders.

- The architecture has 60 transformer layers with 512 experts per layer, of which K=4 are activated per token.

- Relying on the OS page cache to cache expert data yields a 71% hit rate, outperforming custom caching approaches.
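To make the "K=4 of 512 experts" figure concrete, the following is a minimal sketch of top-K expert selection in C. It assumes the router has already produced one probability per expert, picks the K largest, and renormalizes them so the mixing weights sum to 1. This is a generic Mixture-of-Experts routing pattern, not code taken from the project, and the name `route_top_k` is hypothetical.

```c
#include <assert.h>
#include <stddef.h>

#define NUM_EXPERTS 512  /* experts per layer, as stated in the summary */
#define TOP_K 4          /* experts activated per token, as stated in the summary */

/* Hypothetical helper: pick the TOP_K largest router probabilities.
 * probs[]  : n non-negative router probabilities, one per expert.
 * idx[]    : receives the chosen expert indices, highest probability first.
 * weight[] : receives the matching mixing weights, renormalized to sum to 1. */
static void route_top_k(const float *probs, size_t n,
                        int idx[TOP_K], float weight[TOP_K])
{
    assert(n >= TOP_K);

    float best[TOP_K];
    for (int k = 0; k < TOP_K; k++) {
        idx[k] = -1;
        best[k] = -1.0f;  /* below any valid probability */
    }

    /* Keep a small array sorted in descending order; insert each candidate. */
    for (size_t e = 0; e < n; e++) {
        float p = probs[e];
        int pos = TOP_K;
        while (pos > 0 && p > best[pos - 1])
            pos--;
        if (pos == TOP_K)
            continue;  /* not among the current top K */
        for (int k = TOP_K - 1; k > pos; k--) {
            best[k] = best[k - 1];
            idx[k] = idx[k - 1];
        }
        best[pos] = p;
        idx[pos] = (int)e;
    }

    /* Renormalize so the selected experts' weights sum to 1. */
    float sum = 0.0f;
    for (int k = 0; k < TOP_K; k++)
        sum += best[k];
    assert(sum > 0.0f);
    for (int k = 0; k < TOP_K; k++)
        weight[k] = best[k] / sum;
}
```

In a real layer this would be called with `n = NUM_EXPERTS`; only the four selected experts then need their weights loaded and evaluated for that token, which is why an engine can leave most of the model on disk at any given moment.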

Community Sentiment

Positives

- Running the 397B parameter Qwen 3.5 model on consumer devices is now feasible, showcasing advancements in model quantization and accessibility.

- Achieving an 87.86% score on the MMLU benchmark indicates that the model performs well even on limited hardware, which is promising for broader AI applications.

- The success of running the model on an M1 Ultra with substantial context length highlights the potential for high-performance AI on consumer-grade devices.

Concerns

- Reducing the number of experts per token to fit the model on consumer hardware may significantly degrade performance, raising concerns about the trade-offs in quality.

- The reliance on 2-bit quantization for running large models could lead to inadequate performance for real-world applications, limiting their practical usability.

Related Articles

TurboQuant KV Compression and SSD Expert Streaming for M5 Pro and IOS

Apr 1, 2026

DeepSeek 4 Flash local inference engine for Metal

May 7, 2026

Making LLM Training Faster with Unsloth and NVIDIA

May 7, 2026

We got 207 tok/s with Qwen3.5-27B on an RTX 3090

Apr 20, 2026

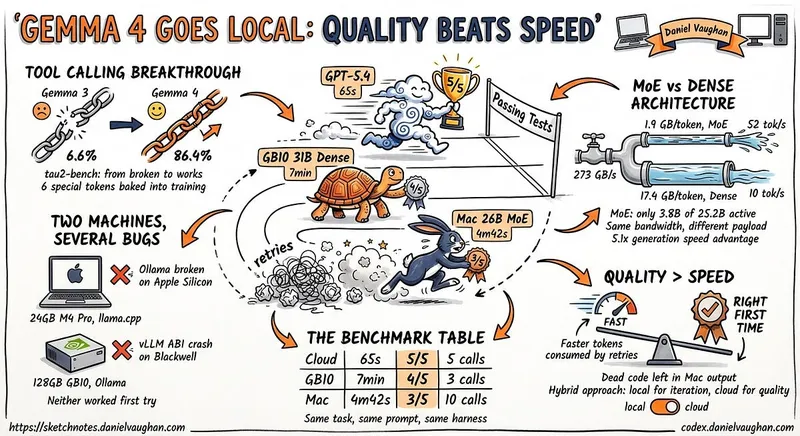

I ran Gemma 4 as a local model in Codex CLI

Apr 12, 2026