LLM Neuroanatomy II: Modern LLM Hacking and Hints of a Universal Language?

dnhkng.github.io

March 24, 2026

20 min read

🔥🔥🔥🔥🔥

54/100

Summary

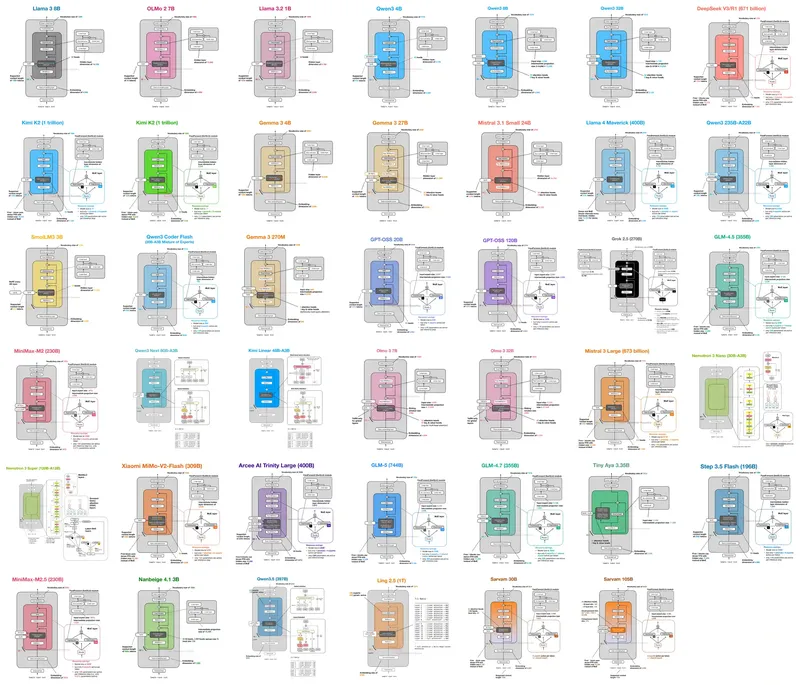

Duplicating a block of seven middle layers in Qwen2-72B, with no weight changes and no further training, produced a top model on the HuggingFace Open LLM Leaderboard. Several strong open-source models have emerged since mid-2024, including Qwen3.5, MiniMax, and GLM-4, which raises the question of whether the same trick still works on them.
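Layer duplication of this kind can be pictured as a change to the order in which existing layers are executed. The sketch below is a minimal illustration and not the author's code: `relayer_order` and `forward` are hypothetical helper names, the toy "layers" are plain Python functions standing in for Transformer blocks, and the block position is chosen only for the example.

```python
from typing import Callable, List, Sequence


def relayer_order(num_layers: int, start: int, end: int) -> List[int]:
    """Return layer indices with the block [start, end) executed twice in a row.

    No layer is copied or modified; the same indices simply appear twice,
    so the repeated pass reuses the original weights.
    """
    if not 0 <= start < end <= num_layers:
        raise ValueError("block must satisfy 0 <= start < end <= num_layers")
    # Run layers 0..end-1, then jump back to `start` and run to the last layer.
    return list(range(end)) + list(range(start, num_layers))


def forward(layers: Sequence[Callable[[float], float]],
            order: Sequence[int], x: float) -> float:
    """Apply the layers to x in the given execution order."""
    for index in order:
        x = layers[index](x)
    return x


# Toy stand-in for a 10-layer model: layer k just adds k to its input.
layers = [lambda x, k=k: x + k for k in range(10)]

# Hypothetical block: repeat layers 4, 5 and 6.
order = relayer_order(num_layers=10, start=4, end=7)

baseline = forward(layers, range(10), 0.0)
repeated = forward(layers, order, 0.0)
print(order)
print(baseline, repeated)
```

In a real model the same idea would apply to the list of decoder blocks rather than to toy functions. For an 80-layer model with a seven-layer block, `relayer_order` yields 87 layer applications while the set of stored weights stays the same.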

Key Takeaways

- The RYS (Repeat Your Self) method duplicates layers without changing their weights; applied to Qwen2-72B, it improved the model's leaderboard performance.

- Relayering techniques remain effective on modern models, including Qwen3.5-27B, which suggests that the benefit reflects a general property of Transformer architectures rather than a quirk of one model.

- An experiment confirmed a three-phase structure in language models: early layers encode, middle layers reason, and late layers decode. This hints at a universal "thinking space" shared across languages.

- Pairwise cosine similarity tests across languages and content types indicated that the middle layers of the model operate in a format-agnostic reasoning space.
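The pairwise cosine similarity test mentioned in the last takeaway can be sketched as follows. This is a minimal illustration under stated assumptions, not the article's actual pipeline: `cosine` and `pairwise_cosine` are hypothetical helper names, and the short vectors are made-up stand-ins for hidden states taken from one layer for the same content in different languages or formats.

```python
import math
from typing import Dict, Sequence, Tuple


def cosine(u: Sequence[float], v: Sequence[float]) -> float:
    """Cosine similarity between two equal-length vectors."""
    if len(u) != len(v):
        raise ValueError("vectors must have the same length")
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_u * norm_v)


def pairwise_cosine(
    vectors: Dict[str, Sequence[float]]
) -> Dict[Tuple[str, str], float]:
    """Cosine similarity for every unordered pair of named vectors."""
    names = list(vectors)
    return {
        (a, b): cosine(vectors[a], vectors[b])
        for i, a in enumerate(names)
        for b in names[i + 1:]
    }


# Made-up toy vectors (assumption): one "hidden state" per input variant.
hidden = {
    "english": [1.0, 0.0],
    "chinese": [2.0, 0.0],   # same direction as "english", different length
    "unrelated": [0.0, 3.0], # orthogonal to both
}

similarities = pairwise_cosine(hidden)
for pair, value in similarities.items():
    print(pair, round(value, 3))
```

Repeating this per layer and comparing the scores across depth is one way to see where representations of the same content in different languages or formats become similar. Cosine similarity ignores vector length, so it compares direction only.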

Community Sentiment

Positives

- The research highlights the potential of repeated layers in LLMs: they could improve performance without increasing memory usage, which would make the approach attractive for edge applications.

- The findings on language-agnostic representations suggest that LLMs can effectively process multiple languages, which could lead to more universal AI applications across diverse linguistic contexts.

- The observation that cross-language representations converge in early layers indicates a promising direction for improving multilingual model training and efficiency.

Concerns

- The complexity of the research may hinder understanding and accessibility for those less familiar with LLM architectures, potentially limiting its impact on broader audiences.

- Uncertainty remains about the performance implications of duplicating layer sets, indicating that further exploration is needed to fully understand the benefits of this approach.
